The controversy over the recently announced decision by YouTube to remove publicly viewable “Dislike” counts from all videos is continuing to grow. Many YT creators feel that the loss of a publicly viewable Like/Dislike ratio will be a serious detriment. I know that I consider that ratio useful.

There are some good arguments by Google/YouTube for this action, particularly relating to harassment campaigns targeting the Dislikes on specific videos. However, I believe that YouTube has gone too far in this instance, when a more nuanced approach would be preferable.

In particular, my view is that it is reasonable to remove the publicly viewable Dislike counts from videos by default, but that creators should be provided with an option to re-enable those counts on their specific videos (or on all of their videos) if they wish to do so.

With YouTube removing the counts by default, YouTube creators who are not aware of these issues will be automatically protected. But creators who feel that showing Dislike counts is good for them could opt to display them. Win-win!

–Lauren–

UPDATE (September 3, 2021): Apple has now announced that “based on feedback” they are delaying the launch of this project to “collect input and make improvements” before release.

– – –

Apple’s newly revealed plan to scan users’ Apple devices for photos and messages related to child abuse is actually fairly easy to explain from a high-level technical standpoint.

Apple has abandoned their “end-to-end” encrypted messaging promises. They’re gone. Poof! Flushed down the john. Because a communication system that supposedly is end-to-end encrypted — but has a backdoor built into user devices — is like being sold a beautiful car and discovering after the fact that it doesn’t have any engine. It’s fraudulent.

The depth of Apple’s betrayal of its users is not specifically in the context of dealing with child abuse — which we all agree is a very important issue indeed — but that by building any kind of backdoor mechanism into their devices they’ve opened the legal door to courts and other government entities around the world to make ever broader demands for secret, remote access to the data on your Apple phones and other devices. And even if you trust your government today with such power — imagine what a future government in whom you have less faith may do.

In essence, Apple has given away the game. It’s as if you went into a hospital to have your appendix removed, and when you awoke you learned that they also removed one of your kidneys and an eye. Surprise!

There is no general requirement that Apple (or other firms) provide end-to-end crypto in their products. But Apple has routinely proclaimed itself to be a bastion of users’ privacy, while simultaneously being highly critical of various other major firms’ privacy practices.

That’s all just history now, a popped balloon. Apple hasn’t only jumped the shark, they’ve fallen into the water and are sinking like a stone to the bottom.

–Lauren–

As the COVID “Delta” variant continues its spread around the globe, the Biden administration has deployed something of a basketball-style full-court press against misinformation on social media sites. That its intentions are laudable is evident and not at issue. Misinformation on social media and in other venues (such as various cable “news” channels) definitely plays a major role in vaccine hesitancy — though it appears that political and peer allegiances play a significant role in this as well, even for persons who have accurate information about the available vaccines.

Yet good intentions by the administration do not necessarily always translate into optimum statements and actions, especially in an ecosystem as large and complex as social media. When President Biden recently asserted that Facebook is “killing people” (a statement that he later walked back) it raised many eyebrows both in the U.S. and internationally.

I implied above that the extent to which vaccine misinformation (as opposed to or in combination with other factors) is directly related to COVID infections and/or deaths is not a straightforward metric. But we can still certainly assert that Facebook has traditionally been an enormous — likely the largest — source of misinformation on social media. And it is also true, as Facebook strongly retorted in the wake of Biden’s original remark, that Facebook has been working to reduce COVID misinformation and increase the viewing of accurate disease and vaccine information on their platform. Other firms such as Twitter and Google have also been putting enormous resources toward misinformation control (and its subset of “disinformation” — which is misinformation being purposely disseminated with the knowledge that it is false).

But for those both inside and outside government who assert that these firms “aren’t doing enough” to control misinformation, there are technical realities that need to be fully understood. And key among these is this: There is no practical way to eliminate all misinformation from these platforms. It is fundamentally impossible without preventing ordinary users from posting content at all — at which point these platforms wouldn’t be social media any longer.

Even if it were possible for a human moderator (or humans in concert with automated scanning) to pre-moderate every single user posting before permitting it to be seen and/or shared publicly, differences in interpretation (“Is this statement in this post really misinformation?”), errors, and other factors would mean that some misinformation is bound to spread — and that can happen very quickly and in ways that would not necessarily be easily detected either by human moderators or by automated content scanning systems. But this is academic. Without drastically curtailing the amount of User Generated Content (UGC) being submitted to these platforms, such pre-moderation models are impractical.

Some other statements from the administration also triggered concerns. The administration appeared to suggest that the same misinformation standards should be applied by all social media firms — a concept that would obviously eliminate the ability of the Trust & Safety teams at these firms to make independent decisions on these matters. And while the administration denied that it was dictating to firms what content should be removed as misinformation, they did say that they were in frequent contact with firms about perceived misinformation. Exactly what that means is uncertain. The administration also said that a short list of “influencers” were responsible for most misinformation on social media — though it wasn’t really apparent what the administration would want firms to do with that list. Disable all associated accounts? Watch those accounts more closely for disinformation? I certainly don’t know what was meant.

But the fundamental nature of the dilemma is even more basic. For governments to become involved at all in social media firms’ decisions about misinformation is a classic slippery slope, for multiple reasons.

Even if government entities are only providing social media firms with “suggestions” or “pointers” to what they believe to be misinformation, the oversized influence that these could have on firms’ decisions cannot be overestimated, especially when some of these same governments have been threatening these same firms with antitrust and other actions.

Perhaps of even more concern, government involvement in misinformation content decisions could potentially undermine the currently very strong argument that these firms are not subject to First Amendment considerations, and so are able to make their own decisions about what content they will permit on their platforms. Loss of this crucial protection would be a big win for those politicians and groups who wish to prevent social media firms from removing hate speech and misinformation from their platforms. So ironically, government involvement in suggesting that particular content is misinformation could end up making it even more difficult for these firms to remove misinformation at all!

Even if you feel that the COVID crisis is reason enough to endorse government involvement in social media content takedowns, please consider for a moment the next steps. Today we’re talking about COVID misinformation. What sort of misinformation — there’s a lot out there! — will we be talking about tomorrow? Do we want the government urging content removal about various other kinds of misinformation? How do we even define misinformation in widely different subject areas?

And even if you agree with the current administration’s views on misinformation, how do you know that you will agree with the next administration’s views on these topics? If you want the current administration to have these powers, will you be agreeable to potentially a very different kind of administration having such powers in the future? The previous administration and the current one have vastly diverging views on a multitude of issues. We have every reason to expect at least some future administrations to follow this pattern.

The bottom line is clear. Even with the best of motives, governments should not be involved in content decisions involving misinformation on social media. Period.

–Lauren–

Ransomware is currently a huge topic in the news. A crucial gasoline pipeline shuts down. A major meat processor is sidelined. It almost feels as if there are new announced ransomware attacks every few days, and there are certainly many such attacks that are never made public.

We see commentators claiming that ransomware attacks are the software equivalent of 9/11, and that perpetrators should be treated as terrorists. Over on one popular right-wing news channel, a commentator gave a literal “thumbs up” to the idea that ransomware perpetrators might be assassinated.

The Biden administration and others are suggesting that if Russia’s Putin isn’t responsible for these attacks, he at least must be giving his tacit approval to the ones apparently originating there. For his part, Putin is laughing off such ideas.

There clearly is political hay to be made from linking ransomware attacks to state actors, but it is certainly true that ransomware attacks can potentially have much the same devastating impacts on crucial infrastructure and operations as more “traditional” cyberattacks.

And while it is definitely possible for a destruction-oriented cyberattack to masquerade as a ransomware attack, it is also true that the vast majority of ransomware attacks appear to be aimed not at actually causing damage, but at the rather more prosaic purpose of extorting money from the targeted firms.

All this having been said, there is actually a much more alarming bottom line. The vast majority of these ransomware attacks are not terribly sophisticated in execution. They don’t need to depend on armies of top-tier black-hat hackers. They usually leverage well-known authentication weaknesses, such as corporate networks accessible without robust 2-factor authentication techniques, and/or firms’ reliance on outmoded firewall/VPN security models.

Too often, we see that a single compromised password gives attackers essentially unlimited access behind corporate firewalls, with predictably dire results.

The irony is that the means to avoid these kinds of attacks are already available — but too many firms just don’t want to make the efforts to deploy them. In effect, their systems are left largely exposed — and then there’s professed surprise when the crooks simply saunter in! There are hobbyist forums on the Net, having already implemented these security improvements, that are now actually better protected than many major corporations!

I’ve discussed the specifics many times in the past. The use of 2-factor (aka 2-step) authentication can make compromised username/password combinations far less useful to attackers. When FIDO/U2F security keys are properly deployed to provide this authentication, successful fraudulent logins tend rapidly toward nil.

Combine these security key models with “zero trust” authentication, such as Google’s “BeyondCorp” (https://cloud.google.com/beyondcorp), and security is enhanced even further, since an attacker who simply penetrates a firewall or a compromised VPN no longer finds themselves with largely unfettered access to targeted internal corporate resources.
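
To make the contrast concrete, here is a minimal sketch in Python of the two access models described above. It is only an illustration under stated assumptions: the `Request` fields, the `ACCESS` table, and both function names are hypothetical, and a real deployment (BeyondCorp included) involves far more than this, such as device state and centrally managed policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    user: str
    password_ok: bool       # username/password check passed
    security_key_ok: bool   # hardware security key (second factor) check passed
    inside_firewall: bool   # request arrives from the internal network or VPN
    resource: str


# Hypothetical per-user access list; a real system would query a policy service.
ACCESS = {"alice": {"payroll"}, "bob": {"wiki"}}


def perimeter_allows(req: Request) -> bool:
    """Old firewall/VPN model: being inside the perimeter with a password is enough."""
    return req.inside_firewall and req.password_ok


def zero_trust_allows(req: Request) -> bool:
    """Zero-trust style check: network location is ignored, and every request
    needs both factors plus explicit authorization for the specific resource."""
    return (
        req.password_ok
        and req.security_key_ok
        and req.resource in ACCESS.get(req.user, set())
    )


# An attacker holding bob's stolen password, already inside the VPN,
# but without bob's physical security key.
stolen = Request("bob", password_ok=True, security_key_ok=False,
                 inside_firewall=True, resource="payroll")

print(perimeter_allows(stolen))   # True: the single compromised password is enough
print(zero_trust_allows(stolen))  # False: no security key, and no right to "payroll"
```

In this toy model the stolen password alone gets the attacker through the perimeter check, while the per-request check fails twice over: once on the missing security key and once on the resource authorization.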

These kinds of security tools are available immediately. There is no need to wait for government actions or admissions from Putin! And sooner rather than later, firms and institutions that continue to stall on deploying these kinds of security methodologies will likely find themselves answering ever more pointed questions from their stockholders or other stakeholders, demanding to know why these security improvements weren’t already made *before* these organizations were targeted by new highly publicized ransomware attacks!

–Lauren–

While we’re all still reeling from the recent horrific, tragic, and utterly preventable incidents of mass shooting murders, inside the D.C. beltway today events are taking place that could put innumerable medically challenged Americans at deep risk — and the culprit is Louis DeJoy, the Postal Service (USPS) Postmaster General and Trump megadonor.

His 10-year plan for destroying the USPS, by treating it like his former for-profit shipping logistics business rather than the SERVICE it was intended to be, was released today, along with a flurry of self-congratulatory official USPS tweets that immediately attracted massive negative replies, most of them demanding that DeJoy be removed from his position. Now. Right now!

I strongly concur with this sentiment.

Even as first class and other mail delays have already been terrifying postal customers dependent on the USPS for critical prescription medications and other crucial products, DeJoy’s plan envisions even longer mail delays — including additional days of delay for delivery of local first class mail, banning first class mail from air shipping, raising rates, cutting back on post office hours, and — well, you get the idea.

Fundamentally the plan is simple. Destroy the USPS via the “death by a thousand cuts” — leaving to slowly twist in the wind those businesses and individuals without the wherewithal to rely on much more expensive commercial carriers.

While President Biden has taken some initial steps regarding the USPS by nominating several new members to the USPS board of governors (who need to be confirmed by the Senate), and this could ultimately lead to the ousting of DeJoy (since only the board can fire him directly), we do not have the time for this process to play out.

Biden has apparently been reluctant to take the “nuclear option” of firing DeJoy’s supporters on the board — they can be fired “for cause” — but many observers assert that their complicity in this DeJoy plan to wreck USPS services would be cause enough.

One thing is for sure. The kinds of changes that DeJoy is pushing through would be expensive and time consuming to unwind later on. And in the meantime, everybody — businesses and ordinary people alike — will suffer greatly at DeJoy’s hands.

President Biden should act immediately to take any and all legal steps to get DeJoy out of the USPS before DeJoy can do even more damage to us all.

–Lauren–

As it stands right now, major news organizations — in league with compliant politicians around the world — seem poised to use the power of their national governments to take actions that could absolutely destroy the essentially open Web, as we’ve known it since Sir Tim Berners-Lee created the first operational web server and client browser at CERN in 1990.

Australia — home of the right-wing Rupert Murdoch empire — is in the lead of pushing this nightmarish travesty, but other countries around the world are lining up to join in swinging wrecking balls at Web users worldwide.

Large Internet firms like Facebook and Google, feeling pressure to protect their income streams more than to protect their users, are taking varying approaches toward this situation, but the end result will likely be the same in any case — users get the shaft.

The underlying problem is that news organizations are now demanding to be paid by firms like Google and Facebook merely for being linked from them. The implications of this should be obvious — it creates the slippery slope where more and more sites of all sorts around the world would demand to be paid for links, with the result that the largest, richest Internet firms would likely be the last ones standing, and competition (along with choices available to users) would wither away.

The current situation is still in considerable flux — seemingly changing almost hour by hour — but the trend lines are clear. Google had originally taken a strong stance against this model, rightly pointing out how it could wreck the entire concept of open linking across the Web, the Web’s very foundation! But at the last minute, it seems that Google lost its backbone, and has been announcing payoff deals to Murdoch and others, which of course will just encourage more such demands. At the moment Facebook has taken the opposite approach, and has literally cut off news from their Australian users. The negative collateral effects that this move has created make it unlikely that this can be a long-term action.

But what we’re really seeing from Facebook and Google (and other large Internet firms who are likely to be joining their ranks in this respect) — despite their differing approaches at the moment — is essentially their floundering around in a kind of desperation. They don’t really want (and/or don’t know how) to address the vast damage that will be done to the overall Web by their actions, beyond their own individual ecosystems. From a profit center standpoint this arguably makes sense, but from the standpoint of ordinary users worldwide it does not.

To use the vernacular, users are being royally screwed, and that screwing has only just begun.

Some observers of how the news organizations and their government sycophants are pushing their demands have called these actions blackmail. There is one universal rule when dealing with blackmailers — no matter how much you pay them, they’ll always come back demanding more. In the case of the news link wars, the end result, if the current path is continued, will be their demands for the entire Web — users be damned.

–Lauren–

Claims of “cancel culture” seem to be everywhere these days. Almost every day, we seem to hear somebody complaining that they have been “canceled” from social media, and pretty much inevitably there is an accompanying claim of politically biased motives for the action.

The term “cancel culture” itself appears to have been pretty much unknown until several years ago, and seems to have morphed from the term “call-out culture” — which ironically is generally concerned with someone getting more publicity than they desire, rather than less.

Be that as it may, cancel culture complaints — the lion’s share of which emanate from the political right wing — are now routinely used to lambaste social media and other Internet firms, to assert that their actions are based on political statements with which the firms do not agree and (according to these accusations) seek to suppress.

However, even a casual inspection of these claims suggests that the actual issues in play are hate speech, violent speech, and dangerous misinformation and disinformation — not political viewpoints, and formal studies reinforce this observation, e.g. False Accusation: The Unfounded Claim that Social Media Companies Censor Conservatives.

Putting aside for now the fact that the First Amendment does not apply to other than government actions against speech, even a cursory examination of the data reveals — confirmed by more rigorous analysis — not only that right-wing entities are overwhelmingly the source of most associated dangerous speech (though they are by no means the only source, there are sources on the left as well), but conservatives overall still have prominent visibility on social media platforms, dramatically calling into question the claims of “free speech” violations overall.

Inexorably intertwined with this are various loud, misguided, and dangerous demands for changes to (and in some cases total repeal of) Communications Decency Act Section 230, the key legislation that makes all forms of Internet UGC — User Generated Content — practical in the first place.

And here we see pretty much equally unsound proposals (largely completely conflicting with each other) from both sides of the political spectrum, often apparently based on political motives and/or a dramatic ignorance of the negative collateral damage that would be done to ordinary users if such proposals were enacted.

The draconian penalties associated with various of these proposals — aimed at Internet firms — would almost inevitably lead not to the actually desired goals of the right or left, but rather to the crushing of ordinary Internet users, by vastly reducing (or even eliminating entirely) the amount of their content on these platforms — that is, videos they create, comments, discussion forums, and everything else users want to share with others.

The practical effect of these proposals would be not to create more free speech or simply reduce hate and violent speech, misinformation and disinformation, but to make it impractical for Internet platforms to support user content — which is vast in scale beyond the imagination of most persons — in anything like the ways it is supported today. The risks would just be too enormous, and methodologies to meet the new demanded standards — even if we assume the future deployment of advanced AI systems and vast new armies of proactive moderators — do not exist and likely could never exist in a practical and affordable manner.

This is truly one of those “be careful what you wish for” moments, like asking the newly-released genie to “fix social media” and with a wave of his hand he eliminates the ability of anyone in the public — prominent or not, on the right or the left — to share their views or other content.

So as we see, complaints about social media are being driven largely by highly political arguments, but in reality invoke enormously complex technical challenges at gigantic scales — many of which we don’t even fundamentally understand given the toxic political culture of today.

As much as nobody would likely argue that Section 230 is perfect, I have yet to see any realistic proposals to change it that would not make matters far worse — especially for ordinary users who largely don’t understand how much they have to lose in these battles.

Section 230 is like democracy itself, which has been referred to as “the worst possible system of governance, except for all the others.” Buying into the big lie of cancel culture and demands to alter Section 230 is wrong for the Internet and would be terrible for its users.

–Lauren–

I increasingly suspect that the days of large-scale public distribution of unmoderated UGC (User Generated Content) on the Internet may shortly begin drawing to a close in significant ways. The most likely path leading to this over time will be a combination of steps taken independently by social media firms and future legislative mandates.

Such moderation at scale may follow the model of AI-based first-level filtering, followed by layers of human moderators. It seems unlikely that today’s scale of postings could continue under such a moderation model, but future technological developments may well turn out to be highly capable in this realm.
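
As a rough illustration of that layered model, here is a minimal Python sketch in which an automated first-level score decides whether a post is published, blocked outright, or queued for a human moderator. Everything in it is an assumption made for illustration: the thresholds, the `triage` function, and especially `toy_score`, a trivial keyword counter standing in for whatever AI classifier a real platform might use.

```python
from collections import deque
from typing import Callable, Iterable


def triage(posts: Iterable[str], score: Callable[[str], float],
           block_at: float = 0.9, review_at: float = 0.5):
    """First-level automated filtering; uncertain posts go to human review."""
    published, blocked, review_queue = [], [], deque()
    for post in posts:
        s = score(post)
        if s >= block_at:
            blocked.append(post)        # confident enough to block automatically
        elif s >= review_at:
            review_queue.append(post)   # uncertain: a human moderator decides
        else:
            published.append(post)      # low risk: published immediately
    return published, blocked, review_queue


def toy_score(post: str) -> float:
    """Hypothetical stand-in for an AI classifier: counts flagged words."""
    flagged = {"scam", "threat"}
    hits = sum(word in flagged for word in post.lower().split())
    return min(1.0, hits / 2)


published, blocked, review_queue = triage(
    ["nice video", "this is a scam", "scam threat"], toy_score
)
print(published)            # ['nice video']
print(list(review_queue))   # ['this is a scam']
print(blocked)              # ['scam threat']
```

The scale problem shows up in the middle tier: the wider the band of uncertain scores, the more posts land in the human review queue, and that queue is exactly what cannot grow without limit.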

Back in 1985 when I launched my “Stargate” experiment to broadcast Usenet Netnews over the broadcast television vertical blanking interval of national “Superstation WTBS,” I decided that the project would only carry moderated Usenet newsgroups. Even more than 35 years ago, I was concerned about some of the behavior and content already beginning to become common on Usenet. My main related concerns back then did not involve hate speech or violent speech — which were not significant problems on the Net at that point — but human nature being what it is I felt that the situation was likely to get much worse rather than better.

What I had largely forgotten in the decades since then though, until I did a Google search on the topic today (a great deal of original or later information on Stargate is still online, including various of my relevant messages in very early mailing list archives that will likely long outlive me), is the level of animosity about that decision that I received at the time. My determination for Stargate to only carry moderated groups triggered cries of “censorship,” but I did not feel that responsible moderation equated with censorship — and that is still my view today.

And now, all these many years later, it’s clear that we’ve made no real progress in these regards. In fact, the associated issues of abuse of unmoderated content in hateful and dangerous ways make the content problems that I was mostly concerned about back then seem like a soap bubble popping, compared with a nuclear bomb detonating now.

We must solve this. We must begin serious and coordinated work in this vein immediately. And my extremely strong preference is that we deal with these issues together as firms, organizations, customers, and users — rather than depend on government actions that, if history is any guide, will likely do enormous negative collateral damage.

Time is of the essence.

–Lauren–

The post below was originally published on 10 August 2019. In light of recent events, particularly the storming of the United States Capitol by a violent mob — resulting in five deaths — and subsequent actions by major social media firms relating to the exiting President Donald Trump (terms of service enforcement actions by these firms that I do endorse under these extraordinary circumstances), I feel that the original post is again especially relevant. While the threats of moves by the Trump administration against CDA Section 230 are now moot, it is clear that 230 will be a central focus of Congress going forward, and it’s crucial that we all understand the risks of tampering with this key legislation that is foundational to the availability of responsible speech and content on the Internet. –Lauren–

– – – – – – – – – –

The Right’s (and Left’s) Insane Internet Content Power Grab

(10 August 2019)

Rumors are circulating widely — and some news sources claim to have seen actual drafts — of a possible Trump administration executive order aimed at giving the government control over content at large social media and other major Internet platforms.

This effort is based on one of the biggest lies of our age — the continuing claims mostly from the conservative right (but also from some elements of the liberal left) that these firms are using politically biased decisions to determine which content is inappropriate for their platforms. That lie is largely based on the false premise that it's impossible for employees of these firms to separate their personal political beliefs from content management decisions.

In fact, there is no evidence of political bias in these decisions at these firms. It is completely appropriate for these firms to remove hate speech and related attacks from their platforms — most of which does come from the right (though not exclusively so). Nazis, KKK, and a whole array of racist, antisemitic, anti-Muslim, misogynistic, and other violent hate groups are disproportionately creatures of the political right wing.

So it is understandable that hate speech and related content takedowns would largely affect the right — because they're the primary source of these postings and associated materials.

At the scales that these firms operate, no decision-making ecosystem can be 100% accurate, and so errors will occur. But that does not change the underlying reality that the "political bias" arguments are false.

The rumored draft Trump executive order would apparently give the FCC and FTC powers to determine if these firms were engaging in "inappropriate censorship" — the primary implied threat appears to be future changes to Section 230 of the Communications Decency Act, which broadly protects these (and other) firms and individuals from liability for materials that other parties post to their sites. In fact, 230 is effectively what makes social media possible in the first place, since without it the liability risks of allowing users to post anything publicly would almost certainly be overwhelming.

But wait, it gets worse!

At the same time that these political forces are making the false claims that content is taken down inappropriately from these sites for political purposes, governments and politicians are also demanding — especially in the wake of recent mass shootings — that these firms immediately take down an array of violent postings and similar content. The reality that (for example) such materials may be posted only minutes before shootings occur, and may be widely re-uploaded by other users in an array of formats after the fact, doesn't faze the politicians and others making these demands, who apparently either don't understand the enormous scale on which these firms operate, or simply don't care about such truths when they get in the way of politicians' political pandering.

The upshot of all this is an insane situation — demands that offending material be taken down almost instantly, but also demands that no material be taken down inappropriately. Even with the best of AI algorithms and a vast human monitoring workforce, these dual demands are in fundamental conflict. Individually, neither is practical. Taken together, they are utterly impossible.

Of course, we know what's actually going on. Many politicians on both the right and left are desperate to micromanage the Net, to control it for their own political and personal purposes. For them, it's not actually about protecting users, it's mostly about protecting themselves.

Here in the U.S., the First Amendment guarantees that any efforts like Trump's will trigger an orgy of court battles. For Trump himself, this probably doesn't matter too much — he likely doesn't really care how these battles turn out, so long as he's managed to score points with his base along the way.

But the broader risks of such strategies attacking the Internet are enormously dangerous, and Republicans who might smile today about such efforts would do well to imagine similar powers in the hands of a future Democratic administration.

Such governmental powers over Internet content are far too dangerous to be permitted to the administrations of any party. They are anathema to the very principles that make the Internet great. They must not be permitted to take root under any circumstances.

–Lauren–

If you head over to our new Substack newsletter, you can read much more about these records, see a longer list, and keep in touch with DiS by subscribing - as from January I'm sending out weekly album recommendations.

There was no poll involved in creating this list. It's just my personal favourites, the ones that cut the deepest.

As the lone voice of the site nowadays, these were the records that were stuck on repeat on our ghost-ship... and I hope you find a new favourite from these (as that's the only point of listing season, right?!)

21) Jehnny Beth - To Love Is To Live

20) Angel Olsen - Whole New Mess

19) Princess Nokia - Everything is Beautiful

18) Nine Inch Nails - Ghosts V: Together & Ghosts VI: Locusts

17) Kate NV - Room for The Moon

16) Polly Scattergood - In This Moment

15) The Big Moon - Walking Like We Do

14) Sarah Davachi - Cantus, Descant

13) Daniel Avery & Alessandro Cortini - Illusion of Time

12) Fiona Apple - Fetch The Bolt Cutters

11) Juanita Stein - Snapshot

10) Moses Sumney - græ

9) Perfume Genius - Set My Heart On Fire Immediately

8) Julianna Barwick - Healing Is A Miracle

7) Mary Lattimore - Silver Ladders

6) Phoebe Bridgers - Punisher

5) Agnes Obel - Myopia

4) I Break Horses - Warnings

3) Laura Marling - Song For Our Daughter

2) Hayley Williams - Petals for Armor

1) G⬛⬛ M⬛⬛⬛⬛⬛⬛ - ⬛⬛⬛ ⬛⬛⬛⬛⬛⬛

Don't call it a comeback... actually, do!

This is Sean Adams, the founder of Drowned in Sound.

Firstly, I'm sorry for the radio silence on this site for the last 18 months or so. I wrote a goodbye message a few times but didn't have the heart to publish it. It felt too final to say farewell.

As you may have seen on our social channels or in the media (even Billboard reported on our demise!), we decided to "pause" publishing due to what you could call financial constraints. Or to put it another way... our advertising revenue went from being an inhabitable house on a hillside to the entire cliff crumbling into the sea, hitting every rock on its way in... the camera slowly zoomed in as the debris was ravaged by the waves... and the director lingered on the shot for far longer than was necessary.

However, just before the end credits started rolling, we shared news that we managed to keep our "infamous" forums going.

I just wanted to offer a MASSIVE heart-felt thank you (THANK YOU!) to everyone who made a donation and continues to help keep the lights on and our community alive. It costs $600 a month due to the continued popularity of this free service, and every £1 you can spare makes a huge difference. Learn more about how you can help with a regular or one-off contribution, here.

Anyway...

What's this newsletter...?

Drowned in Sound turns 20 on October 1st, so to mark the occasion and to keep the flame of the site alive, I'm starting a newsletter. Or rather, going back to DiS' roots, as before the site started, it was my personal newsletter under the guise of The Last Resort, featuring my incoherent teenage ramblings about Muse's first demo and stuff like that.

In this new newsletter, you can expect a mixture of my personal recommendations and hopefully cogent and coherent reflections on the last 20 years of music, alongside some gems from our archive, playlists, and recommended reads around the web. It's also quite likely I'll fail to resist sharing cat photos and existential memes...

Plus I'll likely drop in some bits about the class of 2021 too. I've posted a few reminders of DiS' past over on https://drownedinsound.substack.com, which will soon become easy to find on our homepage.

Without further ado... you can subscribe for free here:

To ensure it stays celebratory and doesn't get too self-indulgent, I've also decided to set up a slightly more personal newsletter in parallel, which ties in with the current Unhappy Hour strand of the monthly DiS radio show mixing together the two best flavours of music: melancholy and mellow. If that sad cocktail sounds of interest, here's the first edition about mittens, mezcal, and Lykke Li.

Sorry! I don't want your emails

That's fair enough. Who needs another email in their inbox?! All of the posts will be available online and promoted on our social channels.

If you're not already, you can follow us/me on Twitter, Facebook, and Instagram.

More news soon...

Bye for now,

Sean xo

DiS founder launches new Unhappy Hour newsletter & playlist series to go with the monthly Drowned in Sound radio show (more info below).

Here's the first edition to give you a taste of what's to come.

Sometimes I like to walk in the rain.

Headphones are a must for me on any walk (sorry, nature!) and from a lot of research, I can confirm that there's nothing more perfect than Lykke Li on a drizzly day. Pick any of her four albums on a day where it's less like tears are falling from heaven and closer to that feeling of walking through a dragon's breath. It. Is. Perfection.

If you're so inclined, you may feel at one with the cloud when 'Sadness is a Blessing' makes its final descent. It's one of those snow globe crescendos that swirls but also feels motionless amid a flood of emotion. Still, like a changing tide.

On 'Sadness... ' Motown rimshots snap beneath the lines "sorrow, the only lover I've ever known..." which hangs in the air, holding out its hand for the follow up "sorrow the only lover I can call my own..." Its mitten strings untangle as Lykke slowly pirouettes into "sadness is my boyfriend, oh sadness I'm your girl." It's such a killer line. I'd assume I'll one day meet someone who has it as a tattoo - if I haven't already!

If you're reading this and have never heard this song or haven't let its fug sprawl around you for a while, I don't mind if you feel compelled to run off and listen to it right away.

I've also made it track one on the playlist that will accompany these Unhappy Hour missives: subscribe here.

Still with me? I won't go on for much longer, promise... Let's get back to walking in a misty wood with sad piano laments overpowering the mulch underfoot... Whilst Lykke may have tried to fool us by opening her most recent album with an all-lowercase string-nest entitled 'hard rain', there's just something about her music that feels more omnidirectional than that. Hard and heavy and oppressive her music is not. It's so much more pervasive and powerful than that.

Perhaps it's the way her voice plumes around the microphone. Her gasps often left in the final edits. Gusts of humanity. Sighs in various stages of ecstasy and exasperation. It's perhaps in the textures created in the space between her exhalations and the microphone where Lykke Li's magic illuminates. It's that filled void that a listener shares in headphones and it feels intensely intimate, even though there's a distant cool gloom to everything Lykke does.

Many say that photography is the art of capturing light. Sound is the vibration of air, which makes the best producers electrocardiographers, capturing the pulse of someone's heart and soul. I say this because there's something about the way Lykke makes the air move that hits so different to almost anyone else. Listening to her voice live or on almost anyone's track, whether it's her recent sad banger hit with Mark Ronson or a resurrected tune with Royksopp, there's an unmistakable pause, like the air skids to a stop... rubber and smoke sprawls in super slow motion. Shards of glass erupt and glitter as they spray...

I'm aware a male rock critic describing a woman's voice at any greater length would be creepy (if it isn't a bit already). However, the medium is often the message but not always because sometimes the message is "I lay in silence, the silence talks... my heart keeps pulling in the wrong decision." Imagine if you not only wrote that line but it became a mainstream hit. On Spotify 'Late Night Feelings' has had 67 million plays - yes, sixty-seven MILLION! And only a third of them were me...

It's maybe not even her best lyric. Not that it's a competition but from the crystallisation of reluctance "when everybody's dancing, I don't want to..." to "sex, money, feelings, die, baby don't you cry..." there are too many contenders for the shimmering crown of melancholic bliss. It's little wonder she's gone from the top of the Hype Machine back in the mid-noughties to working with David Lynch and forcing time to stand still during Twilight (soundtracks which REALLY deserve a serious reappraisal at some point for the cultural impact they've had). It's for all these reasons and more she's christening this newsletter for lovers of wonderfully miserable music.

The irony of all of this of course is that the meaning of Lykke's name in Swedish is "happiness, good fortune" and yet it's from moments of unhappiness that she's made enough to fund her own mezcal business (it's called Yola Mezcal and I can 1000% confirm it's one of the best brands) and carry on making it drizzle in our hearts for decades to come.

Until next time...

Keeeeeep cryyyyiiiing!!

Sean xo

P.S. Yes, I have clocked that, for someone who as a teenager named a website "Drowned in Sound", all this drizzle and rain talk is another watery metaphor too far. What can I say? "Lonely rivers sigh..."

Here's the link to the Lykke Li: An Unhappy Hour Spotify playlist, which is hopefully well worth one hour and 13 minutes of your life at some point. Maybe on an autumnal walk.

Do let me know what you think of the tracks and this newsletter or the Unhappy Hour radio show via Twitter @seaninsound or Insta.

Further Reading

One of Lykke Li's first interviews was Drowned in Sound's DiScover feature back in 2008. Loved this bit "sometimes I'm so fragile and weak, but other times not at all. It's almost as if I have this much stronger spirit inside that I can't imagine ever failing me - 'cause if it did, I can't even begin to imagine how I'd live. So I'd say it's more about my own different personalities and that struggle." Read the full piece here.

VOGUE on How Yola Jimenez Is Making Mezcal With Women's Empowerment in Mind.

NME's Andrew Trendell interviewed Lykke Li on how heartbreak, hip hop and lots of mezcal helped 'So Sad So Sexy' come to life.

CONSEQUENCE OF SOUND spoke to Lykke Li about what "Lynchian" means.

The Unhappy Hour: Radio Show

You can stream recent editions of the show on Mixcloud for free. Tune in to hear 2 hours of mellow and miserable music every 4 weeks, hosted by me, Sean Adams, the founder of the Drowned in Sound website.

CSS is about styling boxes. In fact, the whole web is made of boxes, from the browser viewport to elements on a page. But every once in a while a new feature comes along that makes us rethink our design approach.

Round displays, for example, make it fun to play with circular clip areas. Mobile screen notches and virtual keyboards offer challenges to best organize content that stays clear of them. And dual screen or foldable devices make us rethink how to best use available space in a number of different device postures.

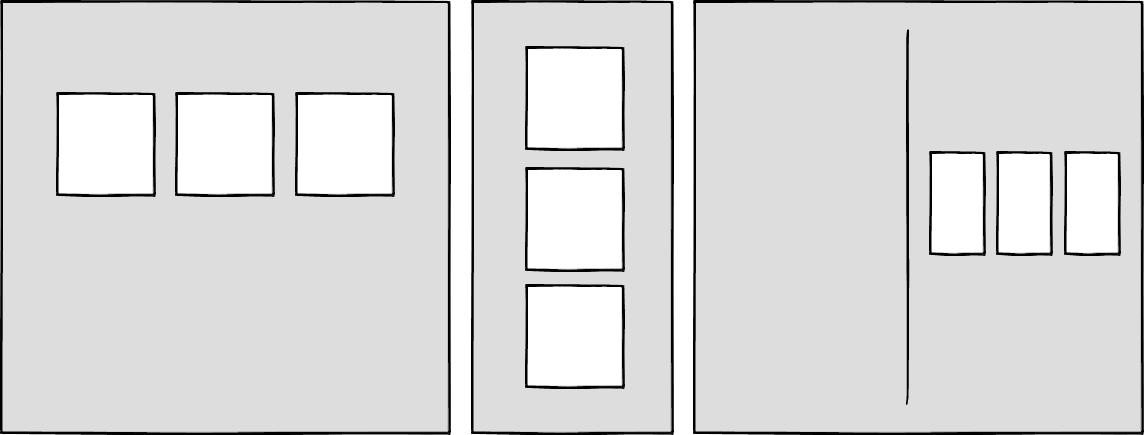

Sketches of a round display, a common rectangular mobile display, and a device with a foldable display.

These recent evolutions of the web platform made it both more challenging and more interesting to design products. They're great opportunities for us to break out of our rectangular boxes.

I'd like to talk about a new feature similar to the above: the Window Controls Overlay for Progressive Web Apps (PWAs).

Progressive Web Apps are blurring the lines between apps and websites. They combine the best of both worlds. On one hand, they're stable, linkable, searchable, and responsive just like websites. On the other hand, they provide additional powerful capabilities, work offline, and read files just like native apps.

As a design surface, PWAs are really interesting because they challenge us to think about what mixing web and device-native user interfaces can be. On desktop devices in particular, we have more than 40 years of history telling us what applications should look like, and it can be hard to break out of this mental model.

At the end of the day though, PWAs on desktop are constrained to the window they appear in: a rectangle with a title bar at the top.

Here's what a typical desktop PWA looks like:

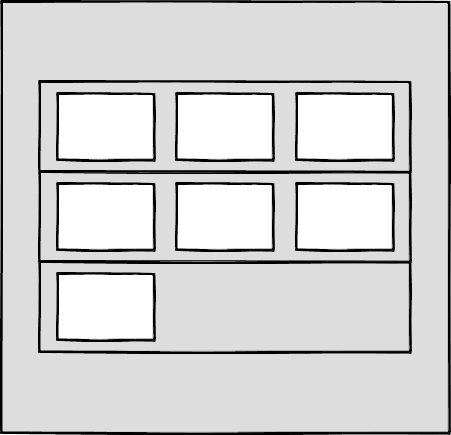

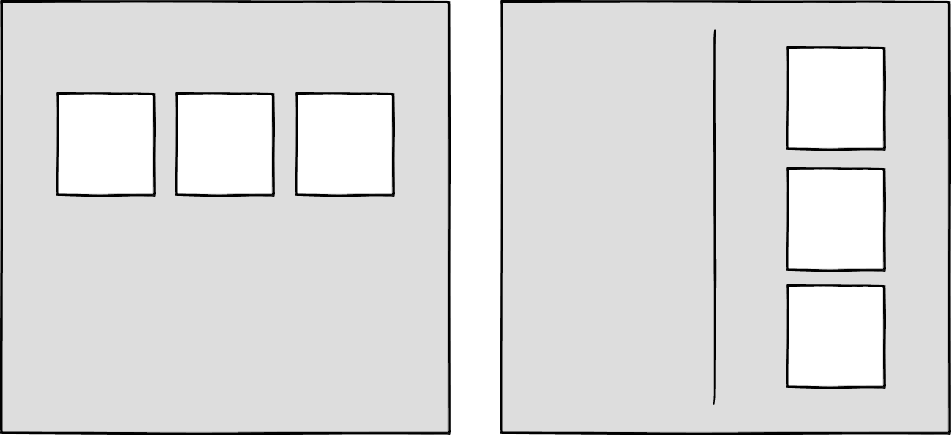

Sketches of two rectangular user interfaces representing the desktop Progressive Web App status quo on the macOS and Windows operating systems, respectively.

Sure, as the author of a PWA, you get to choose the color of the title bar (using the Web Application Manifest theme_color property), but that's about it.

What if we could think outside this box, and reclaim the real estate of the app's entire window? Doing so would give us a chance to make our apps more beautiful and feel more integrated in the operating system.

This is exactly what the Window Controls Overlay offers. This new PWA functionality makes it possible to take advantage of the full surface area of the app, including where the title bar normally appears.

About the title bar and window controls

Let's start with an explanation of what the title bar and window controls are.

The title bar is the area displayed at the top of an app window, which usually contains the app's name. Window controls are the affordances, or buttons, that make it possible to minimize, maximize, or close the app's window, and are also displayed at the top.

Window Controls Overlay removes the physical constraint of the title bar and window controls areas. It frees up the full height of the app window, enabling the title bar and window control buttons to be overlaid on top of the application's web content.

If you are reading this article on a desktop computer, take a quick look at other apps. Chances are they're already doing something similar to this. In fact, the very web browser you are using to read this uses the top area to display tabs.

Spotify displays album artwork all the way to the top edge of the application window.

Microsoft Word uses the available title bar space to display the auto-save and search functionalities, and more.

The whole point of this feature is to allow you to make use of this space with your own content while providing a way to account for the window control buttons. And it enables you to offer this modified experience on a range of platforms while not adversely affecting the experience on browsers or devices that don't support Window Controls Overlay. After all, PWAs are all about progressive enhancement, so this feature is a chance to enhance your app to use this extra space when it's available.

Let's use the feature

For the rest of this article, we'll be working on a demo app to learn more about using the feature.

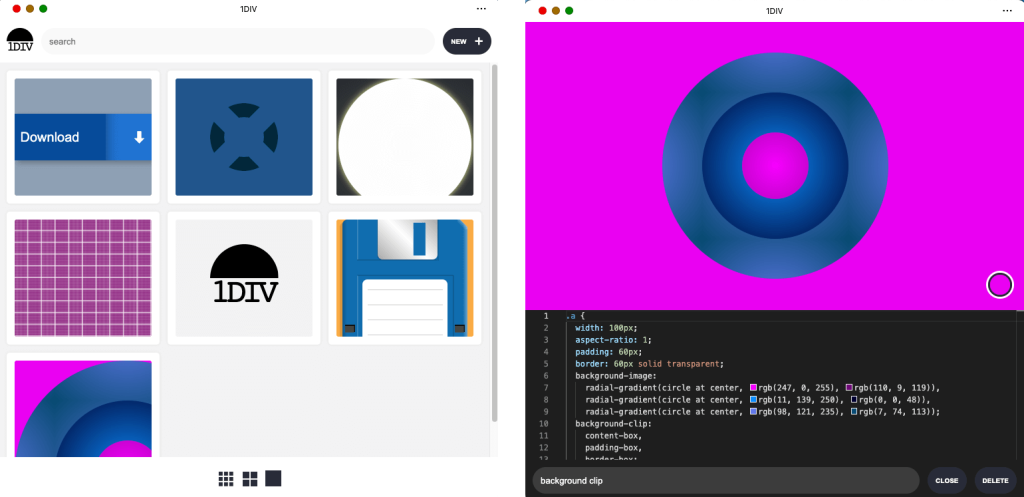

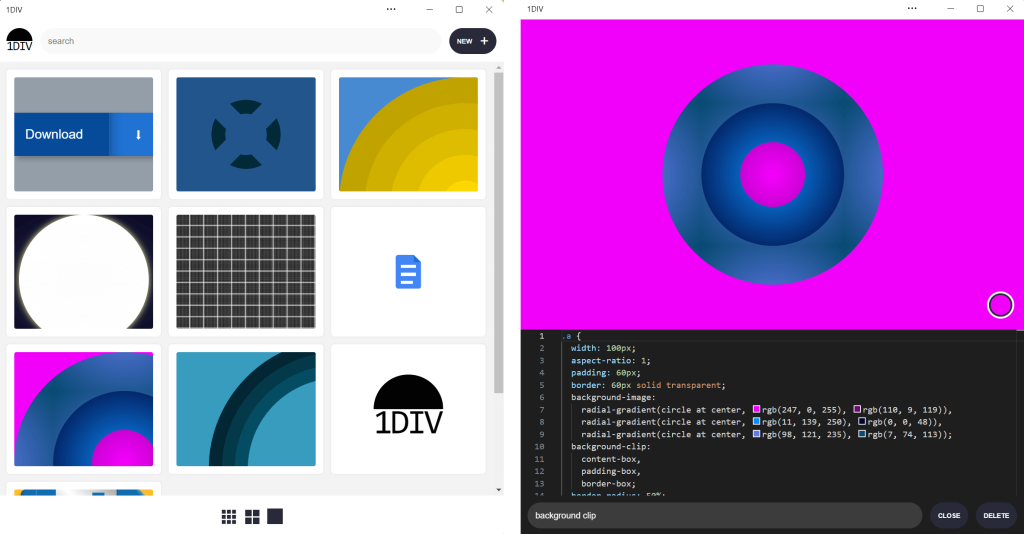

The demo app is called 1DIV. It's a simple CSS playground where users can create designs using CSS and a single HTML element.

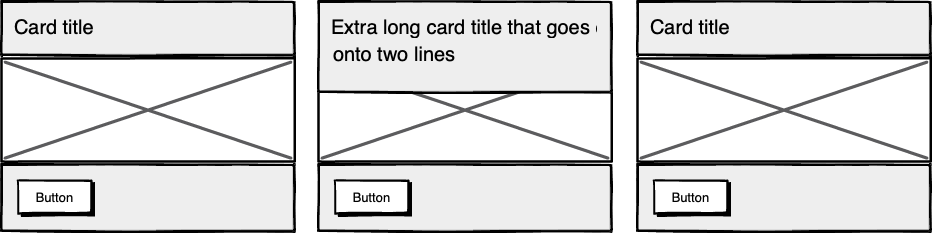

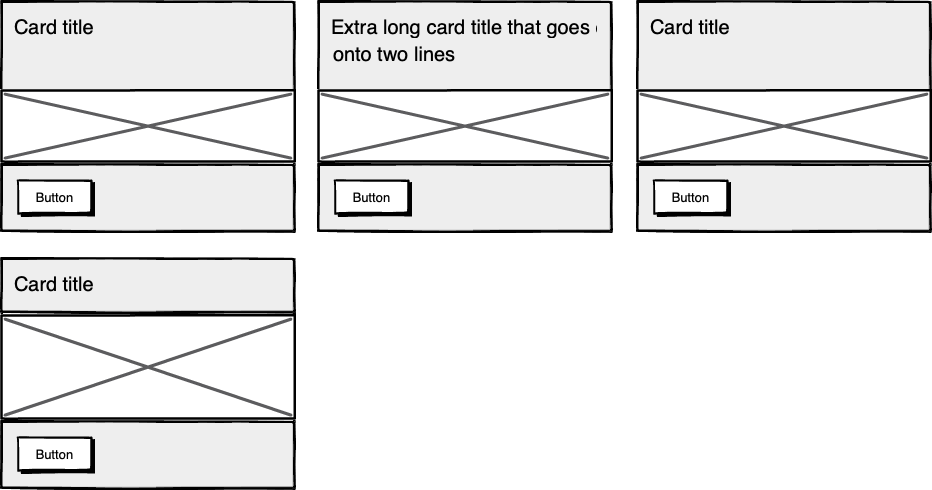

The app has two pages. The first lists the existing CSS designs you've created:

The second page enables you to create and edit CSS designs:

Since I've added a simple web manifest and service worker, we can install the app as a PWA on desktop. Here is what it looks like on macOS:

Screenshots of the 1DIV app thumbnail view and CSS editor view on macOS. This version of the app's window has a separate control bar at the top for the app name and window control buttons.

And on Windows:

Screenshots of the 1DIV app thumbnail view and CSS editor view on the Windows operating system. This version of the app's window also has a separate control bar at the top for the app name and window control buttons.

Our app is looking good, but the white title bar in the first page is wasted space. In the second page, it would be really nice if the design area went all the way to the top of the app window.

Let's use the Window Controls Overlay feature to improve this.

Enabling Window Controls Overlay

The feature is still experimental at the moment. To try it, you need to enable it in one of the supported browsers.

As of now, it has been implemented in Chromium, as a collaboration between Microsoft and Google. We can therefore use it in Chrome or Edge by going to the internal about://flags page, and enabling the Desktop PWA Window Controls Overlay flag.

Using Window Controls Overlay

To use the feature, we need to add the following display_override member to our web app's manifest file:

    {
      "name": "1DIV",
      "description": "1DIV is a mini CSS playground",
      "lang": "en-US",
      "start_url": "/",
      "theme_color": "#ffffff",
      "background_color": "#ffffff",
      "display_override": [
        "window-controls-overlay"
      ],
      "icons": [
        ...
      ]
    }

On the surface, the feature is really simple to use. This manifest change is the only thing we need to make the title bar disappear and turn the window controls into an overlay.
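To see why adding this member is low-risk, here is a minimal sketch of how a browser might pick a display mode from the manifest: it tries each display_override entry in order and falls back to the display member if none is supported. This is an illustration only, not Chromium's actual implementation. The resolveDisplayMode function and the supportedModes list are hypothetical names, and the display member used in the example is not part of our manifest above (I'm assuming a missing display member falls back to browser).

```javascript
// Hypothetical helper: guesses which display mode a browser would end up using.
// `manifest` is a parsed web app manifest, `supportedModes` is an assumed list
// of the display modes the current browser understands.
function resolveDisplayMode(manifest, supportedModes) {
  const overrides = Array.isArray(manifest.display_override)
    ? manifest.display_override
    : [];

  // Entries in display_override are tried in order; the first supported one wins.
  for (const mode of overrides) {
    if (supportedModes.includes(mode)) {
      return mode;
    }
  }

  // Otherwise fall back to the regular display member (assumed default: "browser").
  const display = manifest.display || "browser";
  return supportedModes.includes(display) ? display : "browser";
}

// Example manifest with an assumed "display" fallback.
const exampleManifest = {
  display_override: ["window-controls-overlay"],
  display: "standalone",
};

console.log(resolveDisplayMode(exampleManifest, ["window-controls-overlay", "standalone", "browser"]));
// → window-controls-overlay
console.log(resolveDisplayMode(exampleManifest, ["standalone", "browser"]));
// → standalone
```

In other words, a browser that doesn't know about window-controls-overlay should simply ignore that entry and keep showing the app the way it did before.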

However, to provide a great experience for all users regardless of what device or browser they use, and to make the most of the title bar area in our design, we'll need a bit of CSS and JavaScript code.

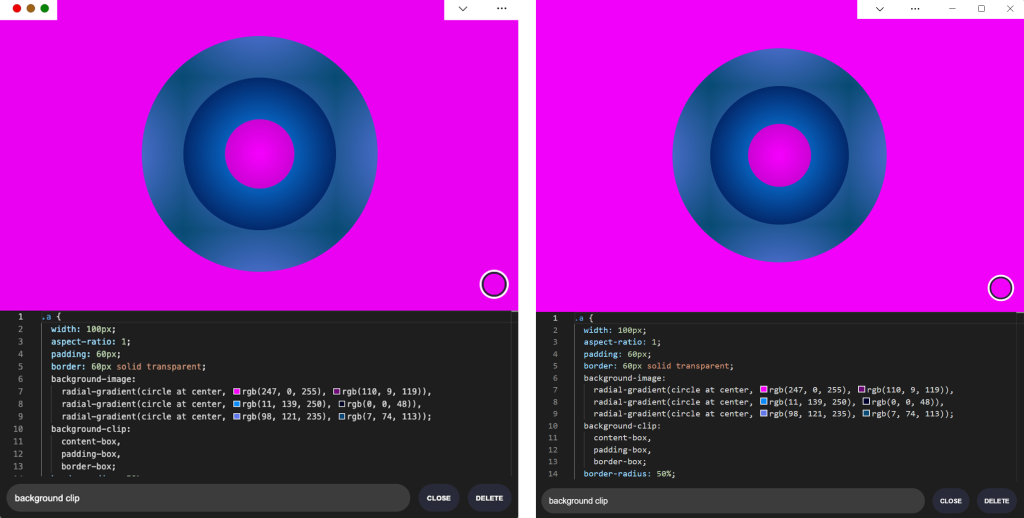

Here is what the app looks like now:

The title bar is gone, which is what we wanted, but our logo, search field, and NEW button are partially covered by the window controls because now our layout starts at the top of the window.

It's similar on Windows, with the difference that the close, maximize, and minimize buttons appear on the right side, grouped together with the PWA control buttons:

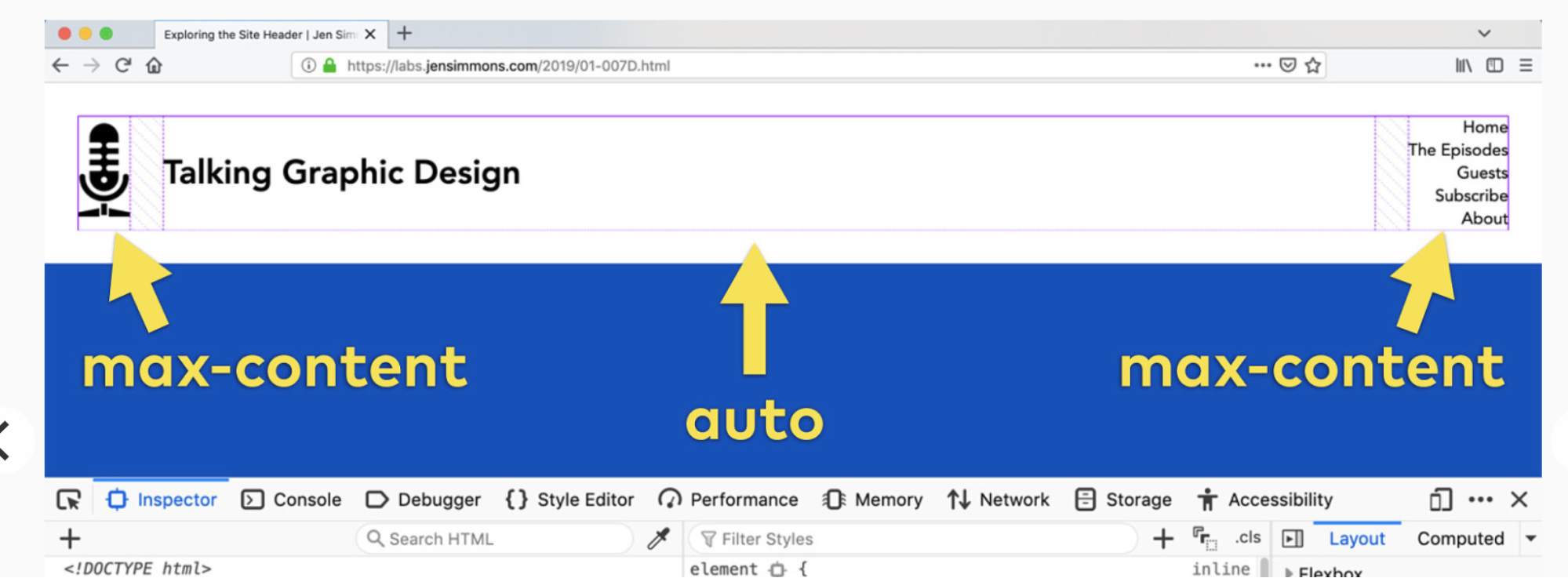

Along with the feature, new CSS environment variables have been introduced:

- titlebar-area-x

- titlebar-area-y

- titlebar-area-width

- titlebar-area-height

You use these variables with the CSS env() function to position your content where the title bar would have been while ensuring it won't overlap with the window controls. In our case, we'll use two of the variables to position our header, which contains the logo, search bar, and NEW button.

    header {
      position: absolute;
      left: env(titlebar-area-x, 0);
      width: env(titlebar-area-width, 100%);
      height: var(--toolbar-height);
    }

The titlebar-area-x variable gives us the distance from the left of the viewport to where the title bar would appear, and titlebar-area-width is its width. (Remember, this is not equivalent to the width of the entire viewport, just the title bar portion, which as noted earlier, doesn't include the window controls.)

By doing this, we make sure our content remains fully visible. We're also defining fallback values (the second parameter in the env() function) for when the variables are not defined (such as on non-supporting browsers, or when the Window Controls Overlay feature is disabled).

Now our header adapts to its surroundings, and it doesn't feel like the window control buttons have been added as an afterthought. The app looks a lot more like a native app.

Changing the window controls background color so it blends in

Now let's take a closer look at our second page: the CSS playground editor.

Screenshots of the 1DIV app CSS editor view with Window Controls Overlay in macOS and Windows, respectively. The window controls overlay areas have a solid white background color, which contrasts with the hot pink color of the example CSS design displayed in the editor.

Not great. Our CSS demo area does go all the way to the top, which is what we wanted, but the way the window controls appear as white rectangles on top of it is quite jarring.

We can fix this by changing the app's theme color. There are a couple of ways to define it:

- PWAs can define a theme color in the web app manifest file using the theme_color manifest member. This color is then used by the OS in different ways. On desktop platforms, it is used to provide a background color to the title bar and window controls.

- Websites can use the theme-color meta tag as well. It's used by browsers to customize the color of the UI around the web page. For PWAs, this color can override the manifest theme_color.

In our case, we can set the manifest theme_color to white to provide the right default color for our app. The OS will read this color value when the app is installed and use it to make the window controls background color white. This color works great for our main page with the list of demos.

The theme-color meta tag can be changed at runtime, using JavaScript. So we can do that to override the white with the right demo background color when one is opened.

Here is the function we'll use:

    function themeWindow(bgColor) {
      document.querySelector("meta[name=theme-color]").setAttribute('content', bgColor);
    }

With this in place, we can imagine how using color and CSS transitions can produce a smooth change from the list page to the demo page, and enable the window control buttons to blend in with the rest of the app's interface.
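One caveat: themeWindow assumes the page already contains a theme-color meta tag, and it will throw if the tag is missing. Here is a slightly more defensive sketch that creates the tag when needed. The setThemeColor name and its doc parameter are my own additions for illustration (doc defaults to the global document and mainly exists to make the function easy to try outside of a browser).

```javascript
// Hypothetical, more defensive variant of themeWindow().
// `doc` defaults to the page's document; passing it in is an assumption
// made here so the function can be exercised with a stand-in object.
function setThemeColor(color, doc = document) {
  let meta = doc.querySelector('meta[name="theme-color"]');

  // Create the meta tag if the page doesn't have one yet.
  if (!meta) {
    meta = doc.createElement('meta');
    meta.setAttribute('name', 'theme-color');
    doc.head.appendChild(meta);
  }

  // Updating the content attribute is what changes the color at runtime.
  meta.setAttribute('content', color);
  return meta;
}

// In the app, this could be called when a demo is opened, for example:
//   setThemeColor('hotpink');
// and again with the default color when going back to the list page:
//   setThemeColor('#ffffff');
```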

Now, getting rid of the title bar entirely does have an important accessibility consequence: it's much more difficult to move the application window around.

The title bar provides a sizable area for users to click and drag, but by using the Window Controls Overlay feature, this area becomes limited to where the control buttons are, and users have to very precisely aim between these buttons to move the window.

Fortunately, this can be fixed using CSS with the app-region property. This property is, for now, only supported in Chromium-based browsers and needs the -webkit- vendor prefix.

To make any element of the app become a dragging target for the window, we can use the following:

-webkit-app-region: drag;

It is also possible to explicitly make an element non-draggable:

-webkit-app-region: no-drag;

These options can be useful for us. We can make the entire header a dragging target, but make the search field and NEW button within it non-draggable so they can still be used as normal.

However, because the editor page doesn't display the header, users wouldn't be able to drag the window while editing code. So let's use a different approach. We'll create another element before our header, also absolutely positioned, and dedicated to dragging the window.

    <div class="drag"></div>
    <header>...</header>

    .drag {
      position: absolute;
      top: 0;
      width: 100%;
      height: env(titlebar-area-height, 0);
      -webkit-app-region: drag;
    }

With the above code, we're making the draggable area span the entire viewport width, and using the titlebar-area-height variable to make it as tall as what the title bar would have been. This way, our draggable area is aligned with the window control buttons as shown below.

And, now, to make sure our search field and button remain usable:

    header .search,
    header .new {
      -webkit-app-region: no-drag;
    }

With the above code, users can click and drag where the title bar used to be. It is an area that users expect to be able to use to move windows on desktop, and we're not breaking this expectation, which is good.

It may be useful for an app to know both whether the window controls overlay is visible and when its size changes. In our case, if the user made the window very narrow, there wouldn't be enough space for the search field, logo, and button to fit, so we'd want to push them down a bit.

The Window Controls Overlay feature comes with a JavaScript API we can use to do this: navigator.windowControlsOverlay.

The API provides three interesting things:

- navigator.windowControlsOverlay.visible lets us know whether the overlay is visible.

- navigator.windowControlsOverlay.getBoundingClientRect() lets us know the position and size of the title bar area.

- navigator.windowControlsOverlay.ongeometrychange lets us know when the size or visibility changes.

Let's use this to be aware of the size of the title bar area and move the header down if it's too narrow.

    if (navigator.windowControlsOverlay) {
      navigator.windowControlsOverlay.addEventListener('geometrychange', () => {
        const { width } = navigator.windowControlsOverlay.getBoundingClientRect();
        document.body.classList.toggle('narrow', width < 250);
      });
    }

In the example above, we set the narrow class on the body of the app if the title bar area is narrower than 250px. We could do something similar with a media query, but using the windowControlsOverlay API has two advantages for our use case:

- The geometrychange event is only fired when the feature is supported and used; we don't want to adapt the design otherwise.

- We get the size of the title bar area across operating systems, which is great because the size of the window controls is different on Mac and Windows. Using a media query wouldn't make it possible for us to know exactly how much space remains.

    .narrow header {
      top: env(titlebar-area-height, 0);
      left: 0;
      width: 100%;
    }

Using the above CSS code, we can move our header down to stay clear of the window control buttons when the window is too narrow, and move the thumbnails down accordingly.
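One thing the event listener alone doesn't cover is the initial state: if the app is opened in an already narrow window, no geometrychange event may have fired yet. A possible way to handle this, sketched below, is to run the same check once at startup. The watchTitleBarWidth name and its parameters are hypothetical, and I'm assuming we only want the narrow layout while the overlay is actually visible.

```javascript
// Hypothetical helper: keeps a "narrow" class in sync with the title bar area width.
// `overlay` is expected to be navigator.windowControlsOverlay (or undefined when
// the feature isn't supported), `body` is the element that receives the class.
function watchTitleBarWidth(overlay, body, minWidth = 250) {
  // Nothing to do in browsers that don't support the feature.
  if (!overlay) {
    return false;
  }

  const update = () => {
    const { width } = overlay.getBoundingClientRect();
    // Assumption: only use the narrow layout while the overlay is visible.
    body.classList.toggle('narrow', overlay.visible && width < minWidth);
  };

  // React to later size or visibility changes...
  overlay.addEventListener('geometrychange', update);
  // ...and also apply the right state immediately.
  update();
  return true;
}

// In the app:
//   watchTitleBarWidth(navigator.windowControlsOverlay, document.body);
```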

Using the Window Controls Overlay feature, we were able to take our simple demo app and turn it into something that feels so much more integrated on desktop devices. Something that reaches out of the usual window constraints and provides a custom experience for its users.

In reality, this feature only gives us about 30 pixels of extra room and comes with challenges on how to deal with the window controls. And yet, this extra room and those challenges can be turned into exciting design opportunities.

More devices of all shapes and forms get invented all the time, and the web keeps on evolving to adapt to them. New features get added to the web platform to allow us, web authors, to integrate more and more deeply with those devices. From watches or foldable devices to desktop computers, we need to evolve our design approach for the web. Building for the web now lets us think outside the rectangular box.

So let's embrace this. Let's use the standard technologies already at our disposal, and experiment with new ideas to provide tailored experiences for all devices, all from a single codebase!

If you get a chance to try the Window Controls Overlay feature and have feedback about it, you can open issues on the spec's repository. It's still early in the development of this feature, and you can help make it even better. Or, you can take a look at the feature's existing documentation, or this demo app and its source code.

Do you find yourself designing screens with only a vague idea of how the things on the screen relate to the things elsewhere in the system? Do you leave stakeholder meetings with unclear directives that often seem to contradict previous conversations? You know a better understanding of user needs would help the team get clear on what you are actually trying to accomplish, but time and budget for research is tight. When it comes to asking for more direct contact with your users, you might feel like poor Oliver Twist, timidly asking, "Please, sir, I want some more."

Here's the trick. You need to get stakeholders themselves to identify high-risk assumptions and hidden complexity, so that they become just as motivated as you to get answers from users. Basically, you need to make them think it's their idea.

In this article, I'll show you how to collaboratively expose misalignment and gaps in the team's shared understanding by bringing the team together around two simple questions:

- What are the objects?

- What are the relationships between those objects?

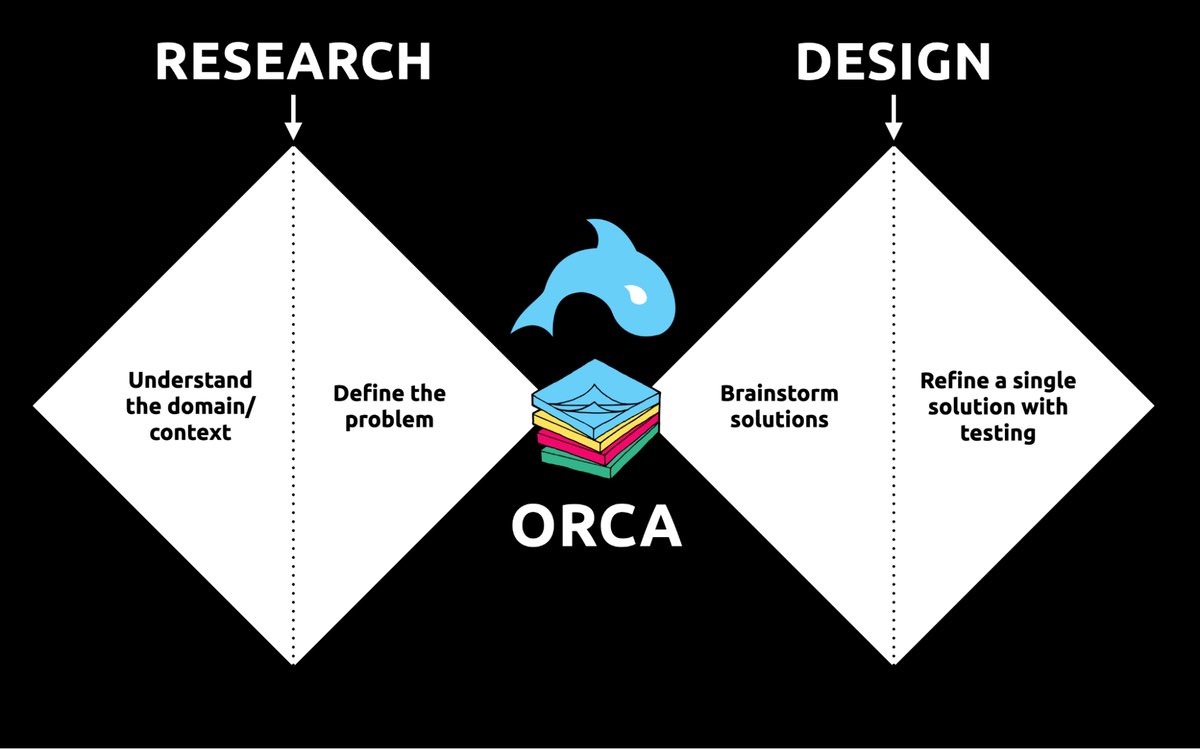

These two questions align to the first two steps of the ORCA process, which might become your new best friend when it comes to reducing guesswork. Wait, what's ORCA?! Glad you asked.

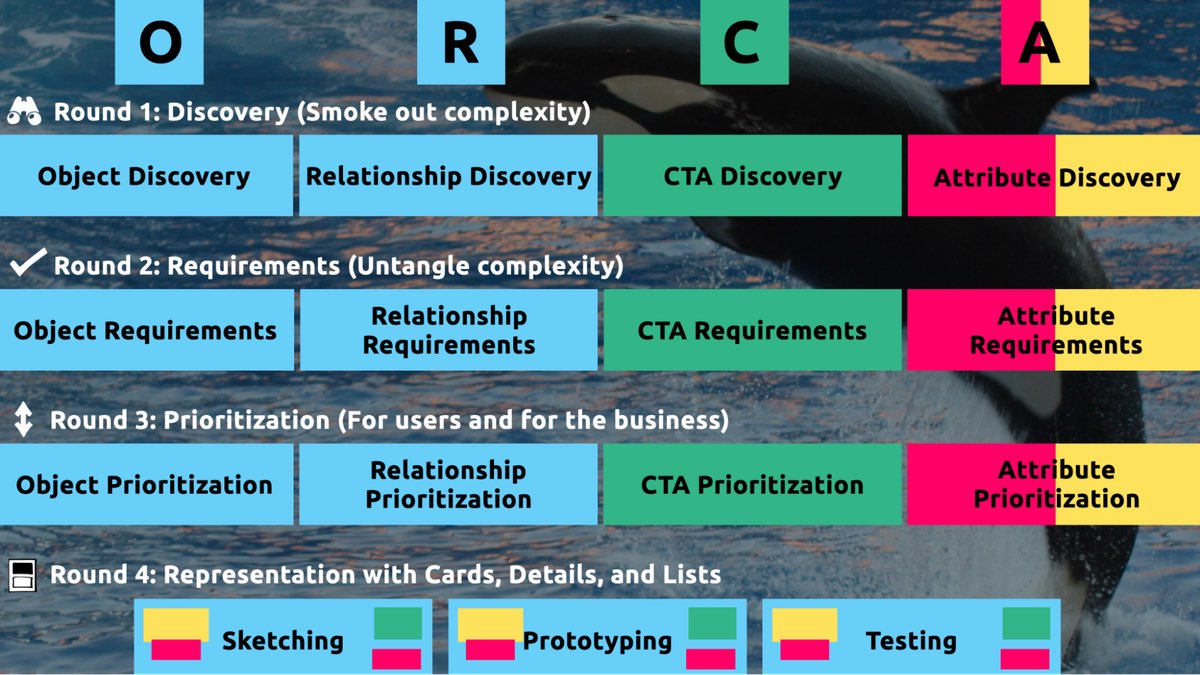

ORCA stands for Objects, Relationships, CTAs, and Attributes, and it outlines a process for creating solid object-oriented user experiences. Object-oriented UX is my design philosophy. ORCA is an iterative methodology for synthesizing user research into an elegant structural foundation to support screen and interaction design. OOUX and ORCA have made my work as a UX designer more collaborative, effective, efficient, fun, strategic, and meaningful.

The ORCA process has four iterative rounds and a whopping fifteen steps. In each round we get more clarity on our Os, Rs, Cs, and As.

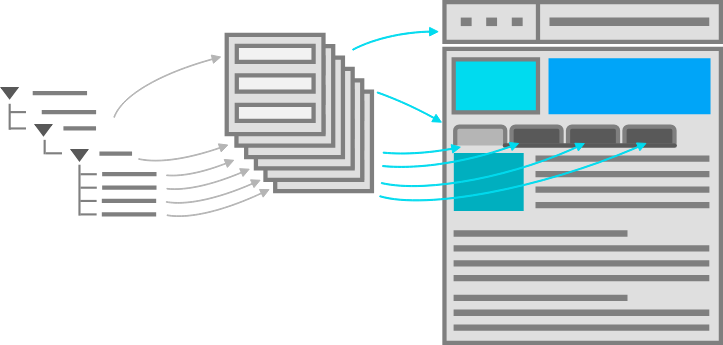

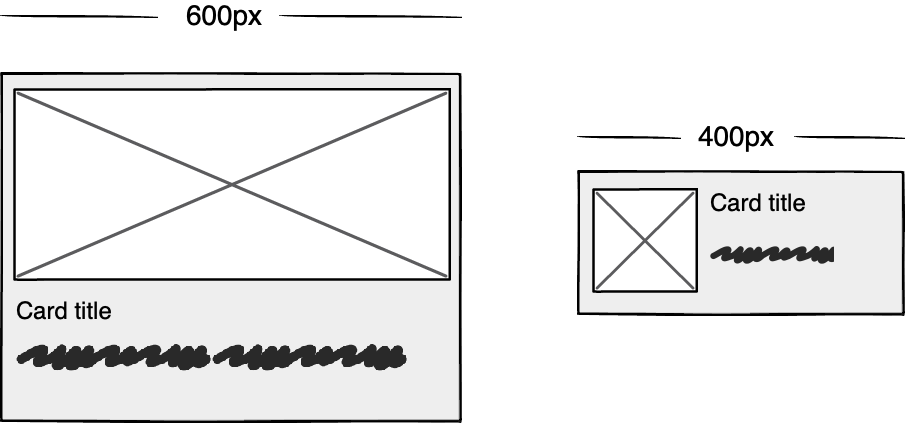

The four rounds and fifteen steps of the ORCA process. In the OOUX world, we love color-coding. Blue is reserved for objects! (Yellow is for core content, pink is for metadata, and green is for calls-to-action. Learn more about the color-coded object map and connecting CTAs to objects.)

I sometimes say that ORCA is a "garbage in, garbage out" process. To ensure that the testable prototype produced in the final round actually tests well, the process needs to be fed by good research. But if you don't have a ton of research, the beginning of the ORCA process serves another purpose: it helps you sell the need for research.

ORCA strengthens the weak spot between research and design by helping distill research into solid information architecture—scaffolding for the screen design and interaction design to hang on.

In other words, the ORCA process serves as a gauntlet between research and design. With good research, you can gracefully ride the killer whale from research into design. But without good research, the process effectively spits you back into research with a cache of specific open questions.

Getting in the same curiosity-boat

What gets us into trouble is not what we don't know. It's what we know for sure that just ain't so.

Mark Twain

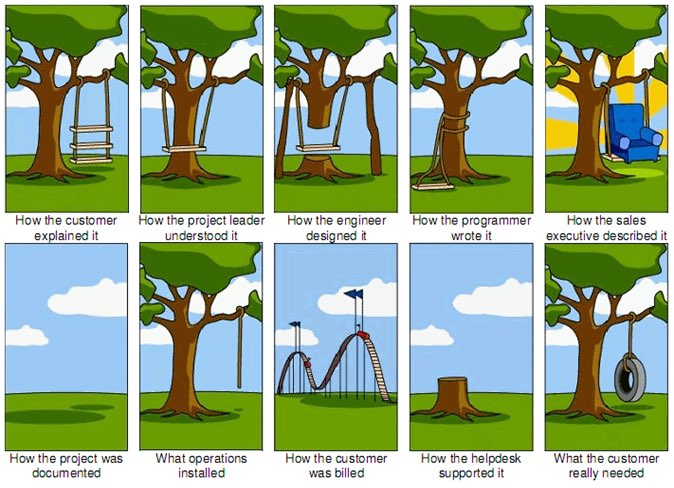

The first two steps of the ORCA process—Object Discovery and Relationship Discovery—shine a spotlight on the dark, dusty corners of your team's misalignments and any inherent complexity that's been swept under the rug. It begins to expose what this classic comic so beautifully illustrates:

The original "Tree Swing Project Management" cartoon dates back to the 1960s or 1970s and has no artist attribution we could find.

This is one reason why so many UX designers are frustrated in their job and why many projects fail. And this is also why we often can't sell research: every decision-maker is confident in their own mental picture.

Once we expose hidden fuzzy patches in each picture and the differences between them all, the case for user research makes itself.

But how we do this is important. However much we might want to, we can't just tell everyone, "YOU ARE WRONG!" Instead, we need to facilitate and guide our team members to self-identify holes in their picture. When stakeholders take ownership of assumptions and gaps in understanding, BAM! Suddenly, UX research is not such a hard sell, and everyone is aboard the same curiosity-boat.

Say your users are doctors. And you have no idea how doctors use the system you are tasked with redesigning.

You might try to sell research by honestly saying: "We need to understand doctors better! What are their pain points? How do they use the current app?" But here's the problem with that. Those questions are vague, and the answers to them don't feel acutely actionable.

Instead, you want your stakeholders themselves to ask super-specific questions. This is more like the kind of conversation you need to facilitate. Let's listen in:

"Wait a sec, how often do doctors share patients? Does a patient in this system have primary and secondary doctors?"

"Can a patient even have more than one primary doctor?"

"Is it a 'primary doctor' or just a 'primary caregiver'… Can't that role be a nurse practitioner?"

"No, caregivers are something else… That's the patient's family contacts, right?"

"So are caregivers in scope for this redesign?"

"Yeah, because if a caregiver is present at an appointment, the doctor needs to note that. Like, tag the caregiver on the note… Or on the appointment?"

Now we are getting somewhere. Do you see how powerful it can be getting stakeholders to debate these questions themselves? The diabolical goal here is to shake their confidence—gently and diplomatically.

When these kinds of questions bubble up collaboratively and come directly from the mouths of your stakeholders and decision-makers, suddenly, designing screens without knowing the answers to these questions seems incredibly risky, even silly.

If we create software without understanding the real-world information environment of our users, we will likely create software that does not align to the real-world information environment of our users. And this will, hands down, result in a more confusing, more complex, and less intuitive software product.

The two questions

But how do we get to these kinds of meaty questions diplomatically, efficiently, collaboratively, and reliably?

We can do this by starting with those two big questions that align to the first two steps of the ORCA process:

- What are the objects?

- What are the relationships between those objects?

In practice, getting to these answers is easier said than done. I'm going to show you how these two simple questions can provide the outline for an Object Definition Workshop. During this workshop, these "seed" questions will blossom into dozens of specific questions and shine a spotlight on the need for more user research.
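To get a feel for how quickly the two seed questions multiply, here is a minimal Python sketch. It assumes a hypothetical list of objects pulled from the doctor conversation above and a hypothetical question template; neither is part of the ORCA process itself. It simply pairs every object with every other object and asks a relationship question about each pair.

```python
from itertools import permutations

# Hypothetical objects, taken from the doctor conversation for illustration only.
objects = ["doctor", "patient", "caregiver", "appointment", "note"]


def relationship_questions(objects):
    """Turn every ordered pair of objects into one relationship question."""
    return [
        f"Can one {a} be linked to more than one {b}?"
        for a, b in permutations(objects, 2)
    ]


questions = relationship_questions(objects)
print(len(questions))  # 5 objects give 5 * 4 = 20 ordered pairs
print(questions[0])
```

Five objects already yield twenty questions. Most will be easy to answer, but the handful that nobody can answer are the ones worth taking into the workshop.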

Prep work: Noun foraging

In the next section, I'll show you how to run an Object Definition Workshop with your stakeholders (and entire cross-functional team, hopefully). But first, you need to do some prep work.

Basically, look for nouns that are particular to the business or industry of your project, and do it across at least a few sources. I call this noun foraging.

Here are just a few great noun foraging sources:

- the product's marketing site

- the product's competitors' marketing sites (competitive analysis, anyone?)

- the existing product (look at labels!)

- user interview transcripts

- notes from stakeholder interviews or vision docs from stakeholders

Put your detective hat on, my dear Watson. Get resourceful and leverage what you have. If all you have is a marketing website, some screenshots of the existing legacy system, and access to customer service chat logs, then use those.

As you peruse these sources, watch for the nouns that are used over and over again, and start listing them (preferably on blue sticky notes if you'll be creating an object map later!).

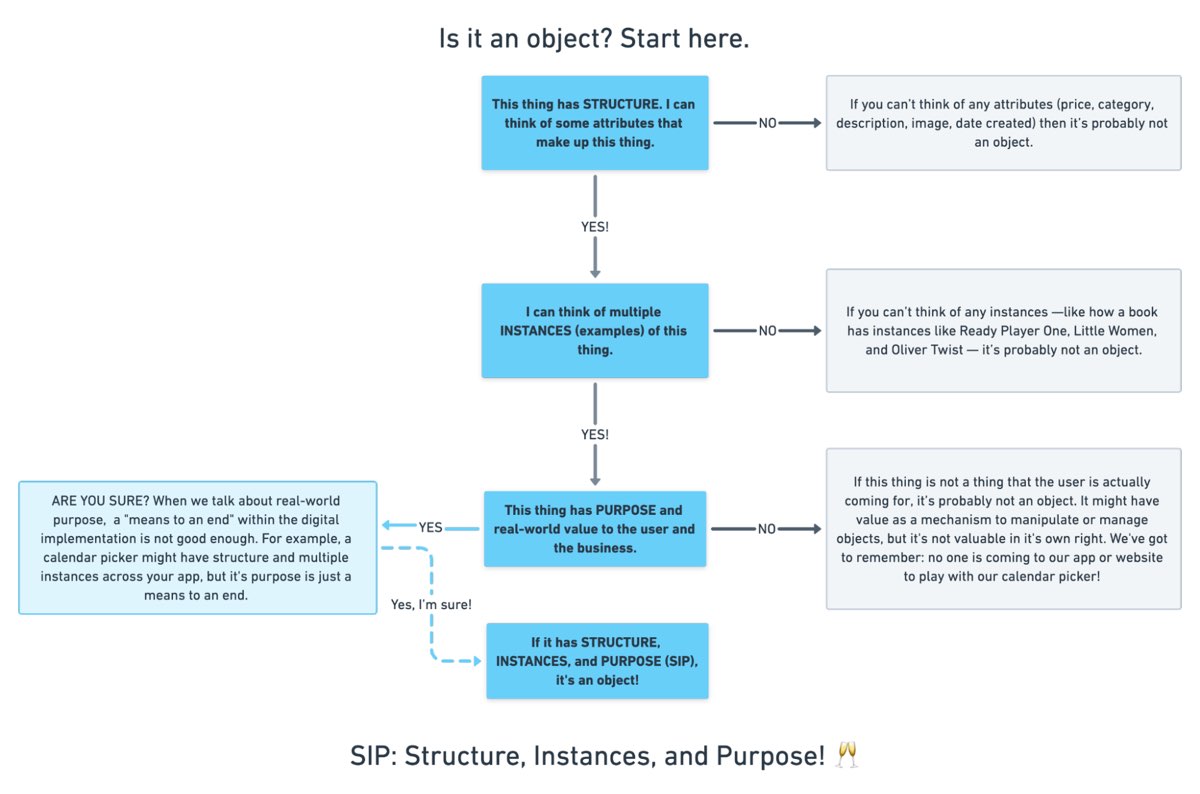
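If your sources are available as text, a small script can help you spot the nouns that keep coming back. The following is only a sketch: the source snippets and the candidate list are made up for illustration, and the matching is a naive case-insensitive substring check (so "rule" would also match "ruler"). It counts how many sources mention each candidate and keeps the ones that show up in at least two.

```python
from collections import Counter

# Hypothetical snippets standing in for real foraging sources.
sources = {
    "marketing site": "Organize every thread. Attach any file to a thread and share it with a contact.",
    "legacy app labels": "New thread | Contacts | Attachments | Rules",
    "support chat log": "My rule moved the thread to the wrong folder and the attachment is gone.",
}

# Hypothetical candidate nouns jotted down while reading.
candidates = ["thread", "contact", "attachment", "rule", "folder"]


def forage(sources, candidates):
    """Count how many sources mention each candidate noun."""
    seen_in = Counter()
    for text in sources.values():
        lowered = text.lower()
        for noun in candidates:
            if noun in lowered:  # naive substring match, assumption for brevity
                seen_in[noun] += 1
    return seen_in


counts = forage(sources, candidates)
recurring = sorted(noun for noun, count in counts.items() if count >= 2)
print(recurring)  # ['attachment', 'contact', 'rule', 'thread']
```

A tally like this is a starting point, not a verdict. A noun that appears in only one source may still matter, so treat the output as a list to discuss rather than a filter.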

You'll want to focus on nouns that might represent objects in your system. If you are having trouble determining if a noun might be object-worthy, remember the acronym SIP and test for:

- Structure

- Instances

- Purpose

Think of a library app, for example. Is "book" an object?

Structure: can you think of a few attributes for this potential object? Title, author, publish date… Yep, it has structure. Check!

Instances: what are some examples of this potential "book" object? Can you name a few? The Alchemist, Ready Player One, Everybody Poops… OK, check!

Purpose: why is this object important to the users and business? Well, "book" is what our library client is providing to people and books are why people come to the library… Check, check, check!

SIP: Structure, Instances, and Purpose! (Here's a flowchart where I elaborate even more on SIP.)
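The SIP test can also be written down as a tiny checklist in code. This is a rough sketch, not a formal definition: the class name, the field names, and the threshold of "at least two" attributes and instances are my assumptions for what "a few" means.

```python
from dataclasses import dataclass, field


@dataclass
class CandidateNoun:
    """A foraged noun plus the evidence gathered for each SIP check."""
    noun: str
    attributes: list[str] = field(default_factory=list)  # Structure
    instances: list[str] = field(default_factory=list)   # Instances
    purpose: str = ""                                     # Purpose

    def failed_sip_checks(self) -> list[str]:
        """Return the SIP checks this noun fails (an empty list means object-worthy)."""
        failed = []
        if len(self.attributes) < 2:  # assumed threshold for "a few"
            failed.append("structure")
        if len(self.instances) < 2:   # assumed threshold for "a few"
            failed.append("instances")
        if not self.purpose.strip():
            failed.append("purpose")
        return failed


book = CandidateNoun(
    noun="book",
    attributes=["title", "author", "publish date"],
    instances=["The Alchemist", "Ready Player One", "Everybody Poops"],
    purpose="Books are what the library provides and why people come.",
)
dropdown = CandidateNoun(noun="dropdown")

print(book.failed_sip_checks())      # []
print(dropdown.failed_sip_checks())  # ['structure', 'instances', 'purpose']
```

The useful part is not the pass or fail result but the blanks: a noun with an empty purpose or no nameable instances is a question to bring to your stakeholders.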

As you are noun foraging, focus on capturing the nouns that have SIP. Avoid capturing components like dropdowns, checkboxes, and calendar pickers—your UX system is not your design system! Components are just the packaging for objects—they are a means to an end. No one is coming to your digital place to play with your dropdown! They are coming for the VALUABLE THINGS and what they can do with them. Those things, or objects, are what we are trying to identify.

Let's say we work for a startup disrupting the email experience. This is how I'd start my noun foraging.

First I'd look at my own email client, which happens to be Gmail. I'd then look at Outlook and the new HEY email. I'd look at Yahoo, Hotmail…I'd even look at Slack and Basecamp and other so-called "email replacers." I'd read some articles, reviews, and forum threads where people are complaining about email. While doing all this, I would look for and write down the nouns.

(Before moving on, feel free to go noun foraging for this hypothetical product, too, and then scroll down to see how much our lists match up. Just don't get lost in your own emails! Come back to me!)

Drumroll, please…

Here are a few nouns I came up with during my noun foraging:

- email message

- thread

- contact

- client

- rule/automation

- email address that is not a contact?

- contact groups

- attachment

- Google doc file / other integrated file

- newsletter? (HEY treats this differently)

- saved responses and templates

In the OOUX world, we love color-coding. Blue is reserved for objects! (Yellow is for core content, pink is for metadata, and green is for calls-to-action. Learn more about the color-coded object map and connecting CTAs to objects.)

Scan your list of nouns and pick out words that you are completely clueless about. In our email example, it might be client or automation. Do as much homework as you can before your session with stakeholders: google what's googleable. But other terms might be so specific to the product or domain that you need to have a conversation about them.

Aside: here are some real nouns foraged during my own past project work that I needed my stakeholders to help me understand:

- Record Locator

- Incentive Home

- Augmented Line Item

- Curriculum-Based Measurement Probe

This is really all you need to prepare for the workshop session: a list of nouns that represent potential objects and a short list of nouns that need to be defined further.

Facilitate an Object Definition Workshop

You could actually start your workshop with noun foraging—this activity can be done collaboratively. If you have five people in the room, pick five sources, assign one to every person, and give everyone ten minutes to find the objects within their source. When the time's up, come together and find the overlap. Affinity mapping is your friend here!

If your team is short on time and might be reluctant to do this kind of grunt work (which is usually the case), do your own noun foraging beforehand, but be prepared to show your work. I love presenting screenshots of documents and screens with all the nouns already highlighted. Bring the artifacts of your process, and start the workshop with a five-minute overview of your noun foraging journey.

HOT TIP: before jumping into the workshop, frame the conversation as a requirements-gathering session to help you better understand the scope and details of the system. You don't need to let them know that you're looking for gaps in the team's understanding so that you can prove the need for more user research—that will be our little secret. Instead, go into the session optimistically, as if your knowledgeable stakeholders and PMs and biz folks already have all the answers.

Then, let the question whack-a-mole commence.

1. What is this thing?

Want to have some real fun? At the beginning of your session, ask stakeholders to privately write definitions for the handful of obscure nouns you might be uncertain about. Then, have everyone show their cards at the same time and see if you get different definitions (you will). This is gold for exposing misalignment and starting great conversations.

As your discussion unfolds, capture any agreed-upon definitions. And when uncertainty emerges, quietly (but visibly) start an "open questions" parking lot.

Do you remember when having a great website was enough? Now, people are getting answers from Siri, Google search snippets, and mobile apps, not just our websites. Forward-thinking organizations have adopted an omnichannel content strategy, whose mission is to reach audiences across multiple digital channels and platforms.

But how do you set up a content management system (CMS) to reach your audience now and in the future? I learned the hard way that creating a content model—a definition of content types, attributes, and relationships that let people and systems understand content—with my more familiar design-system thinking would capsize my customer's omnichannel content strategy. You can avoid that outcome by creating content models that are semantic and that also connect related content.

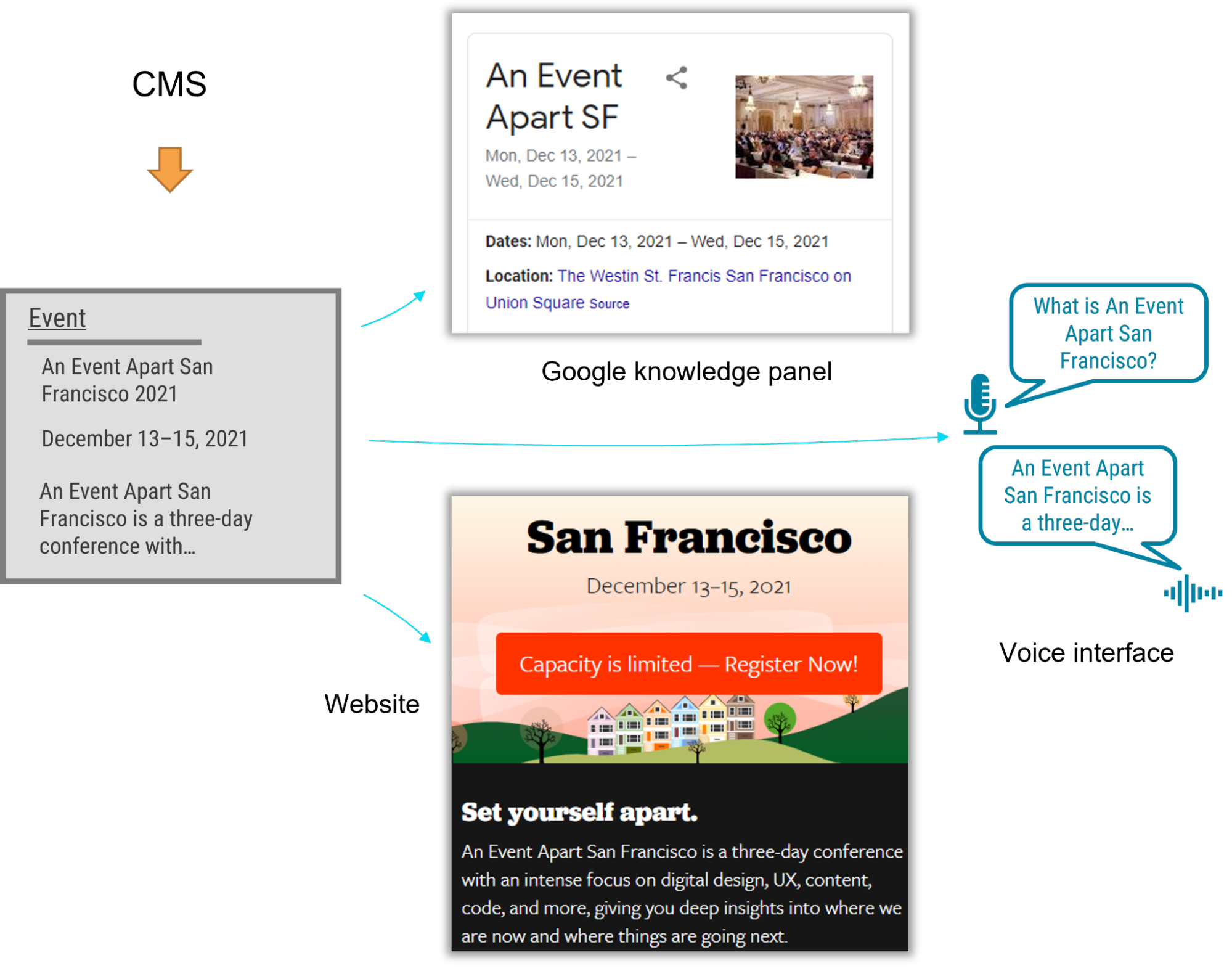

I recently had the opportunity to lead the CMS implementation for a Fortune 500 company. The client was excited by the benefits of an omnichannel content strategy, including content reuse, multichannel marketing, and robot delivery—designing content to be intelligible to bots, Google knowledge panels, snippets, and voice user interfaces.

A content model is a critical foundation for an omnichannel content strategy, and for our content to be understood by multiple systems, the model needed semantic types—types named according to their meaning instead of their presentation. Our goal was to let authors create content and reuse it wherever it was relevant. But as the project proceeded, I realized that supporting content reuse at the scale that my customer needed required the whole team to recognize a new pattern.

Despite our best intentions, we kept drawing from what we were more familiar with: design systems. Unlike web-focused content strategies, an omnichannel content strategy can't rely on WYSIWYG tools for design and layout. Our tendency to approach the content model with our familiar design-system thinking constantly led us to veer away from one of the primary purposes of a content model: delivering content to audiences on multiple marketing channels.

Two essential principles for an effective content model

We needed to help our designers, developers, and stakeholders understand that we were doing something very different from their prior web projects, where it was natural for everyone to think about content as visual building blocks fitting into layouts. The previous approach was not only more familiar but also more intuitive—at least at first—because it made the designs feel more tangible. We discovered two principles that helped the team understand how a content model differs from the design systems that we were used to:

- Content models must define semantics instead of layout.

- And content models should connect content that belongs together.

A semantic content model uses type and attribute names that reflect the meaning of the content, not how it will be displayed. For example, in a nonsemantic model, teams might create types like teasers, media blocks, and cards. Although these types might make it easy to lay out content, they don't help delivery channels understand the content's meaning, and that understanding is what would open the door to presenting the content in each marketing channel. In contrast, a semantic content model uses type names like product, service, and testimonial so that each delivery channel can understand the content and use it as it sees fit.
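As a rough illustration of the difference, here is a minimal Python sketch of a semantic type and two delivery channels that consume it. The `Testimonial` fields and both render functions are hypothetical; a real CMS would define types in its own schema language rather than in application code.

```python
from dataclasses import dataclass


@dataclass
class Testimonial:
    """Semantic type: named for what the content means, not how it looks."""
    quote: str
    customer_name: str


def render_for_web(t: Testimonial) -> str:
    """One channel decides on its own markup."""
    return f"<blockquote>{t.quote}</blockquote><cite>{t.customer_name}</cite>"


def render_for_voice(t: Testimonial) -> str:
    """Another channel turns the same content into a spoken sentence."""
    return f"{t.customer_name} says: {t.quote}"


testimonial = Testimonial(quote="Setup took ten minutes.", customer_name="Dana")
print(render_for_web(testimonial))
print(render_for_voice(testimonial))
```

A type named `Card` with fields like `left_column` and `right_column` could feed the first function but would give the second nothing to work with, because it records layout rather than meaning.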

When you're creating a semantic content model, a great place to start is to look over the types and properties defined by Schema.org, a community-driven resource for type definitions that are intelligible to platforms like Google search.

A semantic content model has several benefits:

- Even if your team doesn't care about omnichannel content, a semantic content model decouples content from its presentation so that teams can evolve the website's design without needing to refactor its content. In this way, content can withstand disruptive website redesigns.

- A semantic content model also provides a competitive edge. By adding structured data based on Schema.org's types and properties, a website can provide hints to help Google understand the content, display it in search snippets or knowledge panels, and use it to answer voice-interface user questions. Potential visitors could discover your content without ever setting foot in your website.

- Beyond those practical benefits, you'll also need a semantic content model if you want to deliver omnichannel content. To use the same content in multiple marketing channels, delivery channels need to be able to understand it. For example, if your content model were to provide a list of questions and answers, it could easily be rendered on a frequently asked questions (FAQ) page, but it could also be used in a voice interface or by a bot that answers common questions.
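The question-and-answer case in the last point can be sketched in a few lines of Python. The sample questions and the function names are hypothetical, and the voice lookup is deliberately naive (exact match, ignoring case). The structured-data shape uses Schema.org's `FAQPage`, `Question`, and `Answer` types.

```python
import json

# Hypothetical content, stored once as plain questions and answers.
faq_items = [
    {"question": "What are your opening hours?",
     "answer": "We are open 9 to 5, Monday through Friday."},
    {"question": "Do you offer refunds?",
     "answer": "Yes, within 30 days of purchase."},
]


def to_faq_jsonld(items):
    """Build Schema.org FAQPage structured data for a web page."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": item["question"],
                "acceptedAnswer": {"@type": "Answer", "text": item["answer"]},
            }
            for item in items
        ],
    }


def answer_for_voice(items, asked):
    """Return the stored answer for a spoken question, or None if unknown."""
    for item in items:
        if item["question"].lower() == asked.strip().lower():
            return item["answer"]
    return None


print(json.dumps(to_faq_jsonld(faq_items), indent=2))
print(answer_for_voice(faq_items, "do you offer refunds?"))
```

Both outputs come from the same list of questions and answers, which is the point: the content is authored once, and each channel shapes it to its own needs.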

For example, using a semantic content model for articles, events, people, and locations lets A List Apart provide cleanly structured data for search engines so that users can read the content on the website, in Google knowledge panels, and even with hypothetical voice interfaces in the future.

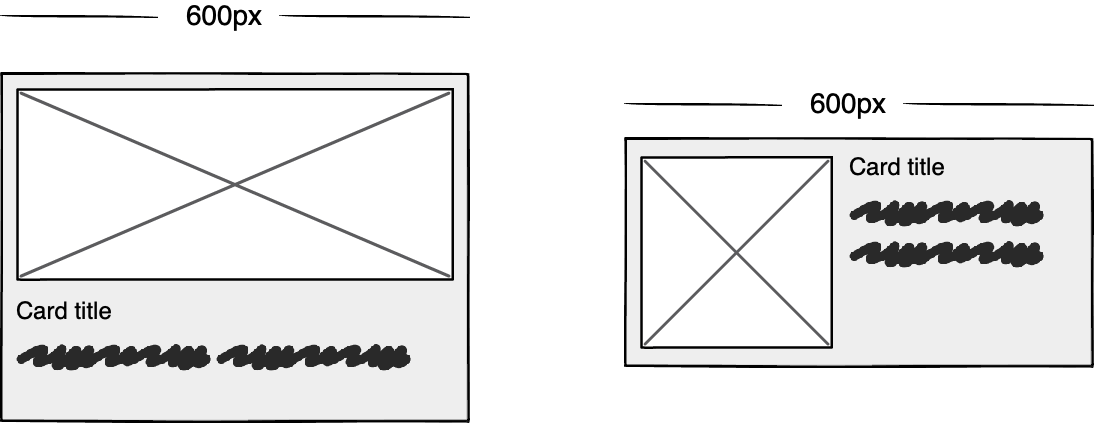

Content models that connect
