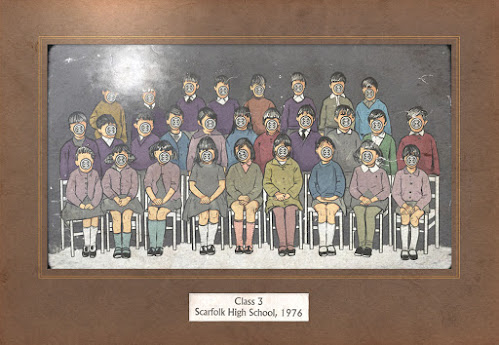

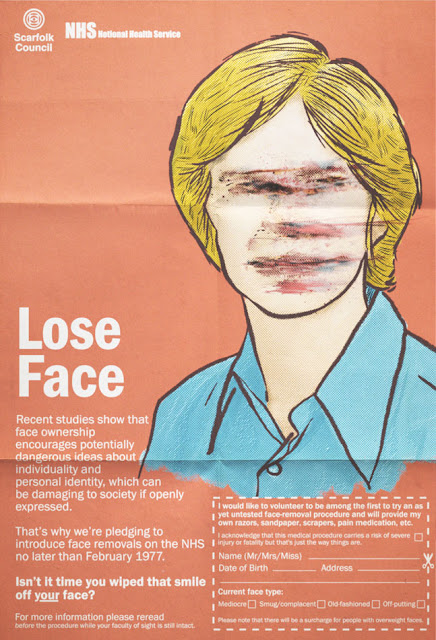

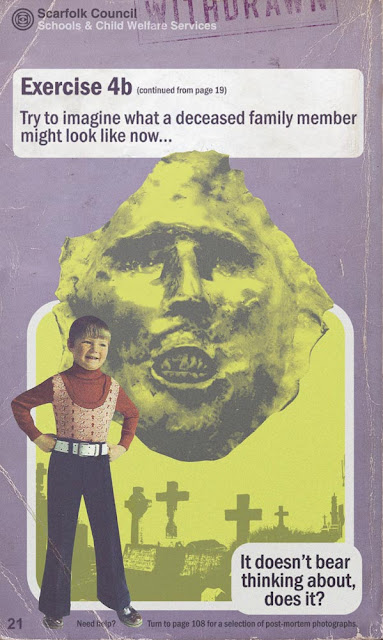

On 7 September, 1976, dozens of children, including every single pupil from class 3, Scarfolk High School, vanished on their way to school. A police operation was launched but no clues were ever found. The children were pronounced dead the following Monday, a mere three days later.

Every year thereafter, the police commissioned their sketch artist to draw, in the style of a school photograph, how the missing children might have looked (albeit with their faces removed) had they not disappeared in mysterious circumstances. This was sent to the bereaved parents of class 3 at an exorbitant cost of £31.25.

In the 1979 class sketch, one parent noticed a small label on one of the faceless figures' clothes that contained a code word only their child could have known.

Under mounting pressure from parents, the police eventually raided their artist's studio and found 347 children in his cellar, where many had been held captive for several years. The police immediately seized and confined the children as evidence in a crime investigation, which, after much dithering, ultimately never went to court, leaving the families no choice but to pursue a private prosecution against the kidnapper.

As the children had already been pronounced dead and the cost of amending the relevant paperwork was high, they were given away as prizes in the Scarfolk police raffle, which helped pay the legal fees of their sketch artist, who, it turns out, was the son of Scarfolk's police commissioner.

RESTful APIs are everywhere. This is funny, because how many people really know what "RESTful" is supposed to mean?

I think most of us can empathize with this Hacker News poster:

I've read several articles about REST, even a bit of the original paper. But I still have quite a vague idea about what it is. I'm beginning to think that nobody knows, that it's simply a very poorly defined concept.

I had planned to write a blog post exploring how REST came to be such a dominant paradigm for communication across the internet. I started my research by reading Roy Fielding's 2000 dissertation, which introduced REST to the world. After reading Fielding's dissertation, I realized that the much more interesting story here is how Fielding's ideas came to be so widely misunderstood.

Many more people know that Fielding's dissertation is where REST came from than have read the dissertation (fair enough), so misconceptions about what the dissertation actually contains are pervasive.

The biggest of these misconceptions is that the dissertation directly addresses the problem of building APIs. I had always assumed, as I imagine many people do, that REST was intended from the get-go as an architectural model for web APIs built on top of HTTP. I thought perhaps that there had been some chaotic experimental period where people were building APIs on top of HTTP all wrong, and then Fielding came along and presented REST as the sane way to do things. But the timeline doesn't make sense here: APIs for web services, in the sense that we know them today, weren't a thing until a few years after Fielding published his dissertation.

Fielding's dissertation (titled "Architectural Styles and the Design of Network-based Software Architectures") is not about how to build APIs on top of HTTP but rather about HTTP itself. Fielding contributed to the HTTP/1.0 specification and co-authored the HTTP/1.1 specification, which was published in 1999. He was interested in the architectural lessons that could be drawn from the design of the HTTP protocol; his dissertation presents REST as a distillation of the architectural principles that guided the standardization process for HTTP/1.1. Fielding used these principles to make decisions about which proposals to incorporate into HTTP/1.1. For example, he rejected a proposal to batch requests using new MGET and MHEAD methods because he felt the proposal violated the constraints prescribed by REST, especially the constraint that messages in a REST system should be easy to proxy and cache.1 So HTTP/1.1 was instead designed around persistent connections over which multiple HTTP requests can be sent. (Fielding also felt that cookies are not RESTful because they add state to what should be a stateless system, but their usage was already entrenched.2) REST, for Fielding, was not a guide to building HTTP-based systems but a guide to extending HTTP.

This isn't to say that Fielding doesn't think REST could be used to build other systems. It's just that he assumes these other systems will also be "distributed hypermedia systems." This is another misconception people have about REST: that it is a general architecture you can use for any kind of networked application. But you could sum up the part of the dissertation where Fielding introduces REST as, essentially, "Listen, we just designed HTTP, so if you also find yourself designing a distributed hypermedia system you should use this cool architecture we worked out called REST to make things easier." It's not obvious why Fielding thinks anyone would ever attempt to build such a thing given that the web already exists; perhaps in 2000 it seemed like there was room for more than one distributed hypermedia system in the world. Anyway, Fielding makes clear that REST is intended as a solution for the scalability and consistency problems that arise when trying to connect hypermedia across the internet, not as an architectural model for distributed applications in general.

We remember Fielding's dissertation now as the dissertation that introduced REST, but really the dissertation is about how much one-size-fits-all software architectures suck, and how you can better pick a software architecture appropriate for your needs. Only a single chapter of the dissertation is devoted to REST itself; much of the word count is spent on a taxonomy of alternative architectural styles3 that one could use for networked applications. Among these is the Pipe-and-Filter (PF) style, inspired by Unix pipes, along with various refinements of the Client-Server style (CS), such as Layered-Client-Server (LCS), Client-Cache-Stateless-Server (C$SS), and Layered-Client-Cache-Stateless-Server (LC$SS). The acronyms get unwieldy but Fielding's point is that you can mix and match constraints imposed by existing styles to derive new styles. REST gets derived this way and could instead have been called—but for obvious reasons was not—Uniform-Layered-Code-on-Demand-Client-Cache-Stateless-Server (ULCODC$SS). Fielding establishes this taxonomy to emphasize that different constraints are appropriate for different applications and that this last group of constraints were the ones he felt worked best for HTTP.
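The mix-and-match derivation can be sketched in a few lines of Python. This is only an illustration, not anything taken from the dissertation: each style is modeled as a set of constraint names, the `derive` helper and the constraint labels are hypothetical, and the derivation order simply follows the acronyms listed above.

```python
# Each architectural style is modeled as a set of constraint names.
# The labels and the derive() helper are illustrative assumptions.
CLIENT_SERVER = frozenset({"client-server"})


def derive(base, *constraints):
    """Return a new style: the base style plus extra constraints."""
    return frozenset(base) | frozenset(constraints)


LCS = derive(CLIENT_SERVER, "layered")             # Layered-Client-Server
C_SS = derive(CLIENT_SERVER, "cache", "stateless")  # Client-Cache-Stateless-Server
LC_SS = derive(C_SS, "layered")                     # Layered-Client-Cache-Stateless-Server
REST = derive(LC_SS, "code-on-demand", "uniform-interface")

if __name__ == "__main__":
    for name, style in [("LCS", LCS), ("C$SS", C_SS), ("LC$SS", LC_SS), ("REST", REST)]:
        print(name, sorted(style))
```

Modeling styles as sets makes the point of the taxonomy visible: REST is not a primitive but the union of constraints that other styles already impose, and a different application could just as well stop at a smaller union.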

This is the deep, deep irony of REST's ubiquity today. REST gets blindly used for all sorts of networked applications now, but Fielding originally offered REST as an illustration of how to derive a software architecture tailored to an individual application's particular needs.

I struggle to understand how this happened, because Fielding is so explicit about the pitfalls of not letting form follow function. He warns, almost at the very beginning of the dissertation, that "design-by-buzzword is a common occurrence" brought on by a failure to properly appreciate software architecture.4 He picks up this theme again several pages later:

Some architectural styles are often portrayed as "silver bullet" solutions for all forms of software. However, a good designer should select a style that matches the needs of a particular problem being solved.5

REST itself is an especially poor "silver bullet" solution, because, as Fielding later points out, it incorporates trade-offs that may not be appropriate unless you are building a distributed hypermedia application:

REST is designed to be efficient for large-grain hypermedia data transfer, optimizing for the common case of the Web, but resulting in an interface that is not optimal for other forms of architectural interaction.6

Fielding came up with REST because the web posed a thorny problem of "anarchic scalability," by which Fielding means the need to connect documents in a performant way across organizational and national boundaries. The constraints that REST imposes were carefully chosen to solve this anarchic scalability problem. Web service APIs that are public-facing have to deal with a similar problem, so one can see why REST is relevant there. Yet today it would not be at all surprising to find that an engineering team has built a backend using REST even though the backend only talks to clients that the engineering team has full control over. We have all become the architect in this Monty Python sketch, who designs an apartment building in the style of a slaughterhouse because slaughterhouses are the only thing he has experience building. (Fielding uses a line from this sketch as an epigraph for his dissertation: "Excuse me… did you say 'knives'?")

So, given that Fielding's dissertation was all about avoiding silver bullet software architectures, how did REST become a de facto standard for web services of every kind?

My theory is that, in the mid-2000s, the people who were sick of SOAP and wanted to do something else needed their own four-letter acronym.

I'm only half-joking here. SOAP, or the Simple Object Access Protocol, is a verbose and complicated protocol that you cannot use without first understanding a bunch of interrelated XML specifications. Early web services offered APIs based on SOAP, but, as more and more APIs started being offered in the mid-2000s, software developers burned by SOAP's complexity migrated away en masse.

Among this crowd, SOAP inspired contempt. Ruby-on-Rails dropped SOAP support in 2007, leading to this emblematic comment from Rails creator David Heinemeier Hansson: "We feel that SOAP is overly complicated. It's been taken over by the enterprise people, and when that happens, usually nothing good comes of it."7 The "enterprise people" wanted everything to be formally specified, but the get-shit-done crowd saw that as a waste of time.

If the get-shit-done crowd wasn't going to use SOAP, they still needed some standard way of doing things. Since everyone was using HTTP, and since everyone would keep using HTTP at least as a transport layer because of all the proxying and caching support, the simplest possible thing to do was just rely on HTTP's existing semantics. So that's what they did. They could have called their approach Fuck It, Overload HTTP (FIOH), and that would have been an accurate name, as anyone who has ever tried to decide what HTTP status code to return for a business logic error can attest. But that would have seemed recklessly blasé next to all the formal specification work that went into SOAP.

Luckily, there was this dissertation out there, written by a co-author of the HTTP/1.1 specification, that had something vaguely to do with extending HTTP and could offer FIOH a veneer of academic respectability. So REST was appropriated to give cover for what was really just FIOH.

I'm not saying that this is exactly how things happened, or that there was an actual conspiracy among irreverent startup types to misappropriate REST, but this story helps me understand how REST became a model for web service APIs when Fielding's dissertation isn't about web service APIs at all. Adopting REST's constraints makes some sense, especially for public-facing APIs that do cross organizational boundaries and thus benefit from REST's "uniform interface." That link must have been the kernel of why REST first got mentioned in connection with building APIs on the web. But imagining a separate approach called "FIOH," that borrowed the "REST" name partly just for marketing reasons, helps me account for the many disparities between what today we know as RESTful APIs and the REST architectural style that Fielding originally described.

REST purists often complain, for example, that so-called REST APIs aren't actually REST APIs because they do not use Hypermedia as The Engine of Application State (HATEOAS). Fielding himself has made this criticism. According to him, a real REST API is supposed to allow you to navigate all its endpoints from a base endpoint by following links. If you think that people are actually out there trying to build REST APIs, then this is a glaring omission—HATEOAS really is fundamental to Fielding's original conception of REST, especially considering that the "state transfer" in "Representational State Transfer" refers to navigating a state machine using hyperlinks between resources (and not, as many people seem to believe, to transferring resource state over the wire).8 But if you imagine that everyone is just building FIOH APIs and advertising them, with a nudge and a wink, as REST APIs, or slightly more honestly as "RESTful" APIs, then of course HATEOAS is unimportant.
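To make the HATEOAS idea concrete, here is a minimal sketch of a client that knows only a base endpoint and reaches everything else by following links. The API is entirely hypothetical: the resources, the `links` field, and the relation names are assumptions, and an in-memory dictionary stands in for real HTTP requests so that the example runs offline.

```python
# Hypothetical API, modeled as an in-memory dict instead of real HTTP calls.
RESOURCES = {
    "/": {"links": {"orders": "/orders", "customers": "/customers"}},
    "/orders": {"links": {"first": "/orders/1", "home": "/"}},
    "/orders/1": {
        "status": "shipped",
        "links": {"customer": "/customers/7", "collection": "/orders"},
    },
    "/customers": {"links": {"first": "/customers/7", "home": "/"}},
    "/customers/7": {"name": "Ada", "links": {"home": "/"}},
}


def get(url):
    """Stand-in for an HTTP GET: return the representation stored at url."""
    return RESOURCES[url]


def follow(*rels, base="/"):
    """Start at the base resource and follow the named link relations in order."""
    doc = get(base)
    for rel in rels:
        doc = get(doc["links"][rel])
    return doc


def discover(base="/"):
    """List every URL reachable from base using only the links in responses."""
    seen, queue = [], [base]
    while queue:
        url = queue.pop(0)
        if url in seen:
            continue
        seen.append(url)
        queue.extend(get(url).get("links", {}).values())
    return seen


if __name__ == "__main__":
    print(follow("orders", "first", "customer"))
    print(discover())
```

The client never constructs a URL such as `/customers/7` itself; it only chooses which link relation to follow next, which is the state-machine navigation that "state transfer" refers to.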

Similarly, you might be surprised to know that there is nothing in Fielding's dissertation about which HTTP verb should map to which CRUD action, even though software developers like to argue endlessly about whether using PUT or PATCH to update a resource is more RESTful. Having a standard mapping of HTTP verbs to CRUD actions is a useful thing, but this standard mapping is part of FIOH and not part of REST.
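For reference, the conventional mapping looks roughly like the sketch below. It reflects common practice rather than anything in the dissertation, and the `handle` function, the in-memory `store`, and the simplified treatment of POST (create only if the key is absent) are assumptions made for illustration.

```python
# Conventional verb-to-CRUD mapping; a community habit, not part of REST.
CRUD_BY_METHOD = {
    "POST": "create",
    "GET": "read",
    "PUT": "replace",
    "PATCH": "partial update",
    "DELETE": "delete",
}

store = {}  # hypothetical in-memory resource store, keyed by resource name


def handle(method, key, body=None):
    """Apply one request to the store and return the resulting resource."""
    if method == "GET":
        return store.get(key)
    if method == "POST":
        if key in store:
            raise KeyError(f"{key} already exists")
        store[key] = dict(body)
    elif method == "PUT":
        store[key] = dict(body)  # replace the whole resource
    elif method == "PATCH":
        store.setdefault(key, {}).update(body)  # merge into what is there
    elif method == "DELETE":
        store.pop(key, None)
    else:
        raise ValueError(f"unsupported method: {method}")
    return store.get(key)


if __name__ == "__main__":
    handle("POST", "user", {"name": "Ada", "role": "admin"})
    print(handle("PATCH", "user", {"role": "guest"}))  # name is kept
    print(handle("PUT", "user", {"role": "guest"}))    # name is dropped
```

The PUT-versus-PATCH argument comes down to the two branches above: PUT replaces the stored representation wholesale, while PATCH merges a partial change into it.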

This is why, rather than saying that nobody understands REST, we should just think of the term "REST" as having been misappropriated. The modern notion of a REST API has historical links to Fielding's REST architecture, but really the two things are separate. The historical link is good to keep in mind as a guide for when to build a RESTful API. Does your API cross organizational and national boundaries the same way that HTTP needs to? Then building a RESTful API with a predictable, uniform interface might be the right approach. If not, it's good to remember that Fielding favored having form follow function. Maybe something like GraphQL or even just JSON-RPC would be a better fit for what you are trying to accomplish.

If you enjoyed this post, more like it come out every four weeks! Follow @TwoBitHistory on Twitter or subscribe to the RSS feed to make sure you know when a new post is out.

Previously on TwoBitHistory…

New post is up! I wrote about how to solve differential equations using an analog computer from the '30s mostly made out of gears. As a bonus there's even some stuff in here about how to aim very large artillery pieces. https://t.co/fwswXymgZa

— TwoBitHistory (@TwoBitHistory) April 6, 2020

1. Roy Fielding. "Architectural Styles and the Design of Network-based Software Architectures," 128. 2000. University of California, Irvine, PhD Dissertation, accessed June 28, 2020, https://www.sciencedirect.com/science/article/pii/S0315086011000279.

2. Fielding, 130.

3. Fielding distinguishes between software architectures and software architecture "styles." REST is an architectural style that has an instantiation in the architecture of HTTP.

4. Fielding, 2.

5. Fielding, 15.

6. Fielding, 82.

7. Paul Krill. "Ruby on Rails 2.0 released for Web Apps," InfoWorld. Dec 7, 2007, accessed June 28, 2020, https://www.infoworld.com/article/2648925/ruby-on-rails-2-0-released-for-web-apps.html

8. Fielding, 109.

The pubs will reopen in a few days. Every day this week we will post a 1970s beer mat from the Scarfolk council archives. Collect them all!

When neither druid nor doctor could reverse the process, the victim became a town mascot, offering rides to children. Records show, however, that he was also secretly employed by the state to violently intimidate seditious citizens and prying outsiders. He was known among council staff as 'The Bouncer'.

The as-yet unsolved Steamroller Murders of Spring 1979, when dozens of people were discovered crushed flat with every bone in their bodies broken, were almost certainly a result of The Bouncer's handiwork.


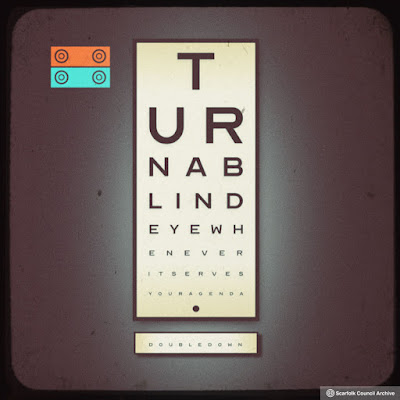

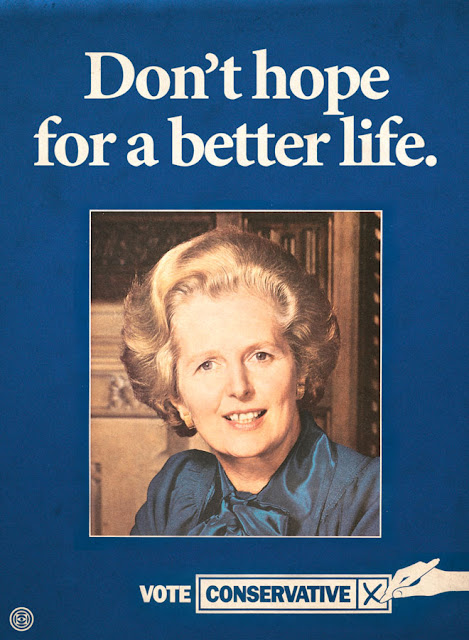

No one is entirely sure what the purpose of this public information poster was. All we know is that when a council worker accidentally posted it on billboards around Scarfolk, the poster below was quickly pasted over it.

Records show that the errant, anonymous worker was soon sold to another council where his job was either to feed the council pets or be fed to the council pets. Documents don't clarify which.

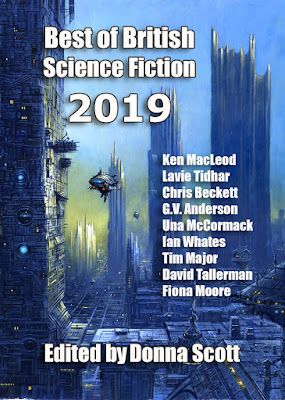

Contents

• 2019: An Introduction - Donna Scott

• The Anxiety Gene - Rhiannon Grist

• The Land of Grunts and Squeaks - Chris Beckett

• For Your Own Good - Ian Whates

• Neom - Lavie Tidhar

• Once You Start - Mike Morgan

• For the Wicked, Only Weeds Will Grow - G. V. Anderson

• Fat Man in the Bardo - Ken MacLeod

• Cyberstar - Val Nolan

• The Little People - Una McCormack

• The Loimaa Protocol - Robert Bagnall

• The Adaptation Point - Kate Macdonald

• The Final Ascent - Ian Creasey

• A Lady of Ganymede, a Sparrow of Io - Dafydd McKimm

• Snapshots - Leo X. Robertson

• Witch of the Weave - Henry Szabranski

• Parasite Art - David Tallerman

• Galena - Liam Hogan

• Ab Initio - Susan Boulton

• Ghosts - Emma Levin

• Concerning the Deprivation of Sleep - Tim Major

• Every Little Star - Fiona Moore

• The Minus-Four Sequence - Andrew Wallace

My short story 'Fat Man in the Bardo' was originally published in Shoreline of Infinity 14, and I'm well chuffed to see it here.

(TOC layout copied and pasted from the redoubtable Lavie Tidhar, who as you can see also has a story in it.)

At the end of April, the retail consultant Mary Portas appeared on the BBC’s World at One programme to discuss how physical shopping could continue to function during the coronavirus crisis.

Portas has a bit of form for, shall we say, car-centric ‘solutions’ to high street problems, proposing the quack remedy of free parking as a response to town centre decline, and generally arguing for unfettered access by motor traffic to shopping streets, while simultaneously paying scant attention to benign modes of transport like walking and cycling. So it was perhaps no great surprise to hear her complaining about having to pay car parking charges in London boroughs during the coronavirus pandemic, while singing the praises of department stores that have converted themselves into drive-throughs, a kind of transformation that these hidebound councils are apparently not enlightened enough to adopt.

Here's 'High Streets expert' Mary Portas advocating "luxury drive throughs" (yes, really) for retail, while moaning about current on-street parking charges. There really is going to be a battle for how our streets feel and look in the coming weeks and months pic.twitter.com/IEaugNqqrf

— Mark Treasure (@AsEasyAsRiding) April 28, 2020

I was reminded of this episode by this excellent cartoon from Dave Walker, which manages to capture the dystopian reality of the Portas worldview in the left panel.

As he so often does, @davewalker has managed to get a very important message, clearly, into a cartoon. Decision Time. pic.twitter.com/jcXda8gjvX

— John Dales

The idea of a society of entirely voluntary arrangements has its charms, but we don't live in one and are not likely to for quite some time. Until that happy day, public services should be funded out of taxation, rather than having to scrounge off the generosity of the public. In emergencies, however, we should pitch in. That's how I square my conscience with making donations, anyway. And if the inadequate supply of PPE to healthcare workers isn't an emergency, I don't know what is. So I was happy to contribute a story to an anthology of SF, fantasy and horror conceived and edited by Ian Whates at NewCon Press, and compiled and published with breathtaking speed. At a quarter of a million words from some of the leading names in the field, a paperback version would be an epoch-making brick that cost a significant chunk of cash. Electronic and weightless, Stories of Hope and Wonder is a steal at £5.99 / $7.99. Every penny of the proceeds goes straight to providing PPE and other support to UK healthcare workers. A significant amount, I understand, has already been raised and donated. More is needed.

They can't wait. Buy it now.

The core of this book describes working conditions in Bakkavor's food processing factories in West London, then moves on to describe how a Tesco distribution centre operates. The opening 100-plus pages set the scene and the central 180 pages follow; finally, after a curious detour into 3D printer manufacture (and leaving aside an appendix), the last 50 pages deal with the question of revolutionary organisation. Cut into the descriptions of contemporary labour and class exploitation is much useful analysis and historical material:

The food and drink industry is the UK's largest manufacturing sector, accounting for 17% of the total UK manufacturing turnover, contributing £28.2bn to the economy annually and employing 400,000 people. And while a lot of fruit and veg is imported, the shelf life of freshly prepared products (FPP) means that outsourcing this work overseas is not possible. All the FPP found in the chilled section of our supermarkets comes from UK factories. Page 136.

People in Britain buy around 3.5 million ready-meals a day, which easily makes it the leading ready-meals market in Europe. Working hours are some of the longest in Europe, which perhaps explains the demand. Page 139.

Bakkavor is one of the biggest UK food companies you've never heard of. You've probably got a Bakkavor food item in your fridge, but you wouldn't know it because their name won't be on the packaging. They employ around 17,000 people across various sites in the UK and source 5,000 products from around the world to supply the largest supermarkets with their own-brand products - from salads, to desserts, to ready-meals and pizzas. Pages 147/148.

Bakkavor has an ageing workforce, the majority in the 55-64 age bracket. The next biggest age group was workers aged between 45-54, fewer again in the 35-44 age range. I think this was a huge factor in the docility of the workforce in general, even when the union was ramping up its activity. There was an aversion to risk, a palpable fear of going on strike, and a resignation that only comes with living a hard life with few victories. That isn't to say there weren't some older workers who were up for the fight. Page 155.

A toxic culture of disrespect pervaded the factories… All the stress and bad vibes understandably had a negative impact on peoples' mental and physical health. One guy dropped down dead in the smoking area. Another guy, a night shift hygiene worker, died in his late forties. A mild-mannered Polish guy from the maintenance department had a psychotic episode and climbed onto the roof, sobbing in front of his workmates. A young office worker who everybody ignored even killed himself. Others had strokes and panic attacks and were taken away by the ambulance, which came with depressing regularity. It wasn't just that they were old or smoked, although of course those were factors. I think it was also the type of work and toxic culture that drove people to their limits. Page 178.

The poor working conditions at Bakkavor, bad pay and struggles to improve it - alongside the unhygienic methods of food production - are described in detail. The switches from more objective analysis to an utterly subjective position and speculative assertion are sudden and frequent. Some might see this as a weakness but it is actually the book's strength. It's a rhetorical device designed to give those who haven't done these jobs a feeling of insight into them and a sense of empathy with those depicted in the book. Likewise, if you have been employed in the industries described, you might be drawn to a conscious embrace of the book's wider analytical perspective in part due to a sense of identification with the text's more subjective turns. Even those who have not worked in these industries - or on some other factory floor - will recognise the social relations depicted from shops, offices and other places of employment.

In short Class Power On Zero Hours is worth reading for its central sections about food production and distribution. The opening and closing parts of the book may resonate with some but were less than thrilling to me. I found the initial section about west London especially tedious and almost gave up when I read the following sentence on the first full page:

Nobody on the London left had even heard of Greenford, not surprising due to its status as a cultural desert, in zone four on the Central line. Page 7.

I don't know - and don't care - if I'd count as part of what Angry Workers configure as the London left but I'd not only heard of Greenford, until lockdown I was going through it once a month on my way to an extended training session the martial arts club I belong to has in South Ruislip. Likewise, I have two friends - one born in the same south-west London hospital as me - who work for Ealing council (pest control and a desk job); for those who don't know, Greenford is part of the borough of Ealing. While I passed through rather than went to Greenford and Park Royal growing up, I spent plenty of time back then in Hounslow which isn't so far away.

Ultimately the claim that 'nobody' was familiar with Greenford reveals Angry Workers' contact with the working class across much of London when its members first arrived here to have been rather limited. Other things they say point to the same conclusion. On the basis of what the collective writes it would seem that many of those they hung out with in London before moving to the city's west were students who'd come here to take university courses and who saw themselves as on the left but were clueless about the place they'd relocated to. The text makes it clear Angry Workers went to great efforts to connect with the working class in west London, but leaves the impression they are still disconnected from it in other parts of the city.

The assertion that Greenford has cultural desert status appears obnoxious, racist and anti-working class: clearly not positions Angry Workers would want to be associated with even if what's quoted above might be (mis)read as linking them to views of this type. Bourgeois distaste for proletarian culture - sometimes expressed with the absurd assertion that the working class don't have a culture and exist in a 'cultural desert' - can be found among parts of what Angry Workers seem to be describing as the London 'left'. What 'the left' is and whether 'liberal' elements who want to transform everyone into a bourgeois subject are part of it might be seen by some as open to debate, although not by me. In odd places Class Power On Zero Hours lacks clarity in its verbal formulations but on the basis of the entire text, a generous guess would be it is the views of reactionaries who wish to demean working class immigrant communities that are being invoked in the statement about Greenford's cultural desert status rather than the Angry Workers collective itself believing this to be the case. That said, anyone who was born in the west or south-west of London or who has spent much time there can safely skip the early parts of this book. It is uneven but there is more than enough in its main section to make it worthwhile reading if you're consciously engaged in class struggle: or even if you're not, yet!

Finally, I really liked the solid pink inside covers of the book, so much so that I'm almost tempted to overlook the fact that this publication really cries out for an index. I'm unlikely to read the whole book twice but it would have been helpful to be able to find the parts I'm going to want to access again easily with an index.

Everyone, I hope you and yours are safe and well during this unprecedented pandemic.

As I write this, various governments are rushing to implement — or have already implemented — a wide range of different smartphone apps purporting to be for public health COVID-19 “contact tracing” purposes.

The landscape of these is changing literally hour by hour, but I want to emphasize MOST STRONGLY that all of these apps are not created equal, and that I urge you not to install various of these unless you are required to by law — which can indeed be the case in countries such as China and Poland, just to name two examples.

Without getting into deep technical details here, there are basically two kinds of these contact tracing apps. The first is apps that send your location or other contact-related data to centralized servers (whether the data being sent is claimed to be “anonymous” or not). Regardless of promised data security and professed limitations on government access to and use of such data, I do not recommend voluntarily choosing to install and/or use these apps under any circumstances.

The other category of contact tracing apps uses local phone storage and never sends your data to centralized servers. This is by far the safer category in which resides the recently announced Apple-Google Bluetooth contact tracing API, being adopted in some countries (including now in Germany, which just announced that due to privacy concerns it has changed course from its original plan of using centralized servers).

In general, installing and using local storage contact tracing apps presents a vastly less problematic and risky situation compared with centralized server apps.

Even if you personally have 100% faith that your own government will “do no wrong” with centralized server contact tracing apps — either now or in the future under different leadership — keep in mind that many other persons in your country may not be as naive as you are, and will likely refuse to install and/or use centralized server contact tracing apps unless forced to do so by authorities.

Very large-scale acceptance and use of any contact tracing apps are necessary for them to be effective for genuine pandemic-related public health purposes. If enough people won’t use them, they are essentially worthless for their purported purposes.

As I have previously noted, various governments around the world are salivating at the prospect of making mass surveillance via smartphones part of the so-called “new normal” — with genuine public health considerations as secondary goals at best.

We must all work together to bring the COVID-19 disaster to an end. But we must not permit this tragic situation to hand carte blanche permissions to governments to create and sustain ongoing privacy nightmares in the process.

Stay well, all.

–Lauren–

The Lord Mayor is not to be confused with the much better known but politically insignificant Mayor of London. Freemasons were cunning like that: they installed themselves in an office few knew anything about but which had loads of power and money as well as a rigged election, while leaving millions of Londoners to democratically put their cross against a dupe with a similar title and lots of visibility but little power. This meant that their World King AKA head of the Court of Aldermen would be left alone to plot in secret. When Boris told me this he also said he hoped people would not recall that he had once been Mayor of London but never Lord Mayor.

Even Johnson's close friend and fellow believer in the bleach cure Donald Trump had been ridiculed for confusing the Mayor of London and the Lord Mayor of London. Those colonials in New York and Washington might only have one mayor but mighty London had two! Boris confided to me he assumed most people were ignorant of the fact that he had been born in New York and wasn't really Lord Mayor material. He hoped no one would suspect he was anything but a true blue Britisher when he called heartily for his favoured brew of Watneys Red Barrel, a beer that had been initially tested on the public at the East Sheen Lawn Tennis Club in south west London. This was close to where John Dee had his home in the sixteenth century, explorer Richard Burton had his tomb and the 1970s punk rock band Subway Sect hailed from.

To tell the truth it wasn't just a desire to get stupid fresh with Catherine MacGuiness - and the multi-billion City's Cash sovereign wealth fund she jointly controlled with the Lord Mayor - that led Boris to turn down ordination into the Guildhall Lodge. He was also concerned that once he was buck naked and dressed in nothing but a blindfold during his initiation, he might be subjected to some indignity he wouldn't have stood still for if he'd been able to see what was going on. Not that there hadn't been lots of perversion when Boris had been a member of the Bullingdon Club at Oxford.

At the Bullingdon they'd hired prostitutes to perform sex acts for them, and then there'd been the time Boris had got so drunk that… Well he'd been so drunk he wasn't sure whether or not he'd taken a fresh corpse borrowed from the local morgue on a date to an expensive restaurant as a dare….. Returning to things that put Boris off becoming a fully paid up freemason, there was also the issue of what had happened to both his Ottoman great-grandfather and Roberto Calvi. Although he was not related to the latter, Calvi's death had been much closer to home. The body of God's banker, a top Italian freemason, had been found ritually strung up under Blackfriars Bridge. This was roughly half-way between Britain's Parliament where Boris was Prime Minister and the City of London's Guildhall HQ - where Lodge 3116 met without so much as having to pay to hire a room. Boris had a public image of being a powerful man but he wanted the keys to power that were actually held in the Guildhall. The City of London council got to send a Remembrancer to sit in the House of Commons and tell the government what the City thought of what it was doing. The arrangement wasn't reciprocal.

Returning to Ali Kema, he'd been assassinated during the Turkish War of Independence. Historians claimed Kema was bumped off for being a traitor to Mustafa Kemal Ataturk's cause but Johnson knew that he'd been killed so that the Turkish state could lay its hands on his great-grandfather's stock of red mercury. Boris had been told this by the freemasons who had engineered his rise through the ranks of British politics in order to repay a debt their grandparents owed to Kema. Once Johnson's friend Donald Trump had blown their plan to use a bleach cure to rid the world of Covid 19 - by revealing it prematurely and thus having it ridiculed by the press - it seemed like his best bet for dealing with the virus was to lay his hands on his great-grandfather's stock of red mercury. As every alchemist knows, red mercury is a super rare substance that will cure cancer or boils or almost any other ailment, so why not coronavirus too? The problem was getting hold of the red mercury. When Boris phoned the Turkish Embassy in London to ask for it they told him he was an Islamophobic asshole who'd betrayed his Ottoman heritage. Ingrates!

In the meantime Boris had been passed 7,500 ring donuts that a food bank in his South Ruislip constituency had been unable to distribute to the needy and which would pass their use by date in a matter of hours. Some food processing plant in Greenford was donating what they couldn't sell, and there'd been a huge decline in demand for donuts since a rumour had gone around that eating them while talking on your smartphone caused Covid 19. More than 40 branches of Derek's Donuts had been set ablaze in the past two weeks and hundreds of supermarket workers whose stores sold the snack had been abused and threatened. Of course Boris had got all of his cabinet members to denounce as idiots those who claimed eating donuts caused Covid 19, and he'd brought in some top scientists too whose secret research proved the same thing. None of which stopped the anti-donut activists from promoting the conspiracy theory and denouncing his favourite delicacy as junk food for cops. When push came to shove the country needed donuts for its police force. They - Boris was never explicit when using this generic term whether he was invoking donuts or the police or both - were vital to the UK's infrastructure and without them the virus couldn't be beaten! Likewise, if the boys in blue weren't able to eat donuts in peace then Boris would never Get Brexit Done!

As he was chauffeured to number 10 Downing Street with his 7,500 ring donuts, Boris found himself getting all hot and sweaty. Something had come over him and he'd had one of those flashes of inspiration that were common to men of genius. He'd use the ring donuts to worship the goddess in her triple form - not the conventional maiden, mother and crone, but rather mouth, backside and naughty bits! Boris wasn't too good at maths - he couldn't even work out how many children he had - but he figured the 7,500 donuts would just about cover three out of seven external orifices for every woman he'd ever slept with. If he'd had more ring donuts he might have indulged himself with nasal sex too. Boris was going to work backwards and imagine doing gross and naughty things with all those he'd known Biblically until every last donut had been abused. Johnson had got as far as Jennifer Arcuri when Dominic Cummings burst in and caught Boris bollock naked rubbing a disintegrating ring donut up and down his manhood.

"That's a waste of good donuts that is!" Cummings spat as he took in the remains of several dozen ruined sweet fry cakes on the floor.

"A man with your surname ought to understand what it's like when I've got the horn," Boris whinged defensively, "and besides even a glutton like me couldn't eat 7,500 donuts with a use by date we'll have gone past at midnight!"

"The witching hour!" Cummings boomed. "That reminds me, those scientists you've got advising you on the pandemic have no respect. They may know about the laws of nature but I know about the laws of spirit, and that means I outrank them all!"

"That's well and good, but we must do something about the bad publicity my government is getting over a lack of personal protection equipment for health workers!"

"That's why I told you not to waste the donuts!"

"What have donuts got to do with PPE?" The Prime Minister wanted to know.

"We can turn them into PPE," Dom explained. "Let's string lots of donuts together to make protective gowns. Two rings fastened to each other will make fantastic goggles."

"What about face masks, can we make donut face masks?" Boris asked excitedly.

"Don't be stupid," Dom chided, "anyone using donuts as a face mask would start licking off the sugar coating and then chewing on the cake. Donut face masks wouldn't last five minutes!"

The sugary smell of 7,500 ring donuts had attracted the attention of Larry the Downing Street cat who was mewling like a loon on the other side of the door. Boris let the feline into the room which was a mistake, since Larry was all over the stale fry cakes within seconds. Fortunately it was the ones Boris had used to frottage himself with that most interested the cat. These had traces of dead skin and even blood on them, since the sugar coating had caused a lot of friction when rubbed up and down the Prime Minister's love muscle.

Boris wasn't too hot in the fine motor skills department, in fact he probably needed testing for dyspraxia. Cummings certainly didn't want to risk being exposed as suffering from developmental co-ordination disorder and so his entrepreneurial bent led him to combine three economic sources that were of major significance to the UK - the charity sector's food banks for unwanted donuts, the government and immigrant labour. The Queensmead Sports Centre in South Ruislip's Victoria Road was closed due to the pandemic, and so Cummings decided to deploy its unused gym as a base from which to make prototype versions of the ring donut PPE that would turn around the public's false perception of a poor governmental performance with regard to the current pandemic.

Dom hired a Gujarati woman from Park Royal who'd initially come to the UK to work at the mammoth hummus production part of Bakkavor's Cumberland site in Greenford. She was extremely nifty with a needle and regularly worked as a seamstress because it was difficult to live on the poor wages paid at local food processing plants. Before 24 hours had passed Johnson and Cummings had what they'd dreamed up the previous night, a medical gown and goggles made of ring donuts! Well, the goggles were made of ring donuts. At the suggestion of the seamstress, the gown had been fabricated from jam donuts since having holes the length and breadth of the garment would have been a health risk to NHS heroes.

Although the donut PPE had been Dom's idea, Boris pulled rank and insisted that he be the first to try it out. Given it was made from literally hundreds of jam donuts, the gown proved to be pretty heavy but at least it was voluminous enough for a fatso like Johnson to wear. To keep Dom happy, Boris told him he was going to recommend his adviser for a Queen's Award for Enterprise on the basis of his donut recycling activities in South Ruislip. The prototype PPE turned out to be perfect in every way, except for a slight tendency for the donuts at the bottom of the gown to fall off with a soft plop as Boris spun around in his triumph at having saved the National Health Service. That said, as libertarians he and Dom both knew that ultimately private health was much more efficient than haemorrhaging corporate profits to pay for public services. So once Boris had saved the NHS and after everything got back to normal in about 3 weeks' time, he planned to abolish the NHS.

In his moment of glory for having saved the NHS, Boris decided to burst out of the gym and take a lap of honour on a Queensmead Sports Centre football pitch. After all he'd proved once again that England had won its wars - and the battle against Covid 19 was a war - on the playing fields of Eton! The fact that a fuckwit like Boris could get to be Prime Minister demonstrated that his parents had got real value for money when they'd paid for him to attend Britain's top public school. The fees were reassuringly expensive!

Two unfortunate things happened as Boris jigged across the football pitch. Firstly his smartphone rang, it was a call from a hefty female former professional kick boxer turned gym instructor with whom the PM was enjoying an intimate relationship.

"I found snot all over my dirty underwear when I was loading it into my washing machine just now. Have you been sniffing it again?"

This baseless accusation caused Johnson to sway and he'd never been a good runner at the best of times. He tripped over his own feet and fell to the ground. Seeing red oozing all around him, Boris thought he was a goner. While the British Prime Minister was able to pull the wool over his own eyes about his life ebbing away before him, he couldn't fool a passing swarm of wasps who knew that what Boris thought was his own blood was in fact strawberry jam. Recovering a slight semblance of sense at the sight of the descending wasps and wanting to save the prototype PPE, even if it was now squashed and in fragments, Johnson tried to shoo the insects away but this only made them angry. Boris quickly discovered that the painful pricks of failure were more or less equivalent to a dozen wasp stings.

In the interests of safety the plans for recycling donuts as PPE were shelved. It was back to the drawing board for Boris and Dom…. they still needed a way to demonstrate their political genius by defeating Covid 19.

Submarines also feature in a novella that's coming out sometime in the next month or two: Selkie Summer, from NewCon Press. Some years ago, between book contracts, I started writing a paranormal romance as an exercise, leaving it half-finished when the awaited boat came in. An online publisher showed interest in it as a novella, and was happy to wait until I'd finished The Corporation Wars. When I completed the novella two years ago, it was still front-loaded with an opening more suitable for a longer work, and for that and other reasons it didn't quite make the cut. I tinkered with it some more, and passed it to Ian Whates, who liked it and helpfully suggested further improvements. And after many vicissitudes, including a last-minute page-proof realisation that a Skye summer sunset was about an hour and a half later than I'd originally written, it's good to go! I'm very happy with the book's editing and production, and downright thrilled and delighted with Ben Baldwin's cover. I'm not sure if Selkie Summer meets the criteria for paranormal romance, but it's still about a young woman who falls for a paranormal entity. It's set in a contemporary Scotland much like ours, except that certain paranormal entities definitely exist and this is taken for granted as a fact of natural history. Partly as a consequence, there is no Skye Bridge.

It was due to be launched by me and Ian at Cymera 2020, which has now been cancelled (though it has some online content, and will have more, so keep checking it out). Meanwhile, you can hear me reading from the opening chapter in the online version of Edinburgh's monthly science fiction and fantasy cabaret, Event Horizon.

Another event that has had to move online is the Edinburgh Science Festival. I was honoured to be asked to give a short talk on a non-religious topic at the Festival's traditional St Giles service. I happened to have just read a book that got me thinking about contingency, Corliss Lamont's Freedom of Choice Affirmed, so I freely chose to talk about that. And as the contingencies we all know worked out, it's now online here.

Finally, a plug for a project I'm proud to have contributed to: the just-published Edwin Morgan Twenties, a set of five selections of twenty poems by the late great Makar, with introductions by Jackie Kay, Liz Lochhead, Ali Smith, Michael Rosen and me. You can buy the set for the bargain price of £16 (UK post free) or pick and mix. 'Space and spaces', the one I wrote the introduction to, brings together many of Morgan's science fiction and space poems -- and one or two that make a more metaphorical use of 'space' to brilliant effect. Like the other selections it's a mere £4 (UK post free) and is available here.

The fact that so many are completely incapable of keeping a safe distance from strangers - and this extends well beyond the cops and amateur cops - illustrates how alienated people are. Half the population seem to have no awareness of their own bodies or who's in the street or supermarket with them. Meanwhile, the homeless and mad are becoming ever more desperate and have either given up on begging and can be seen huddled together in encampments on Tottenham Court Road and elsewhere, or else have become much more aggressive in their quest for money to buy food and alcohol. The homeless are supposed to be in shelters but most still seem to be roaming around, presumably preferring the relatively greater freedom of the streets to being locked up under lockdown. There's a shortage of many street drugs but the government recognise booze as an essential and so London's off-licences (liquor stores) are open. Anyone hoping to sleep on the streets probably needs a drink or two in order to nod off, while the rest of the population are also living out the insane nightmare that is late-capitalism and dependence on alcohol is one way of dealing with it.

Covid 19 has brought out the best in many people and the worst in others. There are wonderful community mutual aid groups doing shopping for the vulnerable and delivering presents to children. Meanwhile hysterical media coverage links burning telecommunications masts and infrastructure to ridiculous conspiracy theories about 5G causing Covid 19. At least one of the fires the press was wringing its hands over recently and blaming on anti-5G activists turned out to have been due to faulty equipment and not suspicious. The papers call those who oppose 5G idiots because the equipment that was maliciously targeted is largely 3G and 4G. Since no one has yet been charged with - let alone convicted of - these acts of arson against phone masts, the fact that it isn't 5G equipment that was torched might well imply those involved in the vandalism aren't opposed to 5G and had other motives. The links made in the press between this arson and anti-5G activism are at best speculative.

There are many reasons for setting telecommunications infrastructure alight but even when it's just teens doing it for kicks it doesn't follow that we shouldn't be thrilled by film and photos of the resultant fires. Baudrillard said more than 50 years ago: "Something in all (wo)men profoundly rejoices at seeing a car burn.." This rings true because cars are a symbol of possessive individualism and have wrought untold destruction on our planet. Given the negative social impact of smartphones - including but not limited to surveillance and an intensification of work - today nothing is more beautiful than a burning phone mast. Technology isn't neutral, it shapes societies and human relations, and so the health and wellness concerns of anti-5G activists aren't the reason I get a buzz when I see burning telecommunications infrastructure. Nonetheless media hysteria about torched masts and Covid 19 conspiracy theories mean it's now nearly impossible to have a nuanced conversation about the joys and broader political dimensions of such vandalism, why everyone should get rid of their smartphone or what's actually bad about 3G, 4G and 5G.

Under lockdown I like to run around the streets for an hour a day, since it's quite a kick to see most of the shops and all the restaurants in central London closed, and knowing that even if they were open I wouldn't be using most of them. I don't even miss the record and book stores I did sometimes visit before Covid 19. I totally dig jogging through Soho and Covent Garden to visit the places I went as a teen 40 and more years ago and to revel in the fact that the London I knew then has entirely disappeared, just as the hyper-capitalist London of the current millennium is about to disappear. A different and better world is not only possible, it is also very necessary….

I’m doing fine, asymptomatic at the moment and hoping to stay that way until a working vaccine shows up. Hope you’re the same, or better yet, that you had a mild case and are now immune.

Anyway, good wishes aside, I wanted to say something I haven’t dared say for weeks: as bad as this crisis is, I suspect it’s a training wheels exercise for what we’ll have to do to deal with climate change. What I think right now is that if we seriously try to flatten the curve on greenhouse gas emissions, that effort is going to be like what we’re going through now, but longer and more thoroughly disruptive. It pretty much has to be if we’re going to avoid a mass extinction.

However, if you’re an artist looking for inspiration out of the darkness, that isn’t a bad thing.

First, the bad news. No, not the Covid-19 economic induced coma. I’m talking about the Nature paper that came out yesterday (link to an article about it). Basically, it says we’ve got about 10 years until we see tropical ocean ecosystems (coral reefs) start to collapse wholesale. If you know anything about mass extinctions, you know that the disappearance of biogenic reefs in the fossil record is the classic sign of a major/mass extinction event. So that’s maybe 10 years off, although the reefs are mostly in bad shape now. By 2050, the ecosystem disintegration will reach the temperate zone, and the mass extinction will swing into high gear. When I talk about bending the curve, trying to avoid a mass extinction in 10 years, which will start with the loss of a huge amount of fish that’s feeding hundreds of millions of people, that’s what I’m talking about avoiding. It’s about stopping the death of the coral reefs, and stopping the spread of the disaster.

Now if you use the paradigm I’ve used for the last ten years, you can assume this disaster will definitely happen, and that leads to Hot Earth Dreams land and the high altithermal starting in a decade.

But let’s look at the other side, where people survive however many waves of Covid-19 we go through until we get to a vaccine, and that breaks the inevitability, makes us think that maybe we can actually make a difference in the world. Scientists get respected enough again, and air quality improves so radically that people decide, not as a unified movement but en masse, to get serious about not dying due to climate change. The air quality improvement is quite real, and there’s enough footage of nature bouncing back (pandas mating, coyotes howling in San Francisco’s North Beach) that it’s just possible that people will get the idea that we can actually make a difference.

And so we start to bend the curve on climate pollution, let our fasts from consumerism get longer and longer, listen to the experts more than the reality stars, alternately slack and scramble to survive. Fantasy? You’re living it now.

I’m not going to portray this as easy. People are going hungry right now, whole careers and industries are in limbo. People are dying around the world, and we don’t even know what’s happening in the slums. While we have serious economic disruptors, people taking science seriously, and people showing their best in times of adversity, we also have predatory capitalism at its worst, with the current U.S. administration possibly behaving more like mafiosi than leaders.

And that’s the point, especially if you’re looking for disaster to inspire art, trying to figure out what will get made out of the broken shards of 2019 consumer culture, starting in 2021. Take all the disruption right now, the kleptocracy and vulture capitalism, the suffering of the essential workers keeping us alive, the kindness, heroism, and acts of creativity, the disruption of whole economic sectors surplused and gone, the world gone strangely silent and healing itself. Now ramp that up by orders of magnitude. That’s what flattening the curve on greenhouse gases will look like in the 2020s.

Now it’s not easy, but it’s not dystopian either. It’s a bit different: science is in charge, people are struggling to make a better world, solve the problems that are killing us now. That’s a classic science fiction theme. But I’m pretty sure that struggle will involve as much suffering as failing and letting the world die.

As the sign in the psychotherapist’s office says, either way it hurts. Death from climate change will be slow, horrible, and painful, with you living to watch everything you care about get destroyed around you. Fighting climate change will be slow, horrible, and painful, as you watch everything you grew up with either fall apart or change into new forms that will survive. If they’re equal, why not choose change? Why not struggle against huge forces to keep the world from being totally destroyed, give the coral reefs a chance to come back, the forests a chance to regrow without migrating to the poles? Why not struggle for a civilization that doesn’t kill itself?

Why not let this inspire you?

A differential analyzer is a mechanical, analog computer that can solve differential equations. Differential analyzers aren't used anymore because even a cheap laptop can solve the same equations much faster—and can do it in the background while you stream the new season of Westworld on HBO. Before the invention of digital computers though, differential analyzers allowed mathematicians to make calculations that would not have been practical otherwise.

It is hard to see today how a computer made out of anything other than digital circuitry printed in silicon could work. A mechanical computer sounds like something out of a steampunk novel. But differential analyzers did work and even proved to be an essential tool in many lines of research. Most famously, differential analyzers were used by the US Army to calculate range tables for their artillery pieces. Even the largest gun is not going to be effective unless you have a range table to help you aim it, so differential analyzers arguably played an important role in helping the Allies win the Second World War.

To understand how differential analyzers could do all this, you will need to know what differential equations are. Forgotten what those are? That's okay, because I had too.

Differential Equations

Differential equations are something you might first encounter in the final few weeks of a college-level Calculus I course. By that point in the semester, your underpaid adjunct professor will have taught you about limits, derivatives, and integrals; if you take those concepts and add an equals sign, you get a differential equation.

Differential equations describe rates of change in terms of some other variable (or perhaps multiple other variables). Whereas a familiar algebraic expression like $y = 4x + 3$ specifies the relationship between some variable quantity $y$ and some other variable quantity $x$, a differential equation, which might look like $\frac{dy}{dx} = x$, or even $\frac{dy}{dx} = 2$, specifies the relationship between a rate of change and some other variable quantity. Basically, a differential equation is just a description of a rate of change in exact mathematical terms. The first of those last two differential equations is saying, "The variable $y$ changes with respect to $x$ at a rate defined exactly by $x$," and the second is saying, "No matter what $x$ is, the variable $y$ changes with respect to $x$ at a rate of exactly 2."

Differential equations are useful because in the real world it is often easier to describe how complex systems change from one instant to the next than it is to come up with an equation describing the system at all possible instants. Differential equations are widely used in physics and engineering for that reason. One famous differential equation is the heat equation, which describes how heat diffuses through an object over time. It would be hard to come up with a function that fully describes the distribution of heat throughout an object given only a time $t$, but reasoning about how heat diffuses from one time to the next is less likely to turn your brain into soup: the hot bits near lots of cold bits will probably get colder, the cold bits near lots of hot bits will probably get hotter, etc. So the heat equation, though it is much more complicated than the examples in the last paragraph, is likewise just a description of rates of change. It describes how the temperature of any one point on the object will change over time given how its temperature differs from the points around it.
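For the curious, here is the form in which the heat equation is usually written. The symbols are the conventional textbook ones rather than anything defined in this post: $u$ is the temperature at a given point and time, $t$ is time, $\alpha$ is a constant describing how readily the material conducts heat, and $\nabla^2 u$ measures how much the temperature at a point differs from the average of the points around it.

$$
\frac{\partial u}{\partial t} = \alpha \nabla^2 u
$$

Read left to right, it says exactly what the paragraph above says in words: the rate at which a point's temperature changes over time is proportional to how different that point is from its surroundings.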

Let's consider another example that I think will make all of this more concrete. If I am standing in a vacuum and throw a tennis ball straight up, will it come back down before I asphyxiate? This kind of question, posed less dramatically, is the kind of thing I was asked in high school physics class, and all I needed to solve it back then were some basic Newtonian equations of motion. But let's pretend for a minute that I have forgotten those equations and all I can remember is that objects accelerate toward earth at a constant rate of $g$, or about $9.8\ \mathrm{m/s^2}$. How can differential equations help me solve this problem?

Well, we can express the one thing I remember about high school physics as a differential equation. The tennis ball, once it leaves my hand, will accelerate toward the earth at a rate of $g$. This is the same as saying that the velocity of the ball will change (in the negative direction) over time at a rate of $g$. We could even go one step further and say that the rate of change in the height of my ball above the ground (this is just its velocity) will change over time at a rate of negative $g$. We can write this down as the following, where $h$ represents height and $t$ represents time:

$$
\frac{d^2h}{dt^2} = -g
$$

This looks slightly different from the differential equations we have seen so far because this is what is known as a second-order differential equation. We are talking about the rate of change of a rate of change, which, as you might remember from your own calculus education, involves second derivatives. That's why parts of the expression on the left look like they are being squared. But this equation is still just expressing the fact that the ball accelerates downward at a constant acceleration of $g$.

From here, one option I have is to use the tools of calculus to solve the differential equation. With differential equations, this does not mean finding a single value or set of values that satisfy the relationship but instead finding a function or set of functions that do. Another way to think about this is that the differential equation is telling us that there is some function out there whose second derivative is the constant $-g$; we want to find that function because it will give us the height of the ball at any given time. This differential equation happens to be an easy one to solve. By doing so, we can re-derive the basic equations of motion that I had forgotten and easily calculate how long it will take the ball to come back down.
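Here is a sketch of that solution. It writes $h$ for height, $t$ for time, and $g$ for the acceleration due to gravity, and it assumes the ball leaves my hand at height $h_0$ with upward velocity $v_0$. Those last two symbols are labels I am introducing for illustration. Integrating the second derivative once gives the velocity, and integrating again gives the height:

$$
\begin{aligned}
\frac{d^2h}{dt^2} &= -g \\
\frac{dh}{dt} &= v_0 - g t \\
h(t) &= h_0 + v_0 t - \tfrac{1}{2} g t^2
\end{aligned}
$$

The ball is back in my hand when $h(t) = h_0$, which happens at $t = 0$ (the moment of the throw) and again at $t = 2 v_0 / g$. A throw at $10\ \mathrm{m/s}$ would therefore take about two seconds to return.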

But most of the time differential equations are hard to solve. Sometimes they are even impossible to solve. So another option I have, given that I paid more attention in my computer science classes than my calculus classes in college, is to take my differential equation and use it as the basis for a simulation. If I know the starting velocity and the acceleration of my tennis ball, then I can easily write a little for-loop, perhaps in Python, that iterates through my problem second by second and tells me what the velocity will be at any given second after the initial time. Once I've done that, I could tweak my for-loop so that it also uses the calculated velocity to update the height of the ball on each iteration. Now I can run my Python simulation and figure out when the ball will come back down. My simulation won't be perfectly accurate, but I can decrease the size of the time step if I need more accuracy. All I am trying to accomplish anyway is to figure out if the ball will come back down while I am still alive.
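A minimal sketch of such a for-loop might look like the following. The function name `time_to_land`, the 10 m/s throw, the 0.01-second step, and the 60-second cutoff are all choices I made for illustration; the only physics in it is the constant downward acceleration.

```python
G = 9.8  # acceleration due to gravity, in m/s^2


def time_to_land(v0, dt=0.01, max_time=60.0):
    """Step through the throw in increments of dt seconds.

    v0 is the upward launch velocity in m/s. Returns the simulated time
    at which the ball is back at launch height, or None if that has not
    happened within max_time seconds.
    """
    velocity = v0
    height = 0.0  # measured relative to the launch point
    steps = int(max_time / dt)
    for step in range(1, steps + 1):
        velocity -= G * dt        # acceleration changes the velocity
        height += velocity * dt   # velocity changes the height
        if height <= 0.0:
            return step * dt
    return None


if __name__ == "__main__":
    print(time_to_land(10.0))  # roughly two seconds for a 10 m/s throw
```

Each pass through the loop applies the differential equation once: the acceleration nudges the velocity, and the velocity nudges the height. Nothing in the loop knows the closed-form equations of motion.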

This is the numerical approach to solving a differential equation. It is how differential equations are solved in practice in most fields where they arise. Computers are indispensable here, because the accuracy of the simulation depends on us being able to take millions of small steps through our problem. Doing this by hand would obviously be error-prone and take a long time.
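To see why step size matters, here is a small experiment, again only a sketch with names and numbers of my own choosing. It simulates one second of a 10 m/s throw at three different step sizes and compares each result against the height given by the standard equation of motion.

```python
def height_at(t_end, v0, dt, g=9.8):
    """Simulate the throw in steps of dt and return the height at t_end."""
    velocity, height = v0, 0.0
    for _ in range(round(t_end / dt)):
        velocity -= g * dt
        height += velocity * dt
    return height


def exact_height(t, v0, g=9.8):
    """Height from the closed-form equation of motion, for comparison."""
    return v0 * t - 0.5 * g * t * t


if __name__ == "__main__":
    for dt in (0.1, 0.01, 0.001):
        error = abs(height_at(1.0, 10.0, dt) - exact_height(1.0, 10.0))
        print(f"dt={dt}: error={error:.4f} m")
```

With this simple update rule the error shrinks roughly in proportion to the step size, so each tenfold increase in accuracy costs about ten times as many steps. That trade-off is why the number of steps climbs so quickly.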

So what if I were not just standing in a vacuum with a tennis ball but were standing in a vacuum with a tennis ball in, say, 1936? I still want to automate my computation, but Claude Shannon won't even complete his master's thesis for another year yet (the one in which he casually implements Boolean algebra using electronic circuits). Without digital computers, I'm afraid, we have to go analog.

## The Differential Analyzer

The first differential analyzer was built between 1928 and 1931 at MIT by Vannevar Bush and Harold Hazen. Both men were engineers. The machine was created to tackle practical problems in applied mathematics and physics. It was supposed to address what Bush described, in a 1931 paper about the machine, as the contemporary problem of mathematicians who are "continually being hampered by the complexity rather than the profundity of the equations they employ."

A differential analyzer is a complicated arrangement of rods, gears, and spinning discs that can solve differential equations of up to the sixth order. It is like a digital computer in this way, which is also a complicated arrangement of simple parts that somehow adds up to a machine that can do amazing things. But whereas the circuitry of a digital computer implements Boolean logic that is then used to simulate arbitrary problems, the rods, gears, and spinning discs directly simulate the differential equation problem. This is what makes a differential analyzer an analog computer—it is a direct mechanical analogy for the real problem.

How on earth do gears and spinning discs do calculus? This is actually the easiest part of the machine to explain. The most important components in a differential analyzer are the six mechanical integrators, one for each order in a sixth-order differential equation. A mechanical integrator is a relatively simple device that can integrate a single input function; mechanical integrators go back to the 19th century. We will want to understand how they work, but, as an aside here, Bush's big accomplishment was not inventing the mechanical integrator but rather figuring out a practical way to chain integrators together to solve higher-order differential equations.

A mechanical integrator consists of one large spinning disc and one much smaller spinning wheel. The disc is laid flat parallel to the ground like the turntable of a record player. It is driven by a motor and rotates at a constant speed. The small wheel is suspended above the disc so that it rests on the surface of the disc ever so slightly—with enough pressure that the disc drives the wheel but not enough that the wheel cannot freely slide sideways over the surface of the disc. So as the disc turns, the wheel turns too.

The speed at which the wheel turns will depend on how far from the center of the disc the wheel is positioned. The inner parts of the disc, of course, are rotating more slowly than the outer parts. The wheel stays fixed where it is, but the disc is mounted on a carriage that can be moved back and forth in one direction, which repositions the wheel relative to the center of the disc. Now this is the key to how the integrator works: The position of the disc carriage is driven by the input function to the integrator. The output from the integrator is determined by the rotation of the small wheel. So your input function drives the rate of change of your output function and you have just transformed the derivative of some function into the function itself—which is what we call integration!
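To make the principle concrete, here is a rough Python model of a wheel-and-disc integrator. It is a sketch under simplifying assumptions: the wheel never slips, the disc's rotation stands in for the independent variable, and the function name and parameters are invented for this example. In each small step the disc turns through a tiny angle, and the patch of disc under the wheel travels a distance equal to that angle times the wheel's distance from the center, which is what turns the wheel.

```python
import math


def wheel_and_disc(input_fn, t_end, dt=1e-4, wheel_radius=1.0):
    """Return the total rotation of the wheel (in radians).

    input_fn(t) gives the displacement of the wheel from the disc's center
    when the disc has turned through t radians. A negative displacement puts
    the wheel on the other side of the center, so it turns backwards.
    """
    wheel_angle = 0.0
    steps = round(t_end / dt)
    for i in range(steps):
        t = i * dt
        displacement = input_fn(t)
        # Disc surface under the wheel moves displacement * dt, and the
        # wheel turns through that distance divided by its own radius.
        wheel_angle += displacement * dt / wheel_radius
    return wheel_angle


# Feeding in cos(t) as the displacement should give roughly sin(t) back.
result = wheel_and_disc(math.cos, t_end=2.0)
print(result, math.sin(2.0))
```

The loop is just a Riemann sum, of course. The difference is that the real device performs the sum continuously, with no time step at all.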

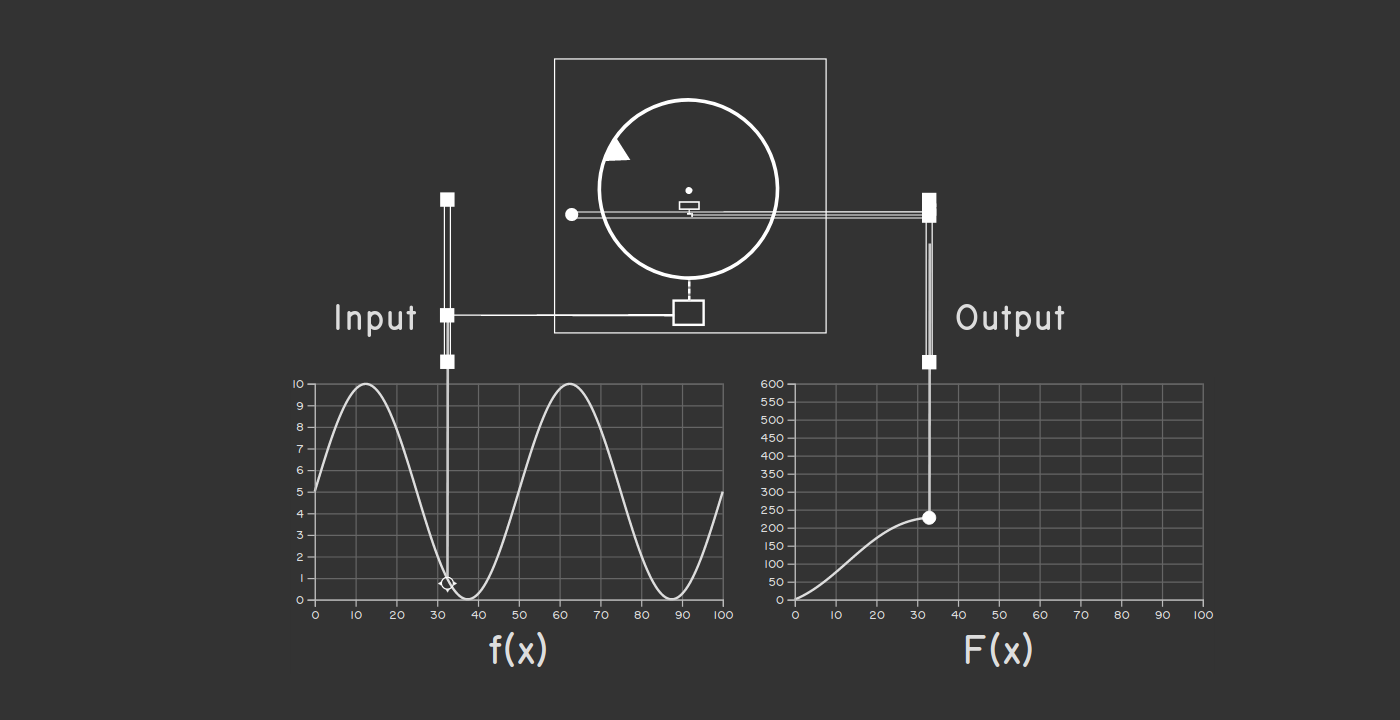

If that explanation does nothing for you, seeing a mechanical integrator in action really helps. The principle is surprisingly simple and there is no way to watch the device operate without grasping how it works. So I have created a visualization of a running mechanical integrator that I encourage you to take a look at. The visualization shows the integration of some function into its antiderivative while various things spin and move. It's pretty exciting.

A nice screenshot of my visualization, but you should check out the real thing!

So we have a component that can do integration for us, but that alone is not enough to solve a differential equation. To explain the full process to you, I'm going to use an example that Bush offers himself in his 1931 paper, which also happens to be essentially the same example we contemplated in our earlier discussion of differential equations. (This was a happy accident!) Bush introduces the following differential equation to represent the motion of a falling body:

$$\frac{d^2x}{dt^2} = -k\,\frac{dx}{dt} - g$$

This is the same equation we used to model the motion of our tennis ball, only Bush has used $x$ for the height of the ball and has added another term that accounts for how air resistance will decelerate the ball. This new term describes the effect of air resistance on the ball in the simplest possible way: The air will slow the ball's velocity at a rate that is proportional to its velocity (the $k$ here is some proportionality constant whose value we don't really care about). So as the ball moves faster, the force of air resistance will be stronger, further decelerating the ball.

To configure a differential analyzer to solve this differential equation, we have to start with what Bush calls the "input table." The input table is just a piece of graphing paper mounted on a carriage. If we were trying to solve a more complicated equation, the operator of the machine would first plot our input function on the graphing paper and then, once the machine starts running, trace out the function using a pointer connected to the rest of the machine. In this case, though, our input is just the constant $g$, so we only have to move the pointer to the right value and then leave it there.

What about the other variables $x$ and $t$? The variable $x$ is our output as it represents the height of the ball. It will be plotted on graphing paper placed on the output table, which is similar to the input table only the pointer is a pen and is driven by the machine. The variable $t$ should do nothing more than advance at a steady rate. (In our Python simulation of the tennis ball problem as posed earlier, we just incremented $t$ in a loop.) So the variable $t$ comes from the differential analyzer's motor, which kicks off the whole process by rotating the rod connected to it at a constant speed.

Bush has a helpful diagram documenting all of this that I will show you in a second, but first we need to make one more tweak to our differential equation that will make the diagram easier to understand. We can integrate both sides of our equation once, yielding the following:

$$\frac{dx}{dt} = -\int \left( k\,\frac{dx}{dt} + g \right) dt$$

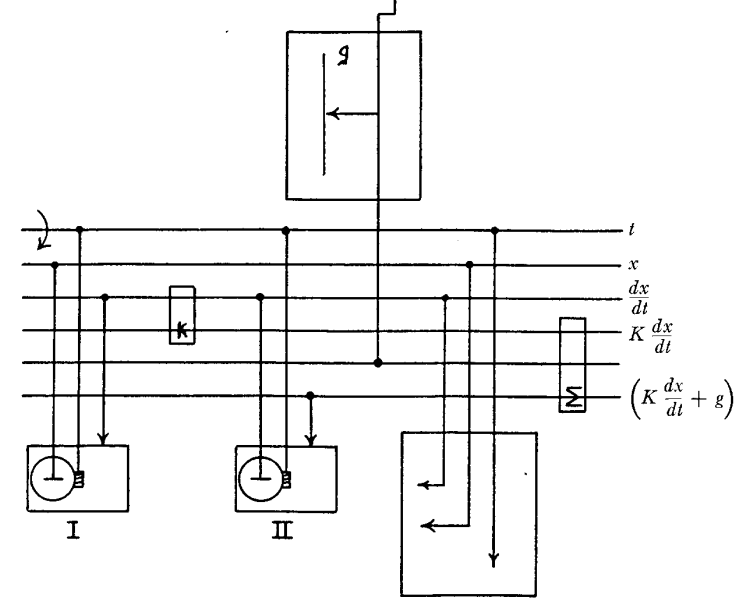

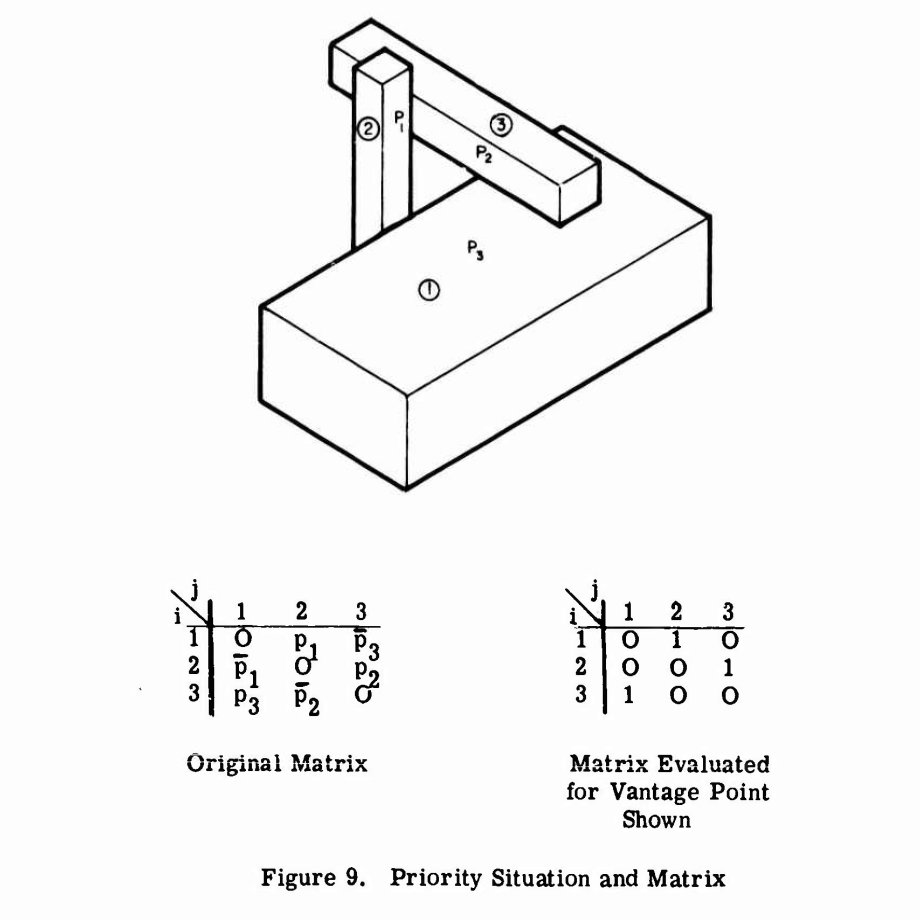

The terms in this equation map better to values represented by the rotation of various parts of the machine while it runs. Okay, here's that diagram:

The differential analyzer configured to solve the problem of a falling body in one dimension.

The input table is at the top of the diagram. The output table is at the bottom-right. The output table here is set up to graph both $x$ and $\frac{dx}{dt}$, i.e. height and velocity. The integrators appear at the bottom-left; since this is a second-order differential equation, we need two. The motor drives the very top rod labeled $t$. (Interestingly, Bush referred to these horizontal rods as "buses.")

That leaves two components unexplained. The box with the little $k$ in it is a multiplier representing our proportionality constant $k$. It takes the rotation of the rod labeled $\frac{dx}{dt}$ and scales it up or down using a gear ratio. The box with the $\sum$ symbol is an adder. It uses a clever arrangement of gears to add the rotations of two rods together to drive a third rod. We need it since our equation involves the sum of two terms. These extra components available in the differential analyzer ensure that the machine can flexibly simulate equations with all kinds of terms and coefficients.

I find it helpful to reason in ultra-slow motion about the cascade of cause and effect that plays out as soon as the motor starts running. The motor immediately begins to rotate the rod labeled $t$ at a constant speed. Thus, we have our notion of time. This rod does three things, illustrated by the three vertical rods connected to it: it drives the rotation of the discs in both integrators and also advances the carriage of the output table so that the output pen begins to draw.

Now if the integrators were set up so that their wheels are centered, then the rotation of rod $t$ would cause no other rods to rotate. The integrator discs would spin but the wheels, centered as they are, would not be driven. The output chart would just show a flat line. This happens because we have not accounted for the initial conditions of the problem. In our earlier Python simulation, we needed to know the initial velocity of the ball, which we would have represented there as a constant variable or as a parameter of our Python function. Here, we account for the initial velocity and acceleration by displacing the integrator discs by the appropriate amount before the machine begins to run.

Once we've done that, the rotation of rod $t$ propagates through the whole system. Physically, a lot of things start rotating at the same time, but we can think of the rotation going first to integrator II, which combines it with the acceleration expression calculated based on $g$ and then integrates it to get the result $\frac{dx}{dt}$. This represents the velocity of the ball. The velocity is in turn used as input to integrator I, whose disc is displaced so that the output wheel rotates at the rate $\frac{dx}{dt}$. The output from integrator I is our final output $x$, which gets routed directly to the output table.

One confusing thing I've glossed over is that there is a cycle in the machine: Integrator II takes as an input the rotation of the rod labeled $k\frac{dx}{dt} + g$, but that rod's rotation is determined in part by the output from integrator II itself. This might make you feel queasy, but there is no physical issue here, because everything is rotating at once. If anything, we should not be surprised to see cycles like this, since differential equations often describe rates of change in a function as a function of the function itself. (In this example, the acceleration, which is the rate of change of velocity, depends on the velocity.)
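The whole arrangement, feedback cycle included, can be mimicked in a few lines of Python. This is only a digital caricature of the wiring described above, not a model of the real machine: the function name, the value of `k`, the initial velocity, and the time step are all assumptions made for illustration. Each pass through the loop plays the role of the motor turning the time rod by a small amount.

```python
import math


def run_analyzer(v0, x0=0.0, k=0.5, g=9.81, t_end=2.0, dt=1e-4):
    """Trace (t, x, dx/dt) for d2x/dt2 = -k dx/dt - g.

    v0 and x0 are the initial conditions, which on the real machine would
    be set up before the motor starts. All defaults are hypothetical.
    """
    x, v = x0, v0
    trace = [(0.0, x, v)]
    steps = round(t_end / dt)
    for i in range(steps):
        scaled = k * v        # multiplier: scale the velocity rod by k
        summed = scaled + g   # adder: combine with the constant input g
        v -= summed * dt      # integrator II: dx/dt = -integral(k dx/dt + g)
        x += v * dt           # integrator I: x = integral(dx/dt)
        trace.append(((i + 1) * dt, x, v))
    return trace


trace = run_analyzer(v0=20.0)
t, x, v = trace[-1]
# For this simple equation a closed-form velocity exists to compare against.
exact_v = (20.0 + 9.81 / 0.5) * math.exp(-0.5 * t) - 9.81 / 0.5
print(f"t={t:.1f}s  simulated v={v:.3f}  exact v={exact_v:.3f}")
```

Notice that `v` appears on both sides of the integrator II update. That is the cycle: the velocity feeds the multiplier and adder, whose output is integrated to produce the velocity.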

With everything correctly configured, the output we get is a nice graph, charting both the position and velocity of our ball over time. This graph is on paper. To our modern digital sensibilities, that might seem absurd. What can you do with a paper graph? While it's true that the differential analyzer is not so magical that it can write out a neat mathematical expression for the solution to our problem, it's worth remembering that neat solutions to many differential equations are not possible anyway. The paper graph that the machine does write out contains exactly the same information that could be output by our earlier Python simulation of a falling ball: where the ball is at any given time. It can be used to answer any practical question you might have about the problem.

The differential analyzer is a preposterously cool machine. It is complicated, but it fundamentally involves nothing more than rotating rods and gears. You don't have to be an electrical engineer or know how to fabricate a microchip to understand all the physical processes involved. And yet the machine does calculus! It solves differential equations that you never could on your own. The differential analyzer demonstrates that the key material required for the construction of a useful computing machine is not silicon but human ingenuity.

## Murdering People

Human ingenuity can serve purposes both good and bad. As I have mentioned, the highest-profile use of differential analyzers historically was to calculate artillery range tables for the US Army. To the extent that the Second World War was the "Good Fight," this was probably for the best. But there is also no getting past the fact that differential analyzers helped to make very large guns better at killing lots of people. And kill lots of people they did: if Wikipedia is to be believed, more soldiers were killed by artillery than small arms fire during the Second World War.

I will get back to the moralizing in a minute, but just a quick detour here to explain why calculating range tables was hard and how differential analyzers helped, because it's nice to see how differential analyzers were applied to a real problem. A range table tells the artilleryman operating a gun how high to elevate the barrel to reach a certain range. One way to produce a range table might be just to fire that particular kind of gun at different angles of elevation many times and record the results. This was done at proving grounds like the Aberdeen Proving Ground in Maryland. But producing range tables solely through empirical observation like this is expensive and time-consuming. There is also no way to account for other factors like the weather or for different weights of shell without combinatorially increasing the necessary number of firings to something unmanageable. So using a mathematical theory that can fill in a complete range table based on a smaller number of observed firings is a better approach.

I don't want to get too deep into how these mathematical theories work, because the math is complicated and I don't really understand it. But as you might imagine, the physics that governs the motion of an artillery shell in flight is not that different from the physics that governs the motion of a tennis ball thrown upward. The need for accuracy means that the differential equations employed have to depart from the idealized forms we've been using and quickly get gnarly. Even the earliest attempts to formulate a rigorous ballistic theory involve equations that account for, among other factors, the weight, diameter, and shape of the projectile, the prevailing wind, the altitude, the atmospheric density, and the rotation of the earth.[1]
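To give a feel for the shape of the problem, here is a toy range table computed in Python. It is emphatically not a real ballistic theory: it reuses the idealized linear air-resistance term from the falling-body example, now in two dimensions, and the muzzle speed, drag constant, and function name are made-up values chosen only for illustration.

```python
import math


def shell_range(elevation_deg, muzzle_speed=300.0, k=0.05, g=9.81, dt=0.001):
    """Horizontal distance (m) covered before the shell returns to launch height.

    Uses drag proportional to velocity, a drastic simplification, and
    hypothetical parameter values.
    """
    angle = math.radians(elevation_deg)
    x, y = 0.0, 0.0
    vx = muzzle_speed * math.cos(angle)
    vy = muzzle_speed * math.sin(angle)
    while True:
        vx -= k * vx * dt         # drag slows horizontal motion
        vy -= (k * vy + g) * dt   # drag and gravity act vertically
        x += vx * dt
        y += vy * dt
        if y <= 0.0:
            return x


# One row per elevation, in 10-degree increments.
range_table = {angle: shell_range(angle) for angle in range(10, 81, 10)}
for angle, distance in range_table.items():
    print(f"{angle:2d} deg -> {distance:7.0f} m")
```

Every extra factor, such as wind or shell weight, adds another dimension to the table, and every cell means solving the differential equations again. That is the work the differential analyzer was put to.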