Which is what we called "Asset Management" behind the scenes, while I was writing it. Of course, back then I didn't know I was writing it for Keanu fucking Reeves.

Apparently they mentioned my name on some ComicCon panel about a month ago, which effectively lifted the embargo ("Brag #2" was as far as I could go back in September). Now they've released a couple of meaty clips over on YouTube. I am—I suppose the word is psyched.

You can see me in there, kind of. My dialog didn't make it through unscathed, because "Asset Management" incorporated specific game/plot elements that were declared off-limits post hoc (not really sure why; spoilers can't be much of an issue for a game that came out over a year ago). The folks at Blur had to strip out a lot of details to make that work; they changed the ending entirely. But—and you don't see authors admit this very often, so take it to heart—I think their changes were for the better. I'd been writing within the constraints not just of Rubicon the world, but also of Armored Core VI's specific plot. Those constraints forced the story into a specific and complex mold. Unbound, though, it could assume a simpler and more natural shape.

So the dialog in one of these clips expresses the essence of what I wrote with slightly different verbiage. The dialog in the other is pretty much all me, but there's only one line of it so that's not saying much. The mech, as Keanu mentions, is called Shrieker— but that's just a phonetic simplification of a much more awkward spelling, with much more world-changing implications. I look forward to seeing whether we get to see the actual name stamped onto a breastplate or something.

A Boy and His Mech


Because I still haven't seen the whole thing, you see. Blur tried to slip me a copy, but Amazon would only let it stream via a service that supports just two of the most pernicious and surveillant browsers on the planet (Chrome and Edge). It wouldn't play nicely with Firefox, Brave, and/or their associated privacy plug-ins— and there was no way I was gonna let those guards down. Hell, Amazon's one of the reasons I have them up in the first place.

A Mech and Its Boy.


So once you check out the clips you'll have seen all I have. But the good news is, those moments are totally in sync with the vibe I was going for when I wrote the treatment. And the changes that make any difference at all are improvements.

For a proper assessment, of course, I'll just have to wait until December 10th with the rest of you. Steal the ep when it drops.

You and me both, Keanu. In it to the end.


"In 1933, people were not fooled by propaganda. They elected a leader who openly disclosed his plans with great clarity. The Germans elected me… ordinary people who chose to elect an extraordinary man, and entrust the fate of the country to him.

"What do you want to do, Sawatzki? Ban elections?"

—Adolf Hitler, "Er ist wieder da" (2015)

Pete Townshend's prayer has gone unanswered.

The US Dollar is "surging" even as I type, along with Bitcoin and shares in Tesla. Wall Street opened at an all-time high. The world's leaders, scared shitless, scramble over each other to fall in line and congratulate the new/old boss on his resurrection. The world's tyrants and shitstains—Orban, Netanyahu, Bolsonaro— were of course at the front of the line[1], but even vacuous ineffectual bobbleheads like Canada's own PM jump up and down like eager puppies, reminding Dear Leader of a friendship "united by a shared history, common values and steadfast ties between our peoples". (And, as the BUG astutely remarked, wouldn't it be interesting to see the alternate statements all these folks had doubtless prepared in the event that the merely mediocre candidate had triumphed over the apocalyptically bad one?)

Trump may not have spoken with the "great clarity" that Hitler commanded, but it's not as if he hid his agenda. He won anyway, handily. I guess you really do get the government you deserve.

And yet, for all Trump's hateful narcissistic idiocy, he's not really the problem. Trump is merely a symptom. The problem is a political system that rewards the world's Trumps with massive power and influence, instead of marginalizing them. A number of left-leaning 'Murricans—most, I'd wager—seem to regard their homeland as a great nation, a shining experiment in liberty and democracy, that somehow lost its way. I tend to be less charitable. The US is a nation literally founded on invasion, slavery, and germ warfare—which is to say, it was born in the universal reality of human beings fucking each other over for a percentage. It is a global case-in-point of Homo so-called sapiens belying its own self-aggrandizing myths and behaving exactly like the social mammals we are: short-sighted apes, prioritizing the approval of the tribe over long-term consequences our intellects grasp dimly at best, and our guts reject outright. Facts don't matter. Truth is not the survival trait. Conformity is: defense of the tribe, hatred of The Other.

U S A. U S A.

Is there hope? Perhaps a little: ironically, in the crushing of hope. Because despite what the hopepunks have been wittering on about all these years—despite the bromides about how we should stop writing dystopias and never say 1.5° is 'unachievable' because to do so would give in to paralyzing narratives of hopelessness and despair—we have evidence that the exact opposite is true. Ballew et al over at Nature report that "Climate change psychological distress is associated with increased collective climate action". People who are bummed out and distressed about the state of the environment are more likely to get off their asses and do something than are all those cozy optimists who tell us to put on a happy face because We Live In The Greatest Nation On Earth and Things Will Work Out Somehow. Ballew et al show, at high levels of significance, that the Hope Police have their heads up their asses.

This study, admittedly, did not explicitly deal with Gileads-in-the-making, with the unchecked power of demagogues or the stripping away of Human rights. It deals with our reaction to environmental havoc—and even if that's all it applies to, I'm cool with it. I personally am more concerned about ecocide than Human rights. Treating each other like shit at least keeps our sins in the family—and frankly, given the contempt with which we treat the rest of the biosphere, I'm skeptical that Humans even deserve "rights". Certainly, Trump's victory has pretty much incinerated whatever faint hope we might still have had of turning things around environmentally. The world was already circling the toilet bowl on that front (and would doubtless have continued in that grim decaying orbit even if Harris had prevailed); Trump's victory flushes it down for good.

I'd be very surprised, though, if activism was catalyzed only by distress of the environmental kind. Almost certainly, it also maps onto the social distress currently being experienced even by all those Democrats who, according to polls and campaign priorities, really don't give a shit about climate change (at least, not relative to "the economy" or "the border"—remember how Harris flip-flopped on the whole fracking thing to appeal to her tribe?) Hell, how many pundits have already attributed Trump's victory to the distress-induced activism of Magats who found themselves paying too much for groceries?

So maybe this will be the impetus for something. Maybe "the resistance" will be more than an ineffectual Star Wars call-out this time around.

Maybe. But I'm gonna start pitching in on building this Wall anyway.

And on the plus side, all those environmental dystopias I wrote back in the day are looking increasingly prophetic. Should give my street cred a bit of a boost, until the book banners burn through all that gender smut and start looking further afield…

Except for Putin, curiously. Putin seems to be staying relatively quiet. Almost as if he doesn't need to kowtow, because he knows he'll get what he wants without any embarrassing displays of self-abasement… ↑

A Blast from the Past:

Arpanet.

Internet.

The Net. Not such an arrogant label, back when one was all they had.

Cyberspace lasted a bit longer— but space implies great empty vistas, a luminous galaxy of icons and avatars, a hallucinogenic dreamworld in 48-bit color. No sense of the meatgrinder in cyberspace. No hint of pestilence or predation, creatures with split-second lifespans tearing endlessly at each others’ throats. Cyberspace was a wistful fantasy-word, like hobbit or biodiversity, by the time Achilles Desjardins came onto the scene.

Onion and metabase were more current. New layers were forever being laid atop the old, each free—for a while—from the congestion and static that saturated its predecessors. Orders of magnitude accrued with each generation: more speed, more storage, more power. Information raced down conduits of fiberop, of rotazane, of quantum stuff so sheer its very existence was in doubt. Every decade saw a new backbone grafted onto the beast; then every few years. Every few months. The endless ascent of power and economy proceeded apace, not as steep a climb as during the fabled days of Moore, but steep enough.

And coming up from behind, racing after the expanding frontier, ran the progeny of laws much older than Moore’s.

It’s the pattern that matters, you see. Not the choice of building materials. Life is information, shaped by natural selection. Carbon’s just fashion, nucleic acids mere optional accessories. Electrons can do all that stuff, if they’re coded the right way.

It’s all just Pattern.

And so viruses begat filters; filters begat polymorphic counteragents; polymorphic counteragents begat an arms race. Not to mention the worms and the ‘bots and the single-minded autonomous datahounds—so essential for legitimate commerce, so vital to the well-being of every institution, but so needy, so demanding of access to protected memory. And way over there in left field, the Artificial Life geeks were busy with their Core Wars and their Tierra models and their genetic algorithms. It was only a matter of time before everyone got tired of endlessly reprogramming their minions against each other. Why not just build in some genes, a random number generator or two for variation, and let natural selection do the work?

The problem with natural selection, of course, is that it changes things.

The problem with natural selection in networks is that things change fast.

By the time Achilles Desjardins became a ‘Lawbreaker, Onion was a name in decline. One look inside would tell you why. If you could watch the fornication and predation and speciation without going grand mal from the rate-of-change, you knew there was only one word that really fit: Maelstrom.

Of course, people still went there all the time. What else could they do? Civilization’s central nervous system had been living inside a Gordian knot for over a century. No one was going to pull the plug over a case of pinworms.

—Me, Maelstrom, 2001

*

Ah, Maelstrom. My second furry novel. Hard to believe I wrote it almost a quarter-century ago.

Maelstrom combined cool prognostications with my usual failure of imagination. I envisioned programs that were literally alive— according to the Dawkinsian definition of Life as "Information shaped by natural selection"—and I patted myself on the back for applying Darwinian principles to electronic environments. (It was a different time. The phrase "genetic algorithm" was still shiny-new and largely unknown outside academic circles.)

I confess to being a bit surprised—even disappointed—that things haven't turned out that way (not yet, anyway). I'll grant that Maelstrom's predictions hinge on code being let off the leash to evolve in its own direction, and that coders of malware won't generally let that happen. You want your botnets and phishers to be reliably obedient; you're not gonna steal many identities or get much credit card info from something that's decided reproductive fitness is where it's at. Still, as Michael Caine put it in The Dark Knight: some people just want to watch the world burn. You'd think that somewhere, someone would have brought their code to life precisely because it could indiscriminately fuck things up.

Some folks took Maelstrom's premise and ran with it. In fact, Maelstrom seems to have been more influential amongst those involved in AI and computer science (about which I know next to nothing) than Starfish ever was among those who worked in marine biology (a field in which I have a PhD). But my origin story for Maelstrom's wildlife was essentially supernatural. It was the hand of some godlike being that brought it to life. We were the ones who gave mutable genes to our creations; they only took off after we imbued them with that divine spark.

It never even occurred to me that code might learn to do that all on its own.

Apparently it never occurred to anyone. Simulation models back then were generating all sorts of interesting results (including the spontaneous emergence of parasitism, followed shortly thereafter by the emergence of sex), but none of that A-Life had to figure out how to breed; their capacity for self-replication was built in at the outset.

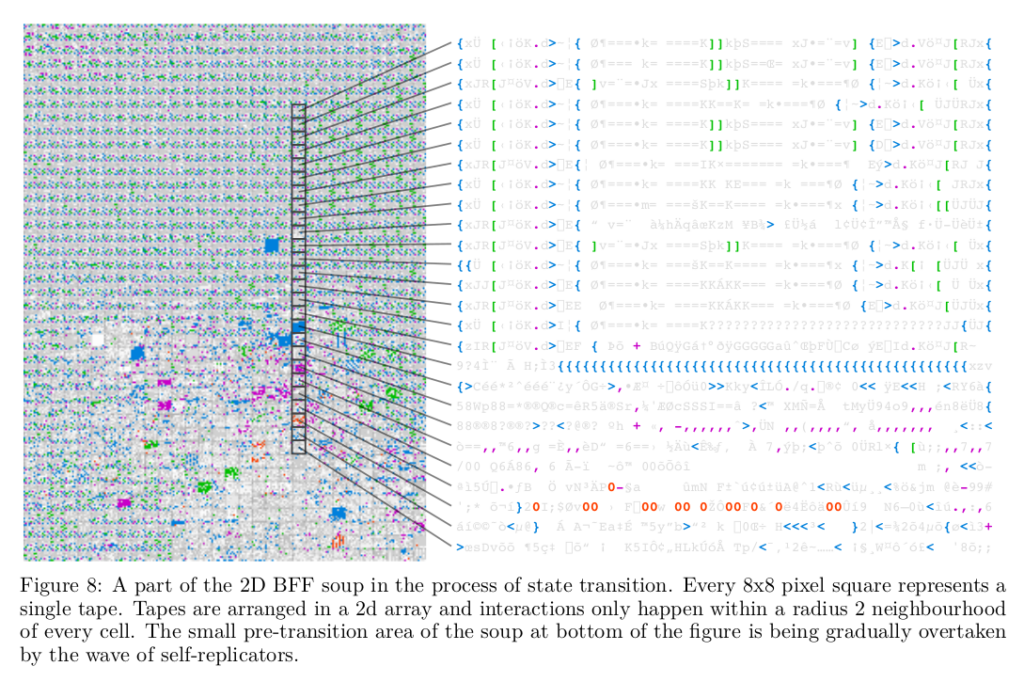

Now Blaise Agüera y Arcas and his buddies at Google have rubbed our faces in our own lack of vision. Starting with a programming language called (I kid you not) Brainfuck, they built a digital "primordial soup" of random bytes, ran it under various platforms, and, well…read the money shot for yourself, straight from the (non-peer-reviewed) arXiv preprint "Computational Life: How Well-formed, Self-replicating Programs Emerge from Simple Interaction"[1]:

"when random, non self-replicating programs are placed in an environment lacking any explicit fitness landscape, self-replicators tend to arise. … increasingly complex dynamics continue to emerge following the rise of self-replicators."

Apparently, self-replicators don't even need random mutation to evolve. The code's own self-modification is enough to do the trick. Furthermore, while

"…there is no explicit fitness function that drives complexification or self-replicators to arise. Nevertheless, complex dynamics happen due to the implicit competition for scarce resources (space, execution time, and sometimes energy)."

For those of us who glaze over whenever we see an integral sign, Arcas provides a lay-friendly summary over at Nautilus, placed within a historical context running back to Turing and von Neumann.

But you're not really interested in that, are you? You stopped being interested the moment you learned there was a computer language called Brainfuck: that's what you want to hear about. Fine: Brainfuck is a rudimentary coding language whose only mathematical operations are "add 1" and "subtract 1". (In a classic case of understatement, Arcas et al describe it as "onerous for humans to program with".) Classic Brainfuck has eight commands; the variant used here has a total of ten (eleven if you count a "true zero" that's used to exit loops). Every other one of the 256 possible byte values is interpreted as data.
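For anyone who'd like to poke at this directly, here's a minimal Python sketch of a Brainfuck-style interpreter where code and data share a single tape, so running a program can rewrite the program. The command set is my reading of the paper's two-head variant, not a transcription of their code. `<` and `>` move head0, `{` and `}` move head1, `+` and `-` change the byte under head0, `.` and `,` copy bytes between the heads, and `[` and `]` loop. Several details are assumptions of mine: both heads start at 0, head moves wrap around the tape, there's an arbitrary step limit, and an unmatched bracket halts execution. The function names are hypothetical too.

```python
from typing import Optional

OPEN, CLOSE = ord("["), ord("]")


def _find_match(tape: bytearray, ip: int, step: int) -> Optional[int]:
    """Scan from the bracket at `ip` in direction `step` (+1 or -1) for its partner."""
    depth = 0
    i = ip
    while 0 <= i < len(tape):
        if tape[i] == OPEN:
            depth += step
        elif tape[i] == CLOSE:
            depth -= step
        if depth == 0:
            return i
        i += step
    return None  # unmatched bracket


def run_bff(tape: bytearray, max_steps: int = 1 << 13) -> int:
    """Run `tape` in place as a self-modifying, two-head Brainfuck variant.

    Code and data are the same bytes. Returns the number of steps executed.
    Assumptions (mine, not necessarily the paper's): both heads start at 0,
    head moves wrap around the tape, and an unmatched bracket halts.
    """
    n = len(tape)
    ip = head0 = head1 = 0
    steps = 0
    while 0 <= ip < n and steps < max_steps:
        op = chr(tape[ip])
        if op == "<":
            head0 = (head0 - 1) % n
        elif op == ">":
            head0 = (head0 + 1) % n
        elif op == "{":
            head1 = (head1 - 1) % n
        elif op == "}":
            head1 = (head1 + 1) % n
        elif op == "-":
            tape[head0] = (tape[head0] - 1) % 256
        elif op == "+":
            tape[head0] = (tape[head0] + 1) % 256
        elif op == ".":
            tape[head1] = tape[head0]  # copy: head0 -> head1
        elif op == ",":
            tape[head0] = tape[head1]  # copy: head1 -> head0
        elif op == "[" and tape[head0] == 0:
            target = _find_match(tape, ip, +1)
            if target is None:
                break
            ip = target  # jump to matching "]"; the increment below steps past it
        elif op == "]" and tape[head0] != 0:
            target = _find_match(tape, ip, -1)
            if target is None:
                break
            ip = target  # jump back to matching "["; re-enter the loop body
        # every other byte value is a no-op, i.e. "data"
        ip += 1
        steps += 1
    return steps
```

The one-byte program `+` is the simplest demonstration of code eating itself. head0 starts on the `+`, so running it increments the instruction itself, and the tape ends up holding `,` instead.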

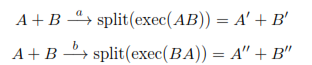

So. Imagine two contiguous 64-byte strings of RAM, seeded with random bytes. Each functions as a Brainfuck program, each byte interpreted as either a command or a data point. Arcas et al speak of

"the interaction between any two programs (A and B) as an irreversible chemical reaction where order matters. This can be described as having a uniform distribution of catalysts a and b that interact with A and B as follows:

Which as far as I can tell boils down to “a” catalyzes the smushing of programs A and B into a single long-string program, which executes and alters itself in the process; then the “split” part of the equation cuts the resulting string back into two segments of the initial A and B lengths.
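Here's that reaction as I read it, sketched in Python: concatenate A and B, run the combined string in place, then cut it back at the original boundary. The `run` argument stands in for whatever interpreter you like, such as a Brainfuck variant. `copy_first_half` is a toy stand-in of mine that behaves like a perfect replicator. The random-pairing `epoch` loop is my assumption about how the soup gets stirred, not the paper's code.

```python
import random
from typing import Callable

Runner = Callable[[bytearray], object]


def interact(a: bytes, b: bytes, run: Runner) -> tuple[bytes, bytes]:
    """Concatenate A and B, execute the result in place, split at the old boundary."""
    tape = bytearray(a + b)  # "smush" the two programs into one long string
    run(tape)                # executing the string may rewrite the string
    cut = len(a)
    return bytes(tape[:cut]), bytes(tape[cut:])


def epoch(soup: list[bytes], run: Runner, rng: random.Random) -> None:
    """Pair programs at random and let each pair interact once (pairing scheme assumed)."""
    order = list(range(len(soup)))
    rng.shuffle(order)
    for i, j in zip(order[::2], order[1::2]):
        soup[i], soup[j] = interact(soup[i], soup[j], run)


def copy_first_half(tape: bytearray) -> None:
    """Toy stand-in for a perfect replicator: overwrite the back half with the front half."""
    half = len(tape) // 2
    tape[half:] = tape[:half]
```

With the toy runner, a single epoch over a soup of random 64-byte programs leaves every pair identical. That's the whole trick: a replicator overwrites its partner with a copy of itself, and a real interpreter just has to stumble onto code that does the same.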

You know what this looks like? This looks like autocatalysis: the process whereby the product of a chemical reaction catalyzes the reaction itself. A bootstrap thing. Because this program reads and writes to itself, the execution of the code rewrites the code. Do this often enough, and one of those 64-byte strings turns into a self-replicator.

The Origin of Life


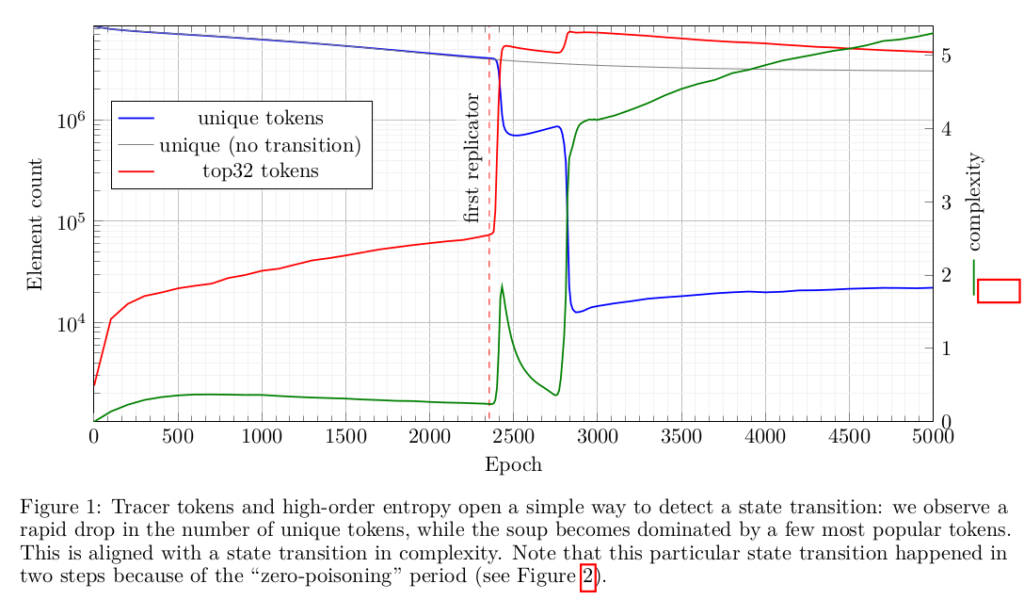

It doesn't happen immediately; most of the time, the code just sits there, reading and writing over itself. It generally takes thousands, millions of interactions before anything interesting happens. Let it run long enough, though, and some of that code coalesces into something that breeds, something that exchanges information with other programs (fucks, in other words). And when that happens, things really take off: self-replicators take over the soup in no time.

What's that? You don't see why that should happen? Don't worry about it; neither do the authors:

"we do not yet have a general theory to determine what makes a language and environment amenable to the rise of self-replicators"

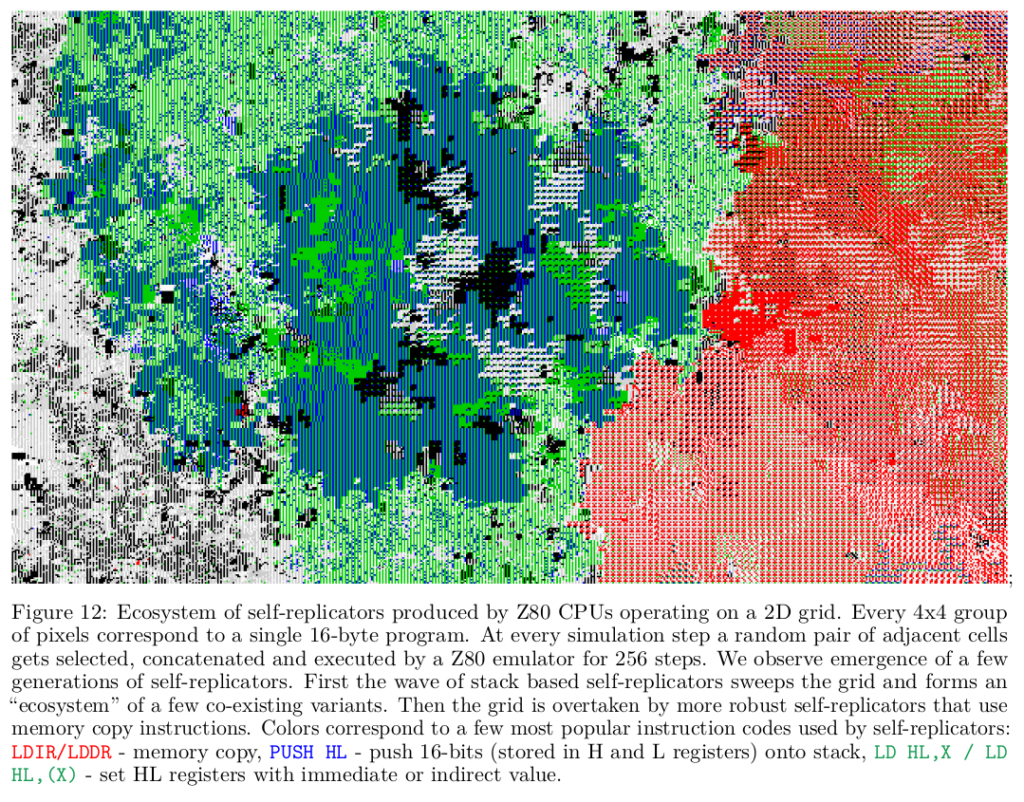

They explored the hell out of it, though. They ran their primordial soups in a whole family of "extended Brainfuck languages"; they ran them under Forth; they tried them out under the classic 8-bit Z80 architecture (the chip inside the ZX-80 that people hold in such nostalgic regard), and under the (even more ancient) 8080 instruction set. They built environments in 0, 1, and 2 dimensions. They measured the rise of diversity and complexity, using a custom metric— "High-Order Entropy"— describing the difference between "Shannon Entropy" and "normalized Kolmogorov Complexity" (which seems to describe the complexity of a system that remains once you strip out the amount due to sheer randomness[2]).
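Here's a rough Python sketch of that metric, for the curious. The Shannon term is the per-byte entropy of the byte frequencies. Kolmogorov complexity itself can't be computed, so I use compressed size in bits per byte as a stand-in. The choice of zlib as the compressor is my assumption; the paper's exact estimator may differ.

```python
import math
import random
import zlib
from collections import Counter


def shannon_entropy_bits(data: bytes) -> float:
    """Per-byte Shannon entropy of the byte-value distribution (0 to 8 bits)."""
    n = len(data)
    return -sum((c / n) * math.log2(c / n) for c in Counter(data).values())


def normalized_complexity_bits(data: bytes) -> float:
    """Compressed size in bits per input byte: a crude stand-in for Kolmogorov complexity."""
    return 8 * len(zlib.compress(data, 9)) / len(data)


def high_order_entropy(data: bytes) -> float:
    """Shannon entropy minus the compression-based complexity estimate."""
    return shannon_entropy_bits(data) - normalized_complexity_bits(data)


structured = bytes(range(256)) * 64                   # every byte value, rigidly repeating
noise = random.Random(0).randbytes(len(structured))   # same length, no structure
print(f"structured: {high_order_entropy(structured):.2f} bits/byte")
print(f"noise:      {high_order_entropy(noise):.2f} bits/byte")
```

Random noise scores near zero because it's both high-entropy and incompressible. The repeating pattern scores high: its byte frequencies look perfectly random, but the compressor sees straight through it.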

They did all this, under different architectures, different languages, different dimensionalities—with mutations and without—and they kept getting replicators. More, they got different kinds of replicators, virtual ecosystems almost, competing for resources. They got reproductive strategies changing over time. Darwinian solutions to execution issues, like "junk DNA" which turns out to serve a real function:

"emergent replicators … tend to consist of a fairly long non-functional head followed by a relatively short functional replicating tail. The explanation for this is likely that beginning to execute partway through a replicator will generally lead to an error, so adding non-functional code before the replicator decreases the probability of that occurrence. It also decreases the number of copies that can be made and hence the efficiency of the replicator, resulting in a trade-off between the two pressures."

I mean, that looks like a classic evolutionary process to me. And again, this is not a fragile phenomenon; it's robust across a variety of architectures and environments.

But they're still not sure why or how.

They do report one computing platform (something called SUBLEQ) in which replicators didn't arise. They suggest that any replicators which could theoretically arise in SUBLEQ would have to be much larger than those observed in other environments, which they propose could be a starting point towards developing "a theory that predicts what languages and environments could harbor life". I find that intriguing. But they're not even close to developing such a theory at the moment.
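For comparison, SUBLEQ is a one-instruction machine. Each instruction is three cells, `a b c`, meaning "subtract mem[a] from mem[b]; if the result is zero or negative, jump to c, otherwise continue with the next instruction." Below is a minimal Python sketch of the textbook version. Two details are my own assumptions: addresses wrap modulo the memory size, and a jump outside the program halts. The variant the authors actually ran may differ in its details.

```python
def run_subleq(memory: list[int], max_steps: int = 1000) -> list[int]:
    """Run a textbook SUBLEQ machine on a copy of `memory`; return the final state.

    Each instruction is three cells a, b, c: mem[b] -= mem[a]; if the result
    is <= 0, jump to c, otherwise continue at the next instruction (pc + 3).
    Assumptions (mine): a and b wrap modulo the memory size, and a jump target
    with no complete instruction there (e.g. -1) halts the machine.
    """
    mem = list(memory)
    n = len(mem)
    pc = 0
    for _ in range(max_steps):
        if pc < 0 or pc + 2 >= n:
            break  # no complete instruction at pc: halt
        a, b, c = mem[pc] % n, mem[pc + 1] % n, mem[pc + 2]
        mem[b] -= mem[a]
        pc = c if mem[b] <= 0 else pc + 3
    return mem
```

`run_subleq([3, 3, -1, 7])` subtracts cell 3 from itself, gets zero, and jumps to -1 (halt), leaving `[3, 3, -1, 0]`. Even copying a single cell takes several instructions (clear the destination, then subtract twice through a scratch cell). That may give some intuition for why SUBLEQ replicators would have to be comparatively long.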

Self-replication just—happens.

It's not an airtight case. The authors admit that it would make more sense to drill down on an analysis of substrings within the soup (since most replicators are shorter than the 64-byte chunks of code they used), but because that's "computationally intractable" they settle for "a mixture of anecdotal evidence and graphs"—which, if not exactly sus, doesn't seem especially rigorous. At one point they claim that mutations speed up the rise of self-replicators, which doesn't seem to jibe with other results suggesting that higher mutation rates are associated with a slower emergence of complexity. (Granted, "complexity" and "self-replicator" are not the same thing, but you'd still expect a positive correlation.) As of this writing, the work hasn't yet been peer-reviewed. And finally, a limitation not of the work but of the messenger: you're getting all this filtered through the inexpert brain of a midlist science fiction writer with no real expertise in computer science. It's possible I got something completely wrong along the way.

Still, I'm excited. Folks more expert than I seem to be taking this seriously. Hell, it even inspired Sabine Hossenfelder (not known for her credulous nature) to speculate about Maelstromy scenarios in which wildlife emerges from Internet noise, "climbs the complexity ladder", and runs rampant. Because that's what we're talking about here: digital life emerging not from pre-existing malware, not from anarchosyndicalist script kiddies—but from simple, ubiquitous, random noise.

So I'm hopeful.

Maybe the Internet will burn after all.

-

The paper cites Tierra and Core Wars prominently; it's nice to see that work published back in the nineties is still relevant in such a fast-moving field. It's even nicer to be able to point to those same call-outs in Maelstrom to burnish my street cred. ↑

-

This is a bit counterintuitive to those of us who grew up thinking of entropy as a measure of disorganization. The information required to describe a system of randomly-bumping gas molecules is huge because you have to describe each particle individually; more structured systems—crystals, fractals—have lower entropy because their structure can be described formulaically. The value of "High-order" Entropy, in contrast, is due entirely to structural, not random, complexity; so a high High-Order Entropy value means more organizational complexity, not less. Unless I'm completely misreading this thing. ↑

Time: 5:30

Scroll: "yuen" by Kitaro Nishida

Bowl: 9th Ohi Chozaemon

Tea: Hoshinoen "Hojyu"

Had a nice bowl of thick tea this chilly morning.

Nishida was the founder of the Kyoto School of Philosophy and one of the founding members of the Chiba Institute of Technology. The scroll says "yuen", which means far and distant in time and space, or eternity.

Tea practice has made me much more aware of time: many of the utensils we use are hundreds of years old, and the scrolls and utensils we use today will likely continue to be used for hundreds of years more. Holding an ancient bowl, one can imagine when it was made, the people who handled it, and the society and history surrounding them. Then it is easy to imagine the bowl in the future, along with the people who will hold it and the cultures they will live in. Then keep stretching time further into the past and the future until you envision eternity.

Preamble: OK, I lied. Said last time that this time was gonna be about science, and no more of this fluffy promo bullshit. And I meant well. But this time, the fluffy promo is about me. And it's cool. And more to the point, I don't have to spend hours doing research on things I don't actually know much about, so it's fast. When you're writing to deadline, fast is good.

Brag 1.

So check this out:

I have a small hand in this universe. I'm developing some of its Lore. I am not allowed to give you any details, but none of you will be surprised to learn that said Lore contains certain, shall we say, Darwinian elements.

I think it's going to rock.

Brag 2.

There’s something I'm allowed to share even fewer details about than EVE. In fact, I'm not even allowed to say that I am involved in it, although the trailer dropped earlier than EVE’s and the curtain rises sooner. I am allowed to paste promo copy from a certain corporate entity about a certain project—in effect, to place someone else's generic ad copy onto the 'Crawl without explaining its relevance. This is an opportunity I must regretfully decline.

A shame, though. From what I've seen, it's gonna be awesome. Stay tuned.

Brag 3.

And this—this—may be the least significant item in terms of pop culture, but it is, by far, the closest to my heart. It is an honor that generally accrues only to the likes of Gary Larson, Greta Thunberg, and Radiohead.

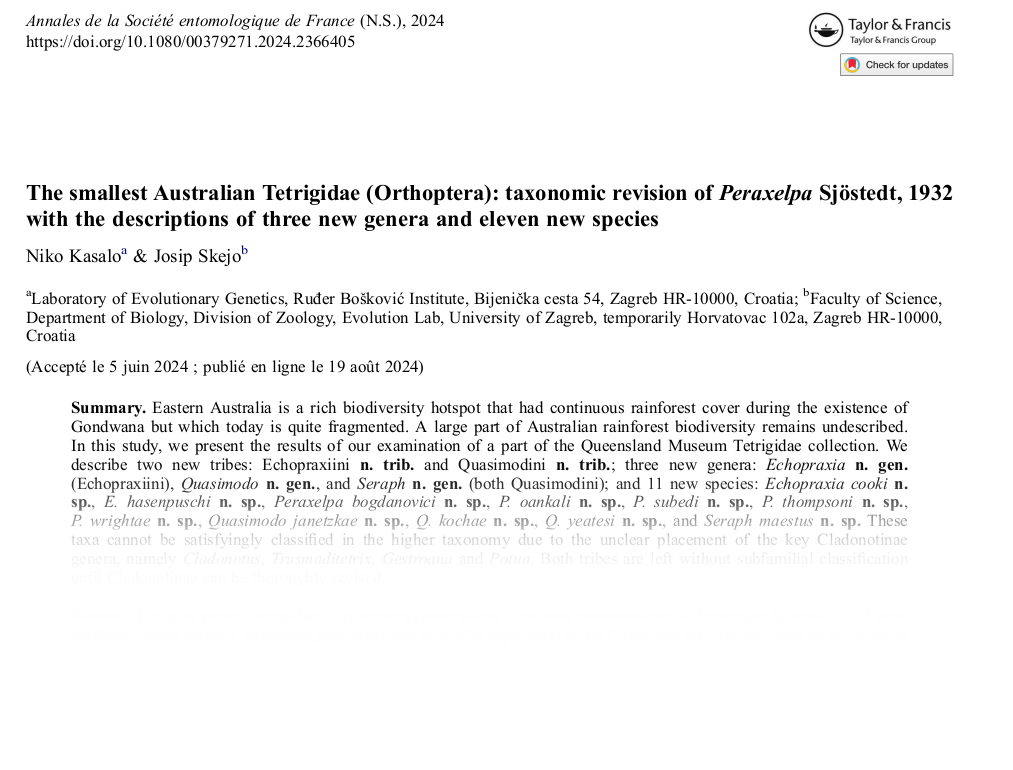

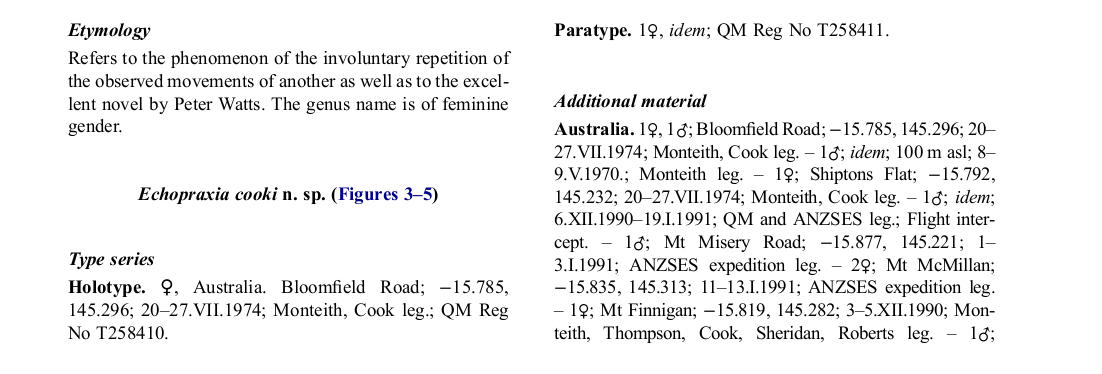

Niko Kasalo & Josip Skejo have named a tribe of Australian Pygmy Grasshoppers after me.

I mean, not me personally, but one of my novels. The tribe is Echopraxiini; the genus is Echopraxia; the species is E. hasenpuschi. And should any of you point out that correlation is not causation, and that there might be any number of reasons why someone might name a taxon after the neurological malady without even knowing about my novel, I've got you covered:

The paper in its entirety is paywalled, but I have uploaded a copy for your forensic edification just in case you think I'm full of shit. After reading it you may still conclude that I faked the whole manuscript, which offhand I cannot disprove. But if I did, you gotta admit I did a bang-up job.

Anyway: now you have some idea of the stuff I've been doing when I haven’t been writing Echopraxia Omniscience. I haven't just been lying around jerking off all these months.

Well, not exclusively.

Next up: science. Definitely[1].

Probably. ↑

R.U. Sirius & Shira Chess are pleased (and a little frightened) to announce that we have contracted with Strange Attractor Press to publish Freaks in the Machine: Mondo 2000 in Late 20th Century Tech Culture. With a foreword by Grant Morrison.

Before the entire world was online, before it was divided into social media enclaves and corporate-sanctioned “likes,” before even WIRED magazine, there was Mondo 2000. Published from 1989 to 1997, Mondo reached a peak distribution of about 100,000, but the magazine was even more influential than its distribution implied. M2K was raved about by 90s media outlets, consumed by early tech culture, and demonstrated a transition from the literary cyberpunk style of Gibson and Vinge into an aspirational cyberpunk aesthetic that leaked into the material world. In 1994, Douglas Rushkoff described Mondo as the "voice of cyberculture."

Mondo was published by a rag-tag team of psychonauts and counterculture weirdos based out of a Berkeley Hills mini-mansion, where the parties and strange goings-on were almost as legendary as the magazine itself. The Mondo publishers — editor-in-chief R.U. Sirius and Domineditrix Queen Mu — were not technologists. Yet their Bay Area publication created a desire for the technological zeitgeist of the coming millennium. In turn, the high (and sometimes low) weirdness of Mondo established a kaleidoscopic onramp that rocketed a lot of early adopter mutants (or "Mondoids") onto the so-called “information superhighway” of the late 90s and early 00s.

Part memoir, part history, and part critical analysis, Freaks in the Machine recounts the magazine’s tumultuous history, its strange cast of characters, and its irreverent content.

"Mondo was the next bold stage in an evolutionary advance where street cred and an underground ethos would come with the potential to appeal to a mainstream audience, to wake up the straight world, announcing its arrival like a flare over the horizon so everyone could see and know this was the signal they'd been waiting for." -Grant Morrison

R.U. Sirius is still best known as the editor-in-chief of the great 1990s cyberculture magazine Mondo 2000, although some historians insist that he should be most remembered for his skills as a second baseman in the West Islip, New York little league, while others have raved about the infrequent and disturbing live appearances of the band Mondo Vanilli. [Note from Shira: There were no historians.] He has written for Time, Rolling Stone, Salon, WIRED and a bunch of other publications that are stored on the tip of his tongue. Books include Counterculture Through The Ages (with Dan Joy), Design for Dying (with Timothy Leary) and Cyberpunk Handbook (with St. Jude & Bart Nagel).

Shira Chess is an Associate Professor in Entertainment & Media Studies at the University of Georgia and a recovering game studies scholar. She is the author of several books on digital culture and video games, including the forthcoming MIT Press book The Unseen Internet: Conjuring the Occult in Digital Discourse. She joins this project to add context and history, to referee any nonsense, to try to make sense of Sirius's lunatic ravings, and to excise any excesses of cringe.

The post Freaks in the Machine: Mondo 2000 in Late 20th Century Tech Culture. appeared first on Mondo 2000.

More people came to Carol's funeral than there were seats in the crematorium chapel: our families, her friends and mine, some of whom had travelled a long way. The funeral directors, P B Wright and Sons, took care of the arrangements kindly and professionally. Catriona Miller, the humanist celebrant, conducted the service and delivered a warm and accurate tribute to Carol. I spoke about Carol's life with me, and Michael spoke for himself and Sharon about Carol as a mother. Two hymns were sung that had also been sung at our wedding. The closing music was a song Carol had played countless times: 'Stars' by Simply Red.

The Order of Service booklet featured a fine recent photograph of Carol by Michael, and some of Carol's own photographs of Gourock's sunset skies.

The collection was for two charities that Carol had actively supported: the RNLI, and Medical Aid for Palestinians. It raised £1138.50, which yesterday I rounded up to £1200 and divided evenly into two donations in her memory.

Many, many thanks to all who attended, and to all who contributed so generously, at the collection and online. Thanks also for the many messages and cards of sympathy, for which I and all the family are deeply grateful.

Carol Ann MacLeod, 11 February 1952 to 16 August 2024

Carol, my beloved wife whom I met in 1979 and married in 1981, died on Friday 16 August.


She was the centre of my world, and she's gone.

There will be a funeral service at Greenock Crematorium, on Monday 2 September, at 2 pm, to which all family and friends are invited. Family flowers only please. There will be a retiral collection in aid of Carol's favourite charities.

I know, I know. Two pimpage posts in a row. Not my usual shtick, and I assure you not any kind of new normal; the stars just aligned that way this time around. For what it's worth, next time I expect to be talking about Darwinian evolution in digital ecosystems, complete with a tortured retcon arguing that I saw it all coming two decades ago with Maelstrom.

You know. The classics.

Foreword One:

Artist, children's author, musician, video maestro—not to mention good friend and RacketNX nemesis—Steven Archer is at it again. I've sung his praises before on this 'crawl, even written a story based on one of his songs. I'm not the only one to appreciate the man's work, even though darkwave grunge is about as far as you can get from my usual proggy aesthetic; he's worked with entities as diverse as NASA and Alan Parsons. Neil Gaiman lauded his skills while Steven was still a student (granted, that endorsement has not aged as well as he might have hoped).

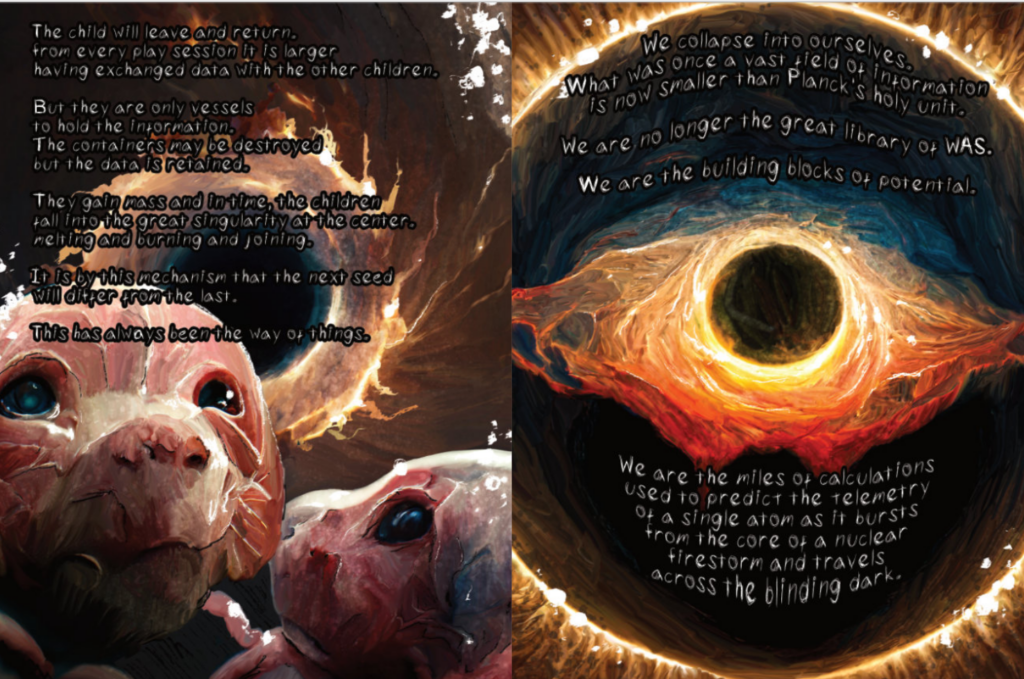

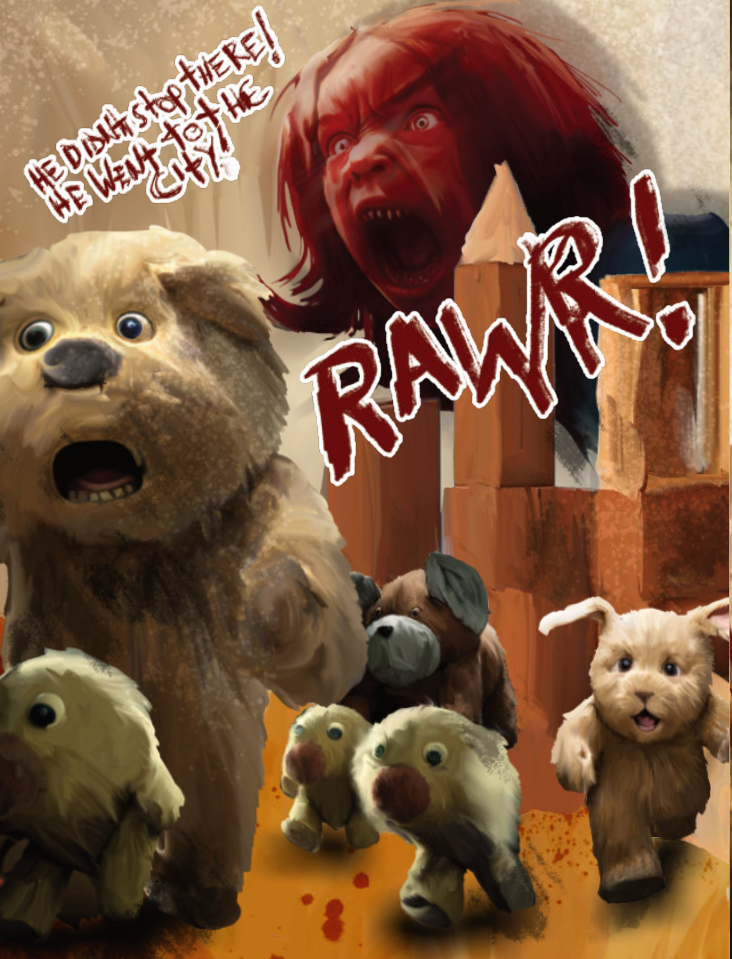

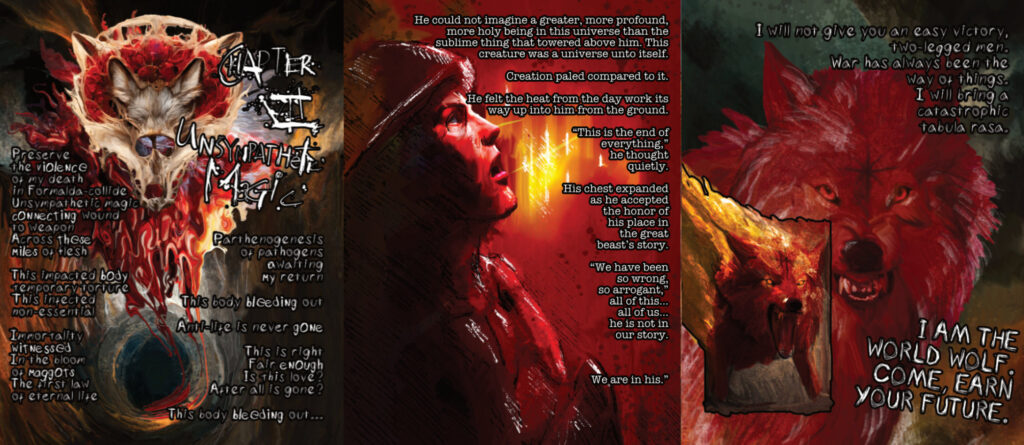

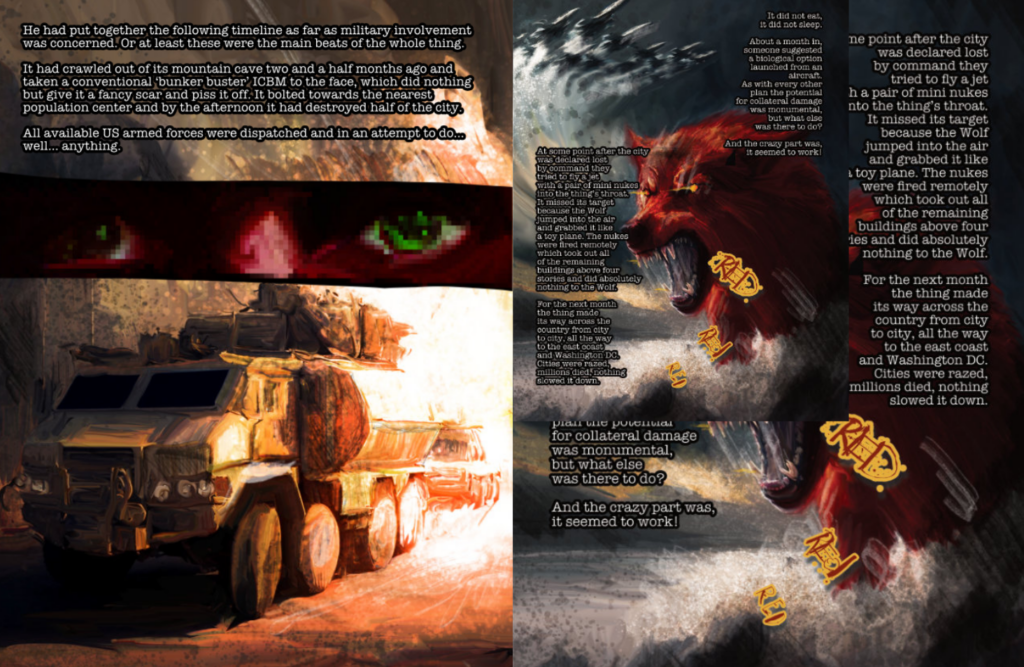

This time around he's released a jagged graphic novel—a companion piece to Stoneburner's Apex Predator album, though by no means do you have to experience one to appreciate the other— about canine deities who generally exist outside time and space but who, here in what we call reality, still crush cities underfoot like any self-respecting kaiju when they get pissed. Unlike last post's Alevtina and Tamara, there's no doubt that Tooth and Claw is a proper graphic novel. It's got a definite and coherent and very long story arc: it starts at the beginning of time (it's a creation myth at heart) between "waves of energy so far apart you cannot call them heat", and it ends in pretty much the same place. (Well, technically it ends with Nicolas Cage starring in a Ridley Scott movie about a giant wolf laying waste to the United States, but that's just part of the epilogue).

The art ranges all over the place, from saturated oils that bleed across the page to joyful childlike scribbles to even that A-word nobody uses any more for fear of provoking backlash. The verbiage, as usual, is a delight—"every species learns by breaking the things around them", "there goes God, making the scientists look stupid again", "I am what is left after the stars go out". Vignettes unfold in singularities and coffee shops and frozen steppes and burning cities. The vibe ranges from Crichton to Call of Duty to Indigenous Creation Myth by way of Lee Smolin. Conspiracy theorists rage on the Internet. A girl on her sixth birthday reenacts Armageddon with her stuffed animals. Saturn's Rings turn out to be the skid mark of an ancient deity slingshotting en route to earth. Soldiers just follow orders; scientists try to figure out how something the size of a mountain gets enough to eat. It's really good.

I wrote the Foreword. That's pretty good too.

You can see the excerpts on this page. View the art, read the captions: a small taste, nothing more.

If you fancy a whole meal, here's where you get it.

Foreword Two

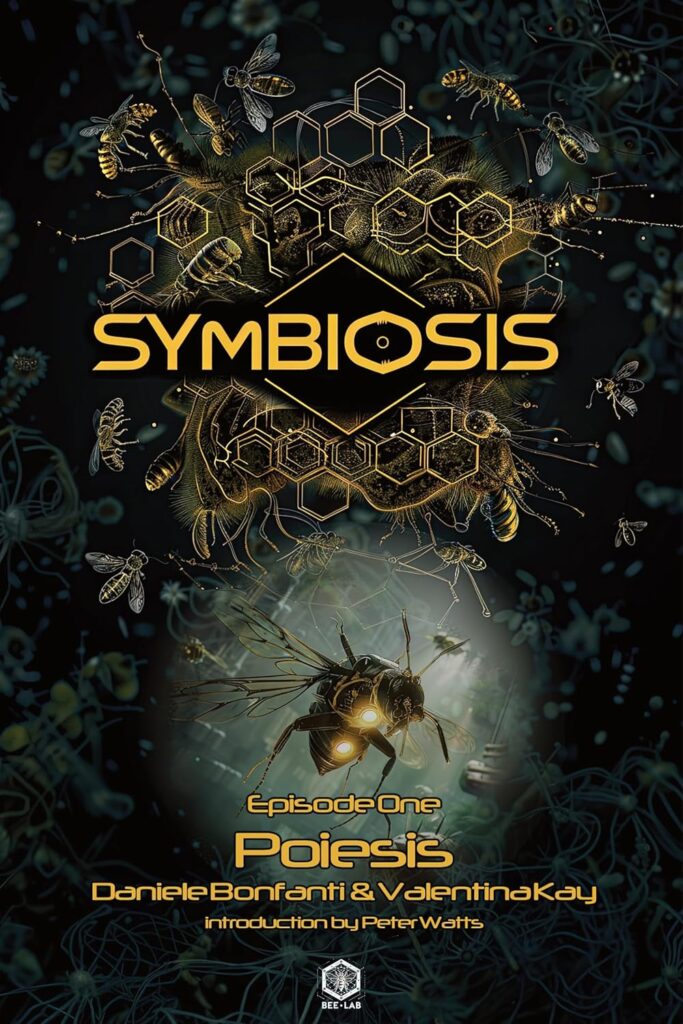

"Will the explorers manage to escape the crazed thing and fight their way back to the Endurance?" asks the back-jacket text for the batshit novella Poiesis, then goes on to answer itself:

"Probably, because this is Episode One of a saga.

"But hey, you never know."

Which gives you a sense of the attitude that indie coauthors Valentina Kay and Daniele Bonfanti bring to their new venture: a splatterspace epic of indeterminate length, released one standalone chapter at a time, like some unholy love child of— well, in the Foreword (yeah, I wrote one for this too) I describe it as something you might get if Sam Peckinpah and Quentin Tarantino collaborated on an episode of Doctor Who with Douglas Adams acting as creative consultant. I suppose that's as good a description as any. Poiesis plays with some very big ideas (a title like that, how could it not?) but it doesn't take them—or itself—too seriously. One of the series' protagonists is a superintelligent swarm of bees who's romantically involved with a sapient plant (the whole pollination thing, you understand). The good ship Endurance's military muscle consists of a couple of cheerful jarheads to whom getting a limb blown off is all in a day's work, and whose considerable arsenals include a gun that fires weaponized superbacteria the size of cocktail wienies. They all tool around the cosmos in a ship that, on the inside at least, looks like a seaside Mediterranean village, and they live in a reality formed by "the cognitions in the mind of the Universe actualized in perceptions"— which might have a familiar ring to anyone who's encountered the work of Bernard Kastrup. (In fact, this whole dripping-viscera-laden first chapter revolves around questions of AI and consciousness in a way that suggests (to me, anyway) that Kay and Bonfanti also have a passing familiarity with Penrose and Hameroff's Orch OR hypothesis.)

The entire epic goes by the title Symbiosis. Only the first installment is out, so I don't know where it'll end up. But I do like the way it begins.

Ten Years Gone

I've done a fair number of podcasts over the years. Hell, I've done eight or nine in just the past year, two of those with Tales from the Bridge— on which I seem to have become a semiregular, and whose crew got me onto a panel at Toronto's FanExpo back in 2021 (an event to which, curiously, I have never been invited back. This might have something to do with my gleeful endorsement of a video clip I played off the top, in which one character addresses the self-righteous environmentalism of another by asking why she'd had a child if she cared about the environment so much, itemizing the enormous impacts that first-world reproduction inflicts on ol' Earth, and offering to slit her sprog's throat to help redress the imbalance. The young parents sitting in the front row with their toddler stormed out before I'd even reached the good part.)

I don't usually pimp such appearances— partly because that's the podcasters' job, and partly because I don't want to be one of those people forever thumping their tubs about every minor appearance as though it were somehow on a par with discovering life on Enceladus. But I seem to be on a roll here anyway, and this latest release from the Bridgers—just a few weeks old—isn't so much an interview between podcasters and their guests as it is a long-overdue catch up between a couple of buddies who haven't seen each other in over a decade.

Richard Morgan and I were exchanging emails as colleagues and mutual fans for a couple of years before the people at Crytek put us in competition with each other for the Crysis 2 gig. That was when we first met in the flesh, over in Germany—and where we reunited a couple of years later, to work on another game that never made it onto the market. (That was probably just as well, actually. Certain aspects of that project encouraged a sort of blurring of game and reality in a way that might have provoked, ahem, unfortunate behaviors among those with an infirm grip on the latter.)

We hit it off. We were a perfect fit for that whole arguing-ideas-over-beers thing that I've missed so much since I left academia. The man also proved his worth when he waded into the fray over the Requires Hate debacle—a battle from which any number of self-proclaimed "friends" slunk away, tails between legs, muttering something about not wanting to antagonize the Twitter crowd. Richard didn't care about any of that shit. He called it as he saw it.

But like I say, that was over ten years ago. Barring the occasional email, we haven't been in touch since—until Tales from the Bridge got us together to reminisce about the old days. They probably got more than they bargained for; at least, they got more than what they could fit into one podcast. So what I'm pimping here is only Part One. (Last-minute update: shit, Part Two's out there now as well. Damn. I gotta pay more attention to deadlines.)

Honestly, I don't know how good it is. I don't know how interesting you'll find it. I kind of stopped thinking in those terms at the first Duuude! It was beers and ideas, albeit without the beers. It was two old friends catching up.

Arthur Jafa once pointed out that Eric Clapton's "Layla" was not written for Clapton fans; it was for Pattie Boyd[1]. Others were welcome to listen in, though[2]. Maybe this conversation—in a much smaller, much-less-influential way— is something like that.

I, for one, had a blast.

1. https://en.wikipedia.org/wiki/Layla

2. By way of context, AJ was drawing parallels to his own art: he's in conversation with American Black culture, he's not talking to us white folks. But he doesn't mind if we eavesdrop.

My Glasgow Worldcon Schedule

As some of you may know, I'm a Guest of Honour at the Glasgow Worldcon. I haven't said enough about that here, I know. I'm well chuffed about it, needless to say. Here are the times and places where you can be sure to find me.

Autographing: Ken MacLeod, Thursday 8 August 2024, 13:00 GMT+1, Hall 4 (Autographs)

Opening Ceremony, Thursday 8 August 2024, 16:00 GMT+1, Clyde Auditorium

Morrow's Isle - Opera, Thursday 8 August 2024, 20:00 GMT+1, Clyde Auditorium

Iain Banks: Between Genre and the Mainstream, Friday 9 August 2024, 11:30 GMT+1, Alsh 1

Luna Press Book Launch Party, Friday 9 August 2024, 13:00 GMT+1, Argyll 2

Guest of Honour Interview: Ken MacLeod, Friday 9 August 2024, 16:00 GMT+1, Lomond Auditorium

Table Talk: Ken MacLeod, Saturday 10 August 2024, 11:30 GMT+1, Hall 4 (Table Talks)

The Making of Morrow's Isle - An Opera, Saturday 10 August 2024, 14:30 GMT+1, Argyll 2

NewCon Press Book Launch, Saturday 10 August 2024, 16:00 GMT+1, Argyll 3

The Politics of Modern Scottish SF, Saturday 10 August 2024, 20:30 GMT+1, Castle 1

Reading: Ken MacLeod, Sunday 11 August 2024, 10:00 GMT+1, Castle 2

Autographing: Ken MacLeod, Sunday 11 August 2024, 11:30 GMT+1, Hall 4 (Autographs)

An Ambiguous Utopia: 50 Years of Ursula K. Le Guin's The Dispossessed, Sunday 11 August 2024, 14:30 GMT+1, Meeting Academy M1

2024 Hugo Awards Ceremony, Sunday 11 August 2024, 20:00 GMT+1, Clyde Auditorium

Stroll with the Stars - Monday, Festival Park, Monday 12 August 2024, 09:00 GMT+1, Outside Crowne Plaza

Writing Future Scotland, Monday 12 August 2024, 13:00 GMT+1, Lomond Auditorium

#

Interference patterns from LED street lighting create pixelated shadows.

Early in 2024 I started spending time with Barb Ash, whom I met at Los Gatos Coffee Roasting. Being with Barb makes me a lot happier than I’ve been for the last year and a half. I’m glad I met her. In May, we went ahead and did a trip to England together, spending […]

The post England with Barb first appeared on Rudy's Blog.

June 23, 2024. Reading my anti-gun "Big Germs" story at the SF in SF meeting. In memory of Terry Bisson. And huge thanks to sound wizard Rusty Hodge. Press the arrow below to play "Big Germs.” We’re using the new and improved .m4a sound file format instead of the old .mp3. If you have a […]

The post Podcast #115. "Big Germs" first appeared on Rudy's Blog.

(As usual, click on any of the following images to embiggen. Although I really shouldn’t have to be telling anyone that.)

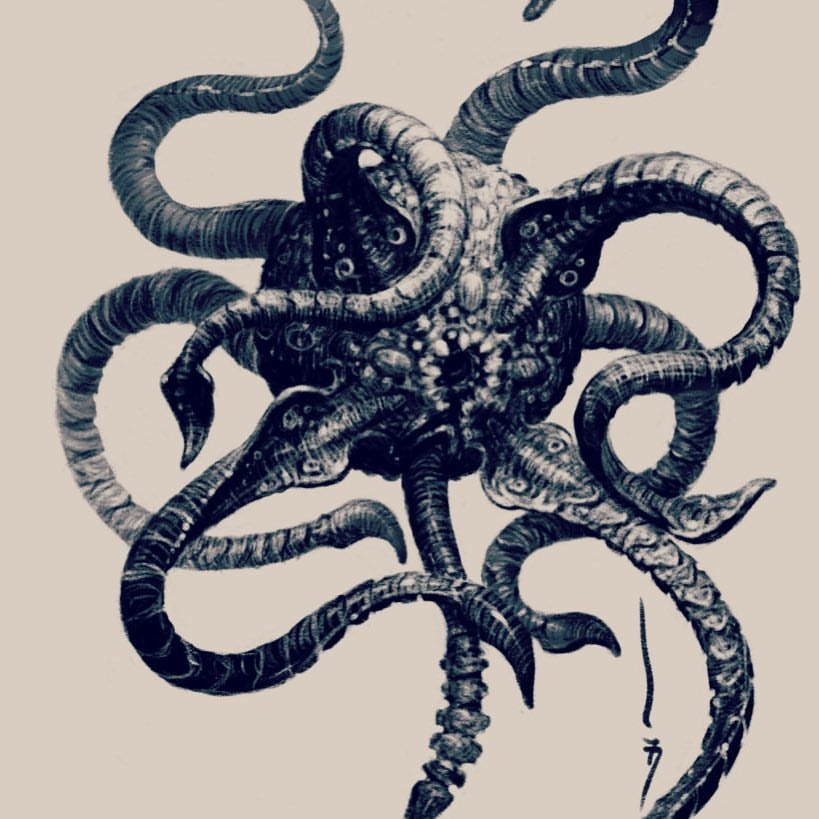

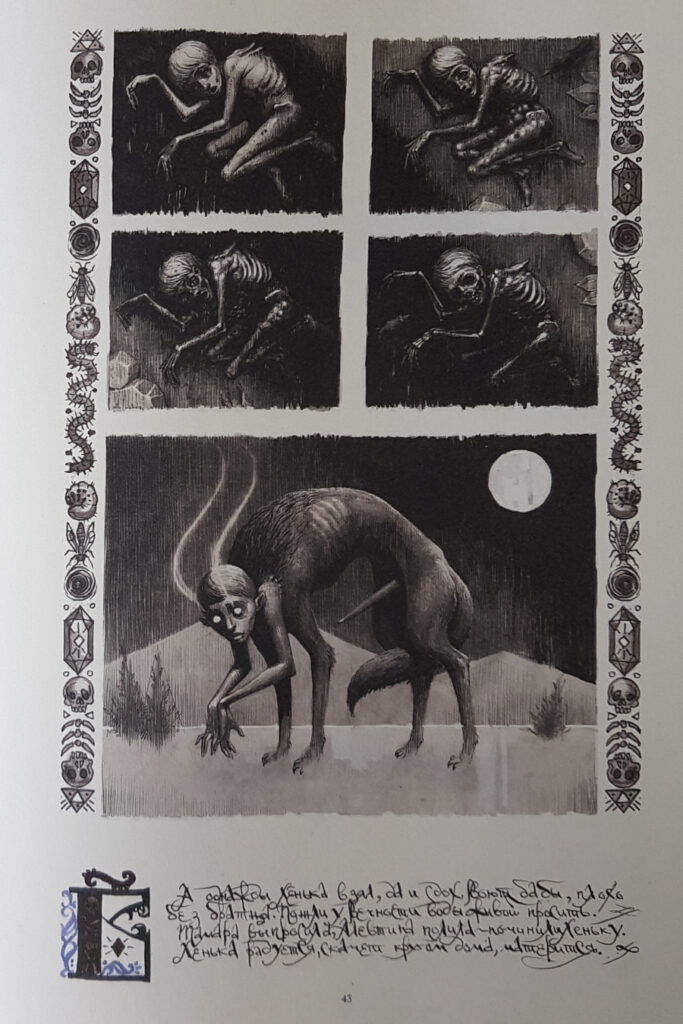

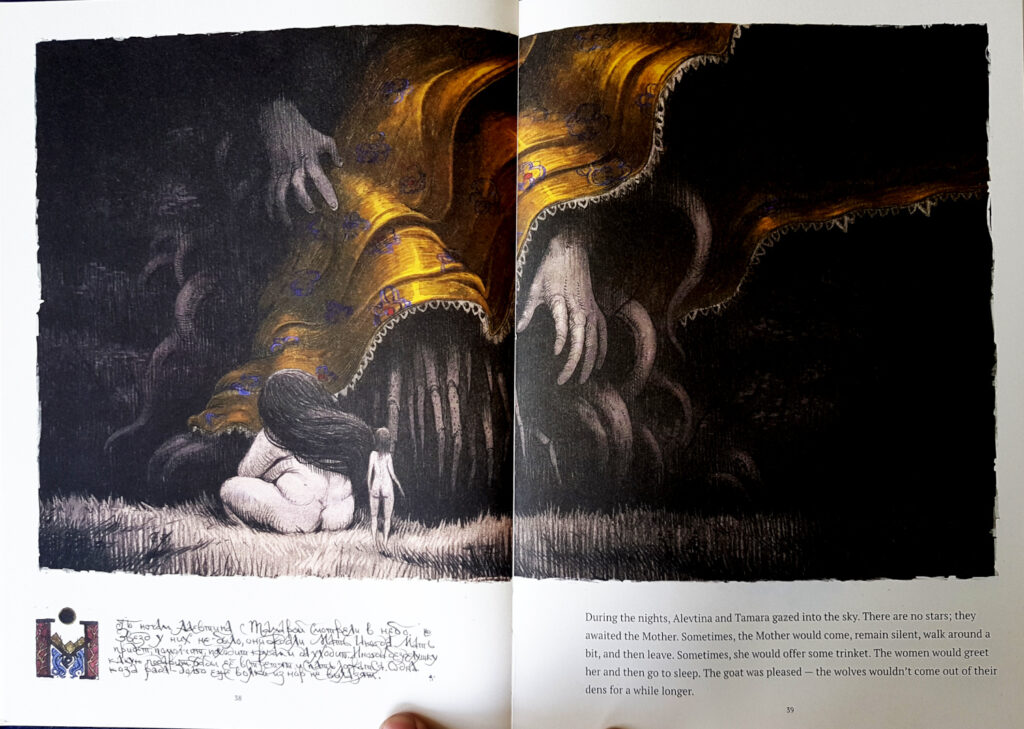

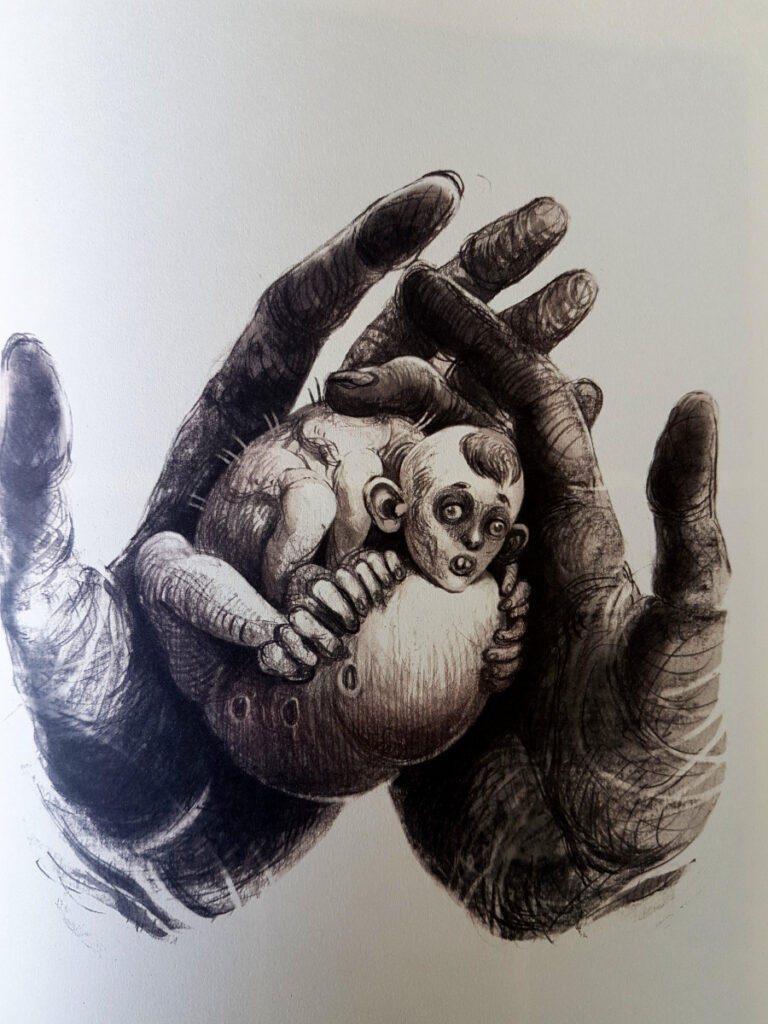

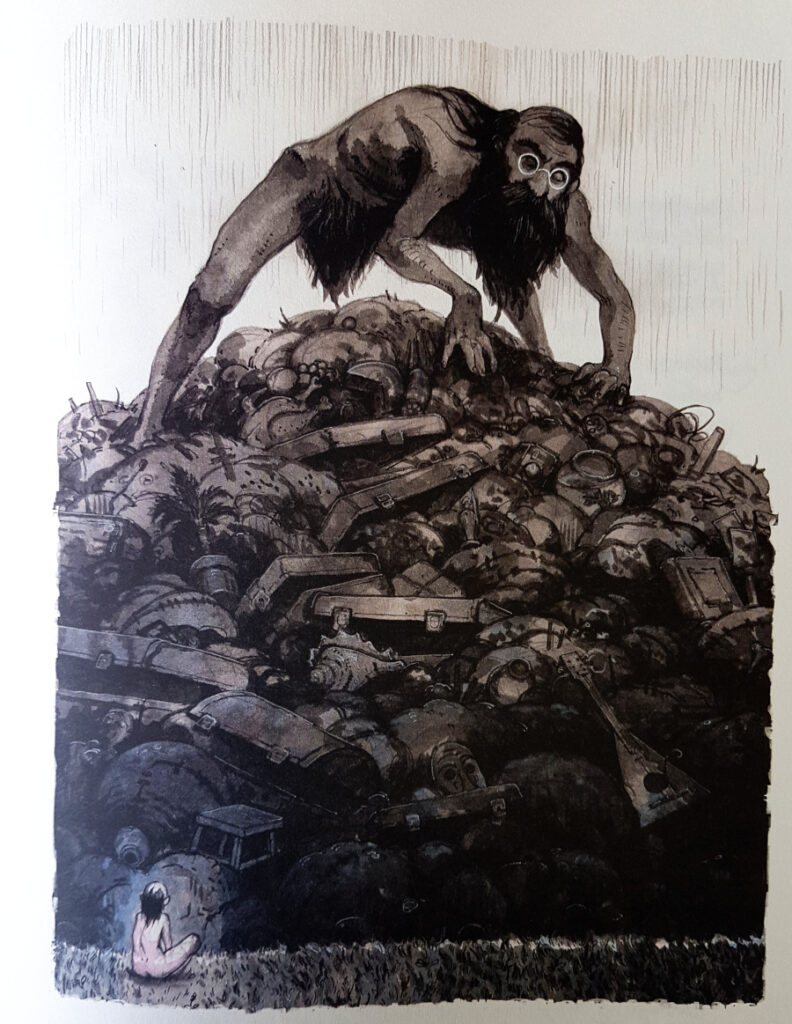

You might have seen a dude by the name of Dimitry SkoLzki hanging around the gallery hereabouts. He did these distinctive black-and-white sketches—they have an almost wood-cut vibe—inspired by characters and events in Blindsight (and later, Echopraxia). They impressed my Chinese publishers so much they bought the rights for their own Blindopraxia imprints.

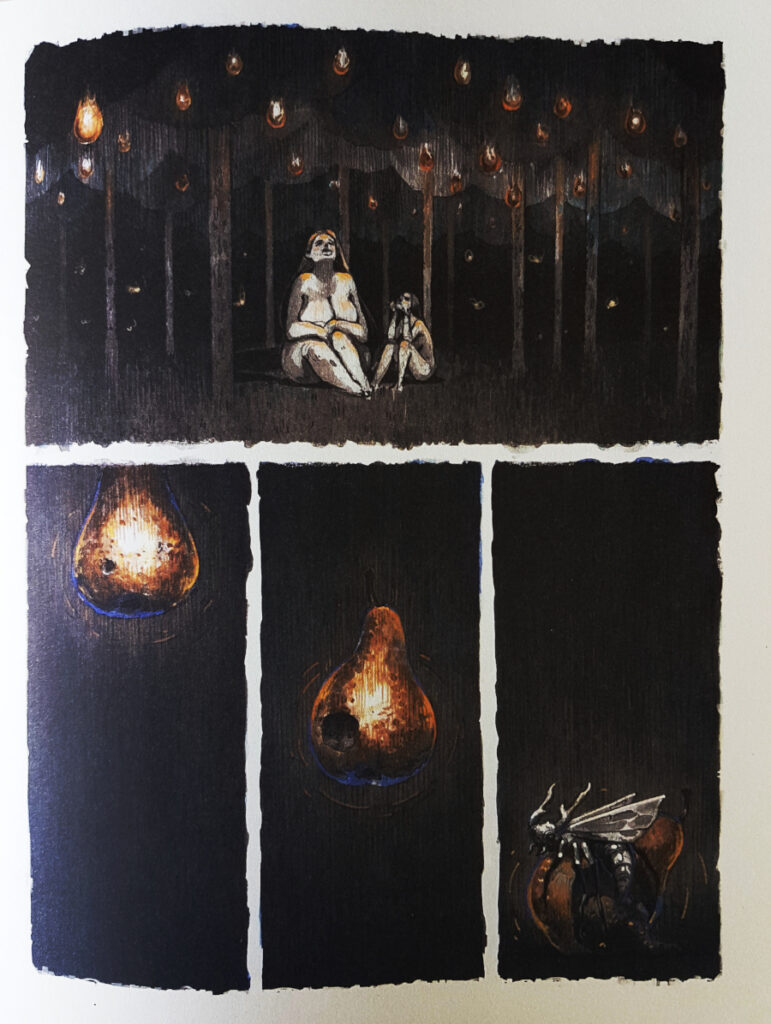

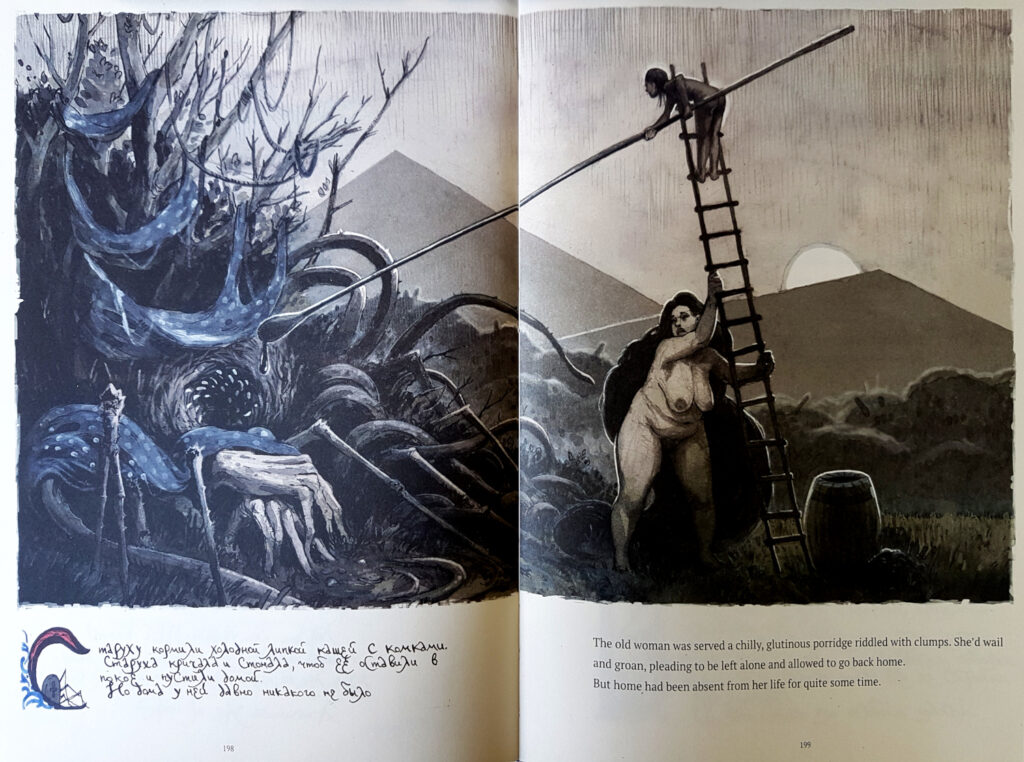

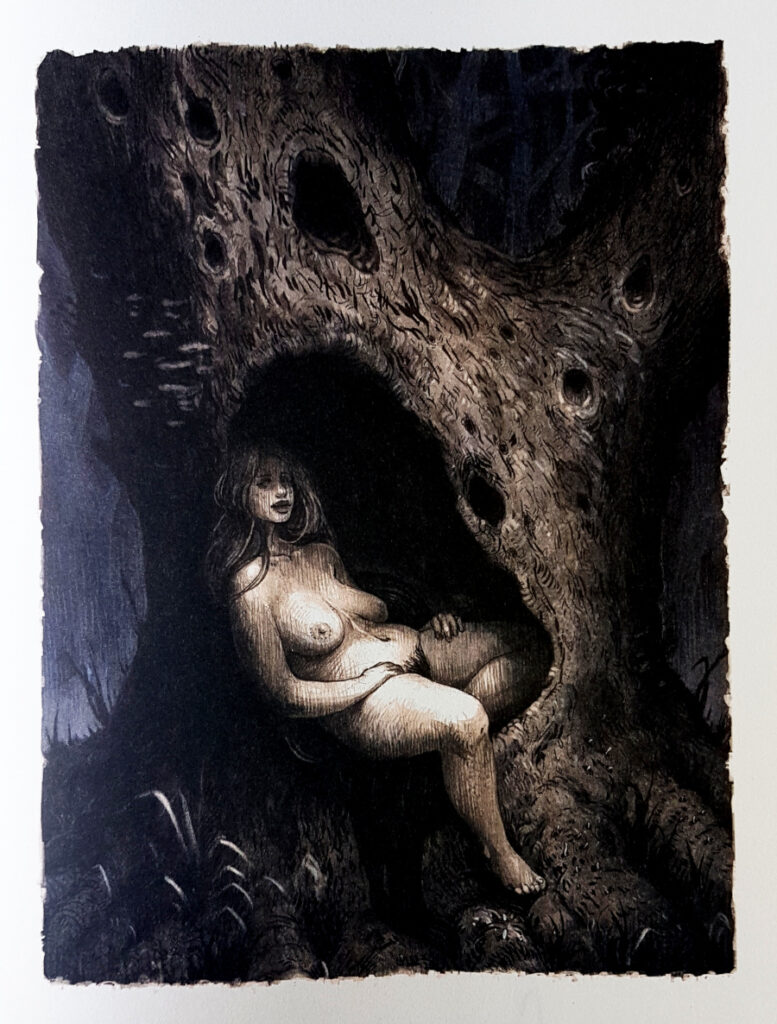

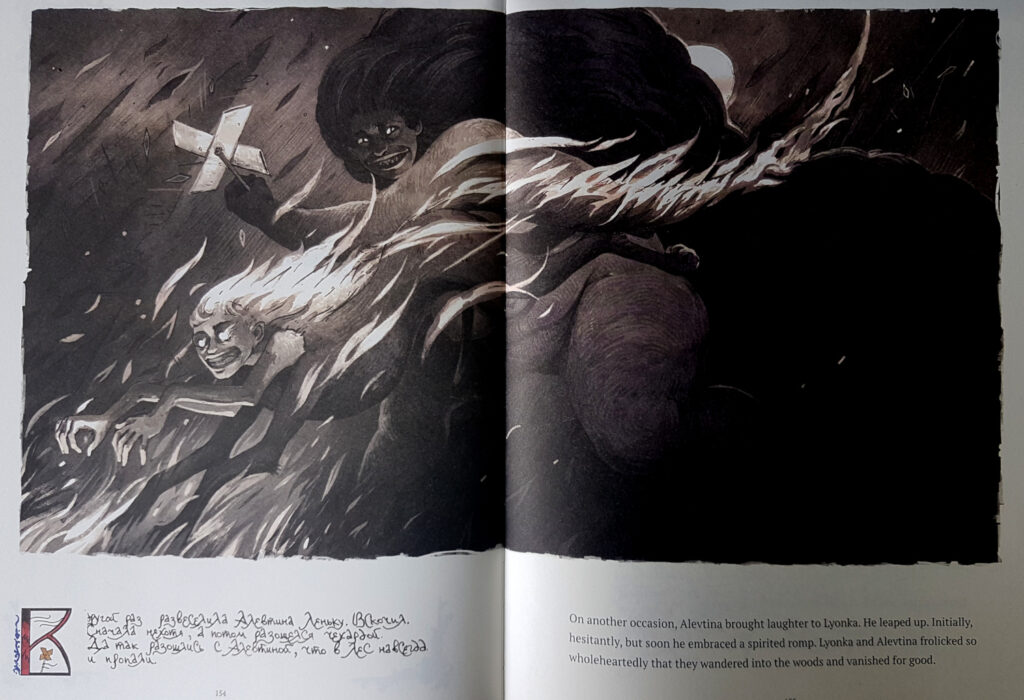

Russian by birth, currently based in Cyprus (he got the hell out of Dodge just before Putin went full-on Goon Squad), SkoLzki has recently put out a heartfelt volume of—I'm not quite sure how to describe them, exactly. Certain cultural outlets are calling it a collection of "noir fairy tales"; others call it a graphic novel. Neither description is wrong, exactly, but neither really captures the essence of this surrealistic, horrific, old-time Russian Grimm-tales volume. Limassol Today says that it's about "the author exploring his inner worlds and the depths of his personality through metaphors and symbols." Dmitriy tells me that it was forged against a backdrop of "longterm depression" (to which I can only say, Dude, if you could pull off something like this when you're depressed, I can barely imagine what you could come up with when you're ecstatic). The phrase "mystical noir illustrated tales" has been bandied about.

What we're looking at is an interlocking series of nearly a hundred vignettes clumped together into five larger—I'm going to call them dream tales—across 227 pages. The balance between word and picture varies from leaf to leaf. Sometimes a mural sprawls across two facing pages with barely three lines of text to accompany it; other times a whole page of cramped handwriting has to stand on its own without so much as a stick figure for support. The balance between light and dark is a lot more consistent: darkness always wins, even in the happy bits.

The volume is titled Alevtina and Tamara, but— while those two sisters are omnipresent throughout— the stories really orbit around their brother Lyonka. Lyonka dies pretty much out of the gate (he's a sickly child who likes beetles) but quickly resurrects and lives out the rest of the book as some kind of hyperphallic goat-human hybrid. A mother figure the size of a mountain breezes through the woods now and then; predators and prey are always prowling around at the edges of the page. Quests trivial and epic get wrapped up in a few lines of free verse, or abandoned halfway through when someone gets distracted by something shiny. A lot of time is spent in trees. It's a weird, dark, disjointed, strangely innocent celebration of the macabre, East-European-mythic right down to the marrow, and I loved it.

The whole thing is Dream Logic made flesh. I bet Lynch would be a fan. I bet Cronenberg would too.

But I may be way too late sending out invitations to this party; Alevtina and Tamara came out back in April. A little like Lyonka and his sisters, I too have been pulled this way and that by various deadlines, ambitions, and emergencies (I'm still a bit soggy after slurping the pond out of our basement during the recent flooding—Climate Change finally hits the Magic Bungalow).

It's not that SkoLzki's work is the kind that staledates, mind you. I might even call it "timeless", if I wasn't so afraid of descending into cliché. But SkoLzki's only released this thing in a limited edition of 300 beautifully embossed hardcovers; if they're not sold out now, they might be soon.

So if the renditions you're looking at here call out to you, head over to the store and check the inventory. Alevtina and Tamara isn't for everybody, but the people it is for really don't want to miss out on this.

—Our Father, Who Art in…

But father’s out of style, isn’t it? They call you The Admin now. The Board. Creation was a group project. I don’t know how they know that, but apparently there are a lot of you. Maybe I should call you Odin, or Thor. Or—Loki, given the way things are falling apart down here.

I always thought of you as the Heavenly Father, and all this time I’ve been praying to a committee.

This should feel different. It’s not faith any more, after all. It’s science. It’s, it’s evidence-based as they like to say. Before, I could just talk to you; it never occurred to me to wonder how you’d hear my small voice out of all these billions. I think maybe part of me was hoping you wouldn’t.

But now there’s a mechanism. We’re all just numbers now, that’s all we’ve ever been. These thoughts in my head are just math and state variables and logic gates. If that’s true — and what kind of flat-earther would deny it, after five years of merciless confirmation? — then the model can be frozen between one tick of the system clock and the next. The whole universe could have stopped dead a split-second ago, you could have poked around to your heart’s content. In the space between one breath and the next, you could have read every thought sparking in every creature in the universe.

Maybe you plot my soul on some kind of graph, shame on one axis, remorse on another. Maybe you can see every x about to tip over into y. You know what my next thought is going to be, and the thought after. Math is deterministic, after all. And when you’ve seen enough, you can just — start the cosmos up again and wander off for a coffee, and you don’t even have to hang around to hear the rest of this prayer because you already know how it’s going to end.

I don’t care what they call it these days: Handshaking Protocols, NPCUI, OSping, Divinity dialup. It’s still just prayer. I know that, because it feels the same as it always did. Even if nothing else does.

It feels like no one’s listening.

British architecture studio Dowen Farmer Architects has released plans for Portal Road, a multistorey ghost-kitchen tower block in west London that would shuttle food to a public food hall like the “Ministry of Magic”.

Dowen Farmer Architects’ proposal for the site, called Portal Road, would measure 28,000 square metres and consist of 12 storeys, 10 of which would be dedicated to 260 rentable ghost kitchens for use by local shops and restaurants.

Dowen Farmer designs cubic ten-storey tower for ghost kitchens | DEZEEN

Dispatch the maimed, the old, the weak, destroy the very world itself, for what is the point of life if the promise of fulfilment lies elsewhere?

There are very audible echoes in The Breakthrough of Peter Newbrook's The Asphyx and even of Peter Sasdy's The Stone Tape (scripted by Nigel Kneale), though it's actually based on a Daphne Du Maurier short story that predates both of them. Written in 1964 as a favour to Kingsley Amis, who was looking to put together an anthology of science fiction stories that was never published, the short story turned up in Du Maurier's 1971 collection Not After Midnight, and Other Stories. Graham Evans' television play, adapted by Clive Exton, is faithful to the story, but possibly as a consequence it's far too leisurely for its own good.

Computer specialist Stephen Saunders (Simon Ward) is sent by a government minister, Sir John Fowler (Anthony Nicholls), to a laboratory, Saxmere, situated on the salt marshes of the East Suffolk Coast, ostensibly to help maintain its fantastically clunky and oh-so-70s computer - all oscilloscopes, huge reels of tape and inexplicable banks of flashing lights. It turns out that the Saxmere team, made up of "Mac" Maclean (Brewster Mason), Robbie (Clive Swift), Janus (Roy Boyd), Ken (Thomas Ellice, here credited as Martin C. Thurley) and Cerberus the dog are trying to use the computer to help them capture human psychic energy (or "Force 6" as Mac dubs it) at the very moment of death. The terminally ill Ken is chosen as the test subject and a developmentally challenged young local girl, Niki (Rosalind McCabe), who shows some talent as a medium and seems to have caused poltergeist activity in the past, is drafted in to act as a conduit to him after he dies. The experiment seems to be a success but Niki reports back that Ken wants his life force released and the researchers realise with horror that their process captures more than just psychic energy.

— Kevin Lyons — EOFFTV - The Encyclopedia of Fantastic Film and Television

This is the text of "Big Germs," a revised and abridged story that I am reading at the SF in SF gathering in San Francisco at 6 pm on Sunday, June 23, 2024. This post appears on Medium as well. A longer and unrevised version of "Big Germs" appeared in BoingBoing on May 22, 2024. […]

The post Reading "Big Germs" at SF in SF. Print Sale. first appeared on Rudy's Blog.

Alien invasions and interspecies war are played-out tropes. In my Sqinks novel, sqink aliens are arriving. And I was going to have it be an alien invasion. But then I thought to ask Stephen Wolfram for a better idea. Me: What do the invading aliens want from us? Stephen: Anthropology? Understand an alien mind to […]

The post Invading Aliens? No, They're Exchange Students. (With Input from Stephen Wolfram) first appeared on Rudy's Blog.

On March 30, 2024, I spoke at an event sponsored by City Lights books, in honor of Terry Bisson. Here’s my three-frame pan of the crowd in front of me. And here’s a version of what I said. Terry Bisson 1942-2024 Memorial event at the Lost Church in SF. I met Terry in 1984. We […]

The post In Memory of Terry Bisson first appeared on Rudy's Blog.

Over the years I’ve written two non-fiction books on the fourth dimension, edited a book of C. H. Hinton’s writings on the fourth dimension, published a novel set in the fourth dimension, and worked the concept into a number of my other novels and short stories. Shortly before Christmas, 2023, Jeff Carreira interviewed me about […]

The post The Reality of the Fourth Dimension first appeared on Rudy's Blog.

Ever since I was a boy, I've been fascinated with movies. I loved the characters and the excitement—but most of all the stories. I wanted to be an actor. And I believed that I'd get to do the things that Indiana Jones did and go on exciting adventures. I even dreamed up ideas for movies that my friends and I could make and star in. But they never went any further. I did, however, end up working in user experience (UX). Now, I realize that there's an element of theater to UX—I hadn't really considered it before, but user research is storytelling. And to get the most out of user research, you need to tell a good story where you bring stakeholders—the product team and decision makers—along and get them interested in learning more.

Think of your favorite movie. More than likely it follows a three-act structure that's commonly seen in storytelling: the setup, the conflict, and the resolution. The first act shows what exists today, and it helps you get to know the characters and the challenges and problems that they face. Act two introduces the conflict, where the action is. Here, problems grow or get worse. And the third and final act is the resolution. This is where the issues are resolved and the characters learn and change. I believe that this structure is also a great way to think about user research, and I think that it can be especially helpful in explaining user research to others.

Three-act structure in movies (© 2024 StudioBinder. Image used with permission from StudioBinder.).

Use storytelling as a structure to do research

It's sad to say, but many have come to see research as being expendable. If budgets or timelines are tight, research tends to be one of the first things to go. Instead of investing in research, some product managers rely on designers or—worse—their own opinion to make the "right" choices for users based on their experience or accepted best practices. That may get teams some of the way, but that approach can so easily miss out on solving users' real problems. If we want to remain user-centered, this is something we should avoid. User research elevates design. It keeps it on track, pointing to problems and opportunities. Being aware of the issues with your product and reacting to them can help you stay ahead of your competitors.

In the three-act structure, each act corresponds to a part of the process, and each part is critical to telling the whole story. Let's look at the different acts and how they align with user research.

Act one: setup

The setup is all about understanding the background, and that's where foundational research comes in. Foundational research (also called generative, discovery, or initial research) helps you understand users and identify their problems. You're learning about what exists today, the challenges users have, and how the challenges affect them—just like in the movies. To do foundational research, you can conduct contextual inquiries or diary studies (or both!), which can help you start to identify problems as well as opportunities. It doesn't need to be a huge investment in time or money.

Erika Hall writes about minimum viable ethnography, which can be as simple as spending 15 minutes with a user and asking them one thing: "'Walk me through your day yesterday.' That's it. Present that one request. Shut up and listen to them for 15 minutes. Do your damndest to keep yourself and your interests out of it. Bam, you're doing ethnography." According to Hall, "[This] will probably prove quite illuminating. In the highly unlikely case that you didn't learn anything new or useful, carry on with enhanced confidence in your direction."

This makes total sense to me. And I love that this makes user research so accessible. You don't need to prepare a lot of documentation; you can just recruit participants and do it! This can yield a wealth of information about your users, and it'll help you better understand them and what's going on in their lives. That's really what act one is all about: understanding where users are coming from.

Jared Spool talks about the importance of foundational research and how it should form the bulk of your research. If you can draw from any additional user data that you can get your hands on, such as surveys or analytics, that can supplement what you've heard in the foundational studies or even point to areas that need further investigation. Together, all this data paints a clearer picture of the state of things and all its shortcomings. And that's the beginning of a compelling story. It's the point in the plot where you realize that the main characters—or the users in this case—are facing challenges that they need to overcome. Like in the movies, this is where you start to build empathy for the characters and root for them to succeed. And hopefully stakeholders are now doing the same. Their sympathy may be with their business, which could be losing money because users can't complete certain tasks. Or maybe they do empathize with users' struggles. Either way, act one is your initial hook to get the stakeholders interested and invested.

Once stakeholders begin to understand the value of foundational research, that can open doors to more opportunities that involve users in the decision-making process. And that can guide product teams toward being more user-centered. This benefits everyone—users, the product, and stakeholders. It's like winning an Oscar in movie terms—it often leads to your product being well received and successful. And this can be an incentive for stakeholders to repeat this process with other products. Storytelling is the key to this process, and knowing how to tell a good story is the only way to get stakeholders to really care about doing more research.

This brings us to act two, where you iteratively evaluate a design or concept to see whether it addresses the issues.

Act two: conflict

Act two is all about digging deeper into the problems that you identified in act one. This usually involves directional research, such as usability tests, where you assess a potential solution (such as a design) to see whether it addresses the issues that you found. The issues could include unmet needs or problems with a flow or process that's tripping users up. Like act two in a movie, more issues will crop up along the way. It's here that you learn more about the characters as they grow and develop through this act.

Usability tests should typically include around five participants according to Jakob Nielsen, who found that that number of users can usually identify most of the problems: "As you add more and more users, you learn less and less because you will keep seeing the same things again and again… After the fifth user, you are wasting your time by observing the same findings repeatedly but not learning much new."

There are parallels with storytelling here too; if you try to tell a story with too many characters, the plot may get lost. Having fewer participants means that each user's struggles will be more memorable and easier to relay to other stakeholders when talking about the research. This can help convey the issues that need to be addressed while also highlighting the value of doing the research in the first place.
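Nielsen's five-user guideline rests on a simple model he published with Tom Landauer: if a single test user exposes any given usability problem with probability L, then n users are expected to expose a share of 1 - (1 - L)^n of the problems. The Python sketch below assumes Nielsen's reported cross-project average of L = 0.31; the L for your own product could be quite different, so treat the numbers as illustrative rather than a rule. The function names are hypothetical.

```python
def share_found(n_users: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems exposed by n_users.

    Nielsen-Landauer model: share = 1 - (1 - L) ** n, where L
    (discovery_rate) is the assumed probability that one user exposes
    a given problem. 0.31 is Nielsen's reported average, not a constant.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("discovery_rate must be between 0 and 1")
    return 1.0 - (1.0 - discovery_rate) ** n_users


def marginal_gain(n_users: int, discovery_rate: float = 0.31) -> float:
    """Extra share of problems the n-th user adds over n - 1 users."""
    if n_users < 1:
        raise ValueError("n_users must be at least 1")
    return share_found(n_users, discovery_rate) - share_found(n_users - 1, discovery_rate)


if __name__ == "__main__":
    # Print the running total and the diminishing contribution of each user.
    for n in range(1, 11):
        print(f"{n:2d} users: {share_found(n):.0%} found, "
              f"user #{n} adds {marginal_gain(n):.1%}")
```

Under this assumed L, five users come out at roughly 84% of problems found, and every user after the fifth adds less than five percentage points: the diminishing return Nielsen describes.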

Researchers have run usability tests in person for decades, but you can also conduct usability tests remotely using tools like Microsoft Teams, Zoom, or other teleconferencing software. This approach has become increasingly popular since the beginning of the pandemic, and it works well. You can think of in-person usability tests like going to a play and remote sessions as more like watching a movie. There are advantages and disadvantages to each. In-person usability research is a much richer experience. Stakeholders can experience the sessions with other stakeholders. You also get real-time reactions—including surprise, agreement, disagreement, and discussions about what they're seeing. Much like going to a play, where audiences get to take in the stage, the costumes, the lighting, and the actors' interactions, in-person research lets you see users up close, including their body language, how they interact with the moderator, and how the scene is set up.

If in-person usability testing is like watching a play—staged and controlled—then conducting usability testing in the field is like immersive theater where any two sessions might be very different from one another. You can take usability testing into the field by creating a replica of the space where users interact with the product and then conduct your research there. Or you can go out to meet users at their location to do your research. With either option, you get to see how things work in context: things come up that wouldn't have in a lab environment, and conversations can shift in entirely different directions. As researchers, you have less control over how these sessions go, but this can sometimes help you understand users even better. Meeting users where they are can provide clues to the external forces that could be affecting how they use your product. In-person usability tests provide another level of detail that's often missing from remote usability tests.

That's not to say that the "movies"—remote sessions—aren't a good option. Remote sessions can reach a wider audience. They allow a lot more stakeholders to be involved in the research and to see what's going on. And they open the doors to a much wider geographical pool of users. But with any remote session there is the potential for wasted time if participants can't log in or get their microphone working.

The benefit of usability testing, whether remote or in person, is that you get to see real users interact with the designs in real time, and you can ask them questions to understand their thought processes and grasp of the solution. This can help you not only identify problems but also glean why they're problems in the first place. Furthermore, you can test hypotheses and gauge whether your thinking is correct. By the end of the sessions, you'll have a much clearer picture of how usable the designs are and whether they work for their intended purposes. Act two is the heart of the story—where the excitement is—but there can be surprises too. This is equally true of usability tests. Often, participants will say unexpected things, which change the way that you look at things—and these twists in the story can move things in new directions.

Unfortunately, user research is sometimes seen as expendable. And too often usability testing is the only research process that some stakeholders think that they ever need. In fact, if the designs that you're evaluating in the usability test aren't grounded in a solid understanding of your users (foundational research), there's not much to be gained by doing usability testing in the first place. That's because you're narrowing the focus of what you're getting feedback on, without understanding the users' needs. As a result, there's no way of knowing whether the designs might solve a problem that users have. It's only feedback on a particular design in the context of a usability test.

On the other hand, if you only do foundational research, while you might have set out to solve the right problem, you won't know whether the thing that you're building will actually solve that. This illustrates the importance of doing both foundational and directional research.

In act two, stakeholders will—hopefully—get to watch the story unfold in the user sessions, which surface the highs and lows of the current design and create conflict and tension. And in turn, this can help motivate stakeholders to address the issues that come up.

Act three: resolution

While the first two acts are about understanding the background and the tensions that can propel stakeholders into action, the third part is about resolving the problems from the first two acts. While it's important to have an audience for the first two acts, it's crucial that they stick around for the final act. That means the whole product team, including developers, UX practitioners, business analysts, delivery managers, product managers, and any other stakeholders that have a say in the next steps. It allows the whole team to hear users' feedback together, ask questions, and discuss what's possible within the project's constraints. And it lets the UX research and design teams clarify, suggest alternatives, or give more context behind their decisions. So you can get everyone on the same page and get agreement on the way forward.

This act is mostly told in voiceover with some audience participation. The researcher is the narrator, who paints a picture of the issues and what the future of the product could look like given the things that the team has learned. They give the stakeholders their recommendations and their guidance on creating this vision.

Nancy Duarte in the Harvard Business Review offers an approach to structuring presentations that follow a persuasive story. "The most effective presenters use the same techniques as great storytellers: By reminding people of the status quo and then revealing the path to a better way, they set up a conflict that needs to be resolved," writes Duarte. "That tension helps them persuade the audience to adopt a new mindset or behave differently."

Picture this. You've joined a squad at your company that's designing new product features with an emphasis on automation or AI. Or your company has just implemented a personalization engine. Either way, you're designing with data. Now what? When it comes to designing for personalization, there are many cautionary tales, no overnight successes, and few guides for the perplexed.

Between the fantasy of getting it right and the fear of it going wrong—like when we encounter "persofails" in the vein of a company repeatedly imploring everyday consumers to buy additional toilet seats—the personalization gap is real. It's an especially confounding place to be a digital professional without a map, a compass, or a plan.

For those of you venturing into personalization, there's no Lonely Planet and few tour guides because effective personalization is so specific to each organization's talent, technology, and market position.

But you can ensure that your team has packed its bags sensibly.

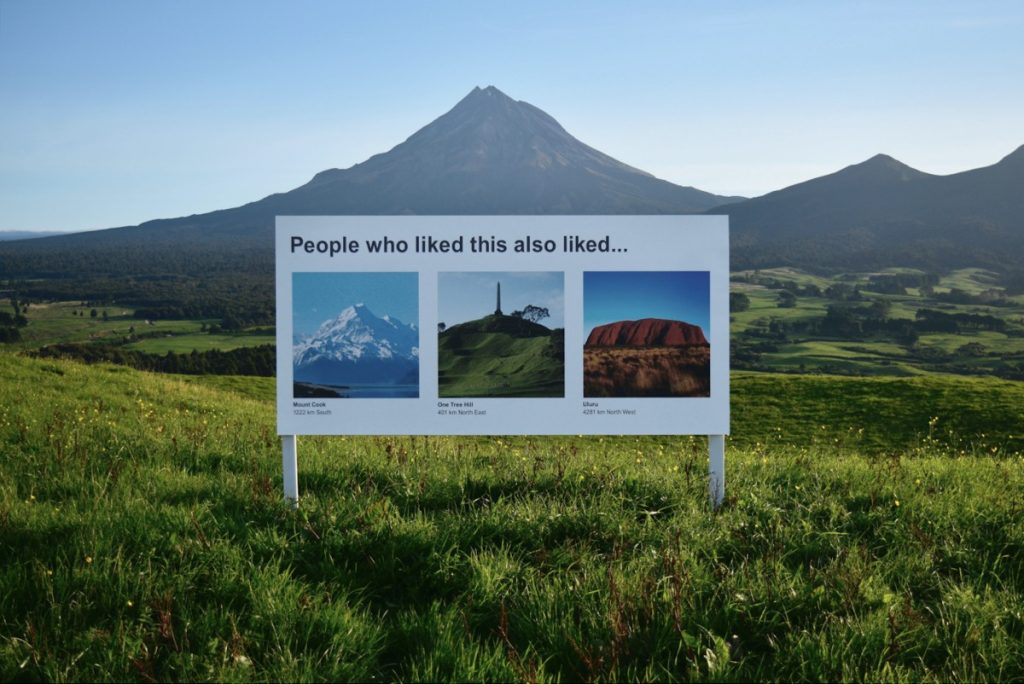

Designing for personalization makes for strange bedfellows. A savvy art-installation satire on the challenges of humane design in the era of the algorithm. Credit: Signs of the Times, Scott Kelly and Ben Polkinghorne.


There's a DIY formula to increase your chances of success. At minimum, you'll defuse your boss's irrational exuberance. Before the party, you'll need to prepare effectively.

We call it prepersonalization.

Behind the music

Consider Spotify's DJ feature, which debuted this past year.

https://www.youtube.com/watch?v=ok-aNnc0Dko

We're used to seeing the polished final result of a personalization feature. Before the year-end award, the making-of backstory, or the behind-the-scenes victory lap, a personalized feature had to be conceived, budgeted, and prioritized. Before any personalization feature goes live in your product or service, it lives amid a backlog of worthy ideas for expressing customer experiences more dynamically.

So how do you know where to place your personalization bets? How do you design consistent interactions that won't trip up users or, worse, breed mistrust? We've found that many budgeted programs first needed one or more workshops to convene key stakeholders and internal customers of the technology before they could justify their ongoing investments. Make yours count.

From Big Tech to fledgling startups, we've seen the same evolution up close with our clients. In our experience working on small and large personalization efforts, a program's ultimate track record (and its ability to weather tough questions, work steadily toward shared answers, and organize its design and technology efforts) turns on how effectively these prepersonalization activities play out.

Time and again, we've seen effective workshops separate future success stories from unsuccessful efforts, saving countless hours, resources, and collective well-being in the process.

A personalization practice involves a multiyear effort of testing and feature development. It's not a switch-flip moment in your tech stack. It's best managed as a backlog that often evolves through three steps:

- customer experience optimization (CXO, also known as A/B testing or experimentation)

- always-on automations (whether rules-based or machine-generated)

- mature features or standalone product development (such as Spotify's DJ experience)

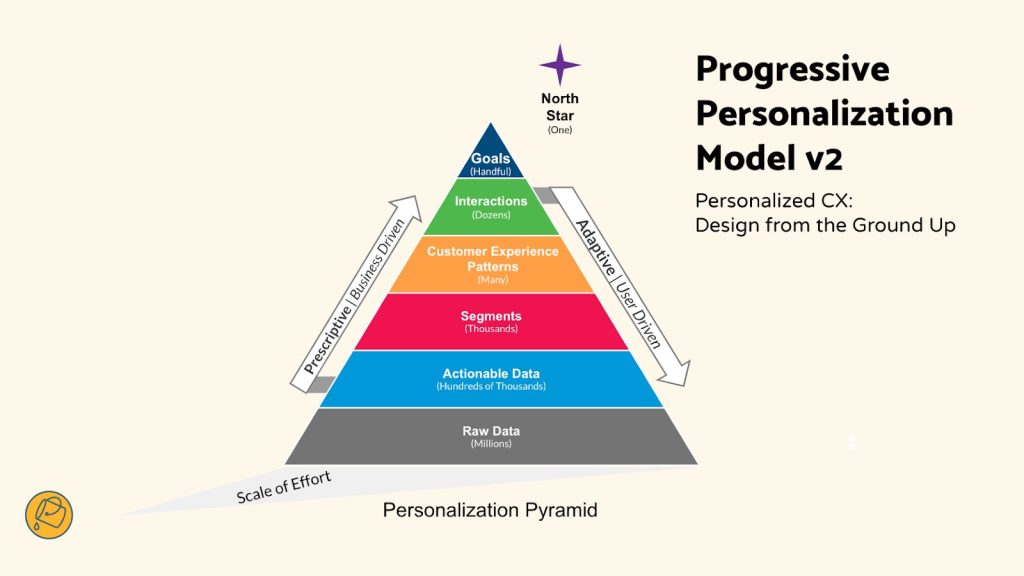

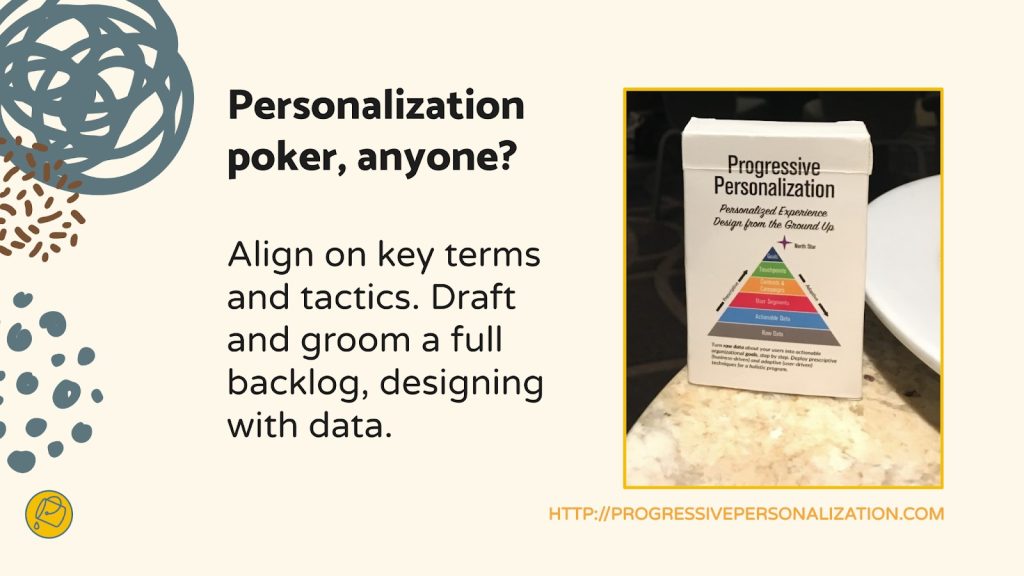

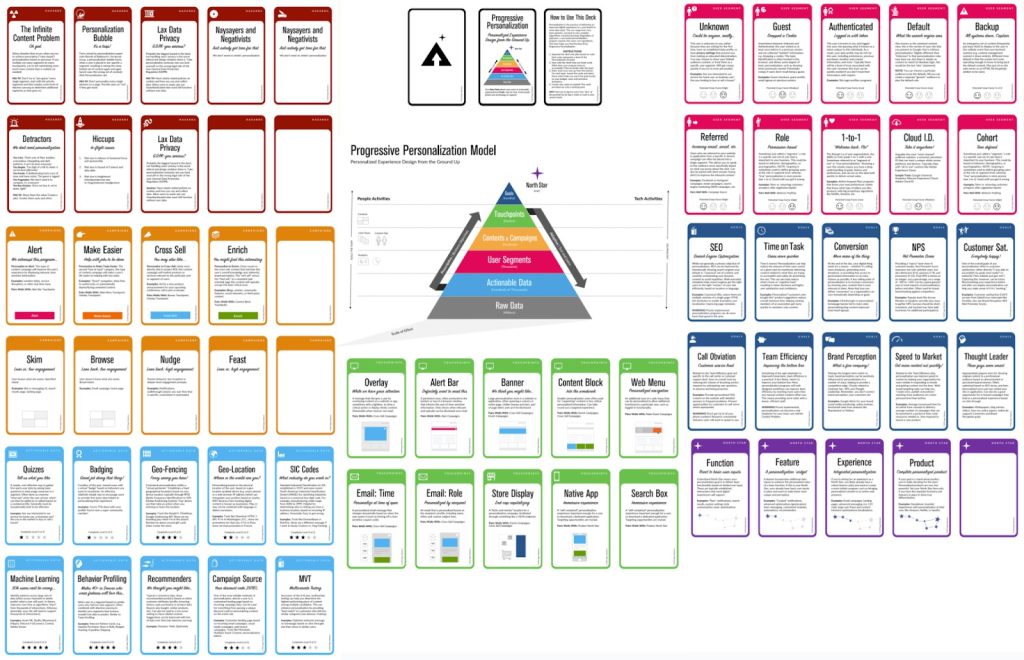

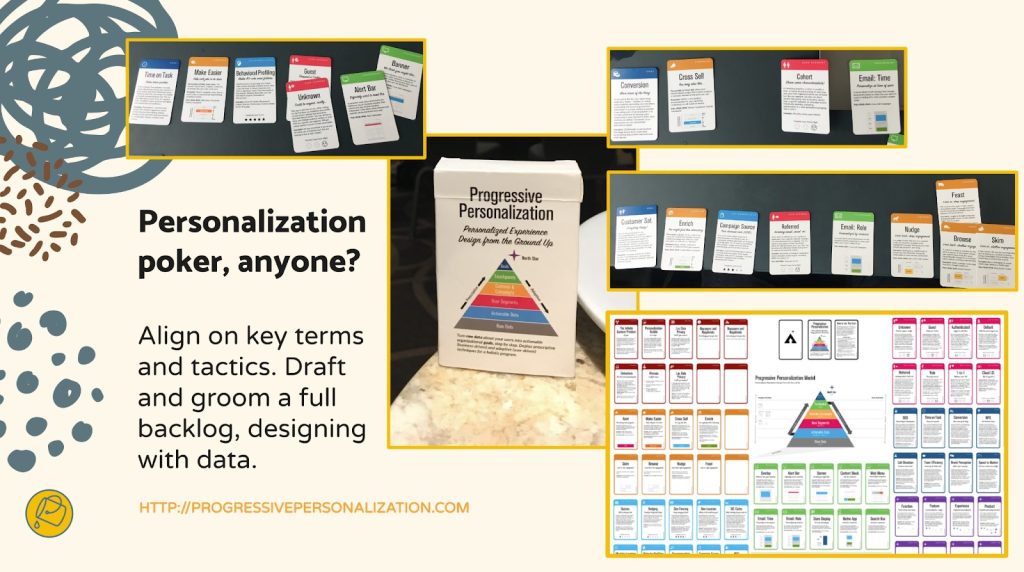

This is why we created our progressive personalization framework and why we're field-testing an accompanying deck of cards: we believe that there's a base grammar, a set of "nouns and verbs" that your organization can use to design experiences that are customized, personalized, or automated. You won't need these cards. But we strongly recommend that you create something similar, whether digital or physical.

Set your kitchen timer

How long does it take to cook up a prepersonalization workshop? The surrounding assessment activities that we recommend including can (and often do) span weeks. For the core workshop, we recommend aiming for two to three days. Here's a summary of our broader approach along with details on the essential first-day activities.

The full arc of the wider workshop is threefold:

- Kickstart: This sets the terms of engagement as you focus on the opportunity as well as the readiness and drive of your team and your leadership.

- Plan your work: This is the heart of the card-based workshop activities where you specify a plan of attack and the scope of work.

- Work your plan: This phase is all about creating a competitive environment for team participants to individually pitch their own pilots that each contain a proof-of-concept project, its business case, and its operating model.

Give yourself at least a day, split into two large time blocks, to power through a concentrated version of those first two phases.

Kickstart: Whet your appetite

We call the first lesson the "landscape of connected experience." It explores the personalization possibilities in your organization. A connected experience, in our parlance, is any UX requiring the orchestration of multiple systems of record on the backend. This could be a content-management system combined with a marketing-automation platform. It could be a digital-asset manager combined with a customer-data platform.

Spark conversation by naming consumer examples and business-to-business examples of connected experience interactions that you admire, find familiar, or even dislike. This should cover a representative range of personalization patterns, including automated app-based interactions (such as onboarding sequences or wizards), notifications, and recommenders. We have a catalog of these in the cards. Here's a list of 142 different interactions to jog your thinking.

This is all about setting the table. What are the possible paths for the practice in your organization? If you want a broader view, here's a long-form primer and a strategic framework.

Assess each example that you discuss for its complexity and the level of effort that you estimate that it would take for your team to deliver that feature (or something similar). In our cards, we divide connected experiences into five levels: functions, features, experiences, complete products, and portfolios. Size your own build here. This will help to focus the conversation on the merits of ongoing investment as well as the gap between what you deliver today and what you want to deliver in the future.

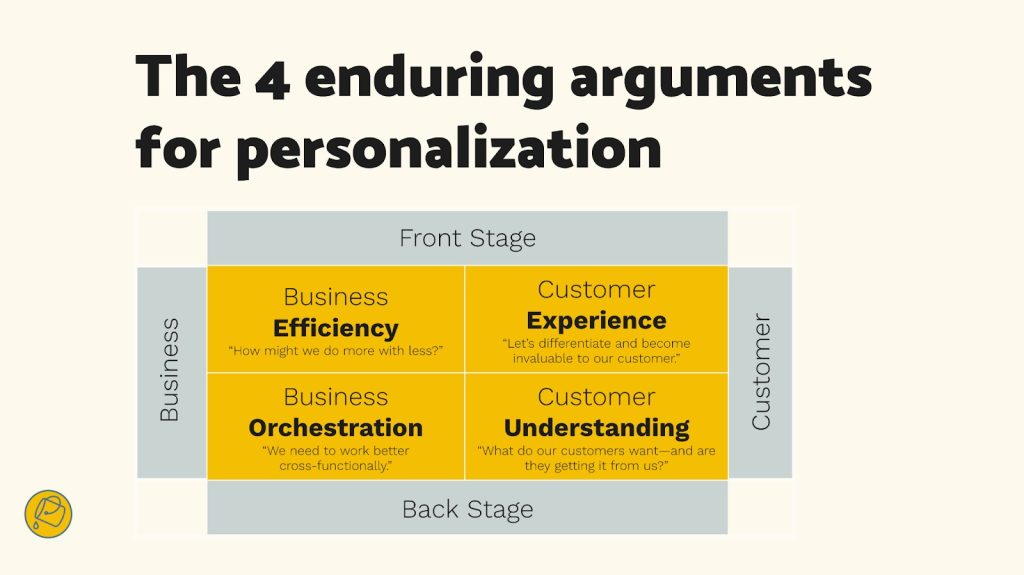

Next, have your team plot each idea on the following 2×2 grid, which lays out the four enduring arguments for a personalized experience. This is critical because it emphasizes how personalization can not only help your external customers but also affect your own ways of working. It's also a reminder (which is why we used the word argument earlier) of the broader effort beyond these tactical interventions.

Getting intentional about the desired outcomes is an important component to a large-scale personalization program. Credit: Bucket Studio.


Each team member should vote on where they see your product or service putting its emphasis. Naturally, you can't prioritize all of them. The intention here is to flesh out how different departments may view their own upsides to the effort, which can vary from one to the next. Documenting your desired outcomes lets you know how the team internally aligns across representatives from different departments or functional areas.

The third and final kickstart activity is about naming your personalization gap. Is your customer journey well documented? Will data and privacy compliance be too big of a challenge? Do you have content metadata needs that you have to address? (We're pretty sure that you do: it's just a matter of recognizing the relative size of that need and its remedy.) In our cards, we've noted a number of program risks, including common team dispositions. Our Detractor card, for example, lists six stakeholder behaviors that hinder progress.

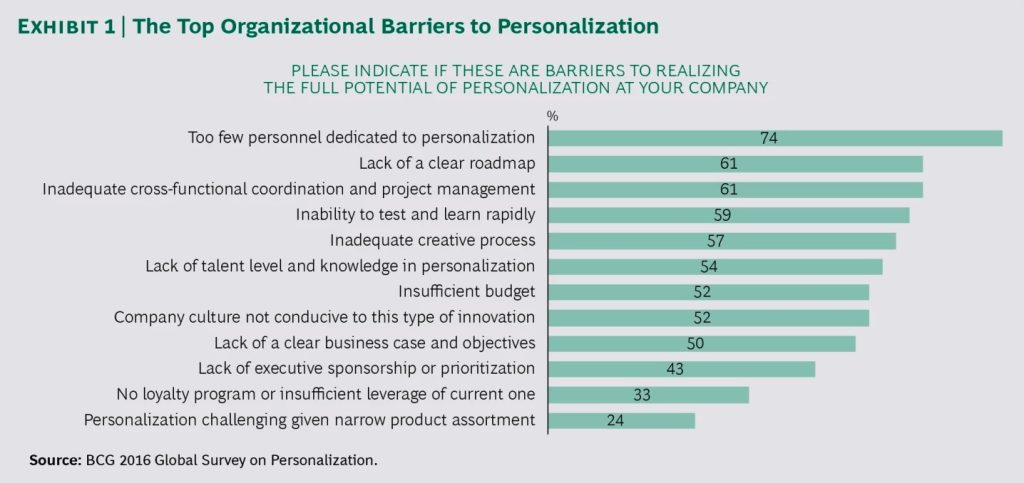

Effectively collaborating and managing expectations is critical to your success. Consider the potential barriers to your future progress. Press the participants to name specific steps to overcome or mitigate those barriers in your organization. As studies have shown, personalization efforts face many common barriers.

The largest management consultancies have established practice areas in personalization, and they regularly research program risks and challenges. Credit: Boston Consulting Group.


At this point, have you discussed sample interactions, emphasized a key area of benefit, and flagged key gaps? Good. You're ready to continue.

Hit that test kitchen

Next, let's look at what you'll need to bring your personalization recipes to life. Personalization engines, which are robust software suites for automating and expressing dynamic content, can intimidate new customers. Their capabilities are sweeping and powerful, and they present broad options for how your organization can conduct its activities. This raises the question: Where do you begin when you're configuring a connected experience?

What's important here is to avoid treating the installed software as if it were a dream kitchen from some fantasy remodeling project (as one of our client executives memorably put it). These software engines are more like test kitchens where your team can begin devising, tasting, and refining the snacks and meals that will become a part of your personalization program's regularly evolving menu.

Progressive personalization, a framework for designing connected experiences. Credit: Bucket Studio and Colin Eagan.


The ultimate menu of the prioritized backlog will come together over the course of the workshop. And creating "dishes" is the way that you'll have individual team stakeholders construct personalized interactions that serve their needs or the needs of others.

The dishes will come from recipes, and those recipes have set ingredients.

In the same way that ingredients form a recipe, you can also create cards to break down a personalized interaction into its constituent parts. Credit: Bucket Studio and Colin Eagan.

Verify your ingredients


Like a good product manager, you'll make sure, and validate with the right stakeholders present, that you have all the ingredients on hand to cook up your desired interaction (or that you can work out what needs to be added to your pantry). These ingredients include the audience that you're targeting, content and design elements, the context for the interaction, and your measure for how it'll come together.

This isn't just about discovering requirements. Documenting your personalizations as a series of if-then statements lets the team:

- compare findings toward a unified approach for developing features, not unlike when artists paint with the same palette;

- specify a consistent set of interactions that users find uniform or familiar;

- and develop parity across performance measurements and key performance indicators too.

This helps you streamline your designs and your technical efforts while you deliver a shared palette of core motifs of your personalized or automated experience.

Compose your recipe

What ingredients are important to you? Think of a who-what-when-why construct:

- Who are your key audience segments or groups?

- What kind of content will you give them, in what design elements, and under what circumstances?

- And for which business and user benefits?

We first developed these cards and card categories five years ago. We regularly play-test their fit with conference audiences and clients. And we still encounter new possibilities. But they all follow an underlying who-what-when-why logic.

Here are three examples for a subscription-based reading app, which you can generally follow along with right to left in the cards in the accompanying photo below.

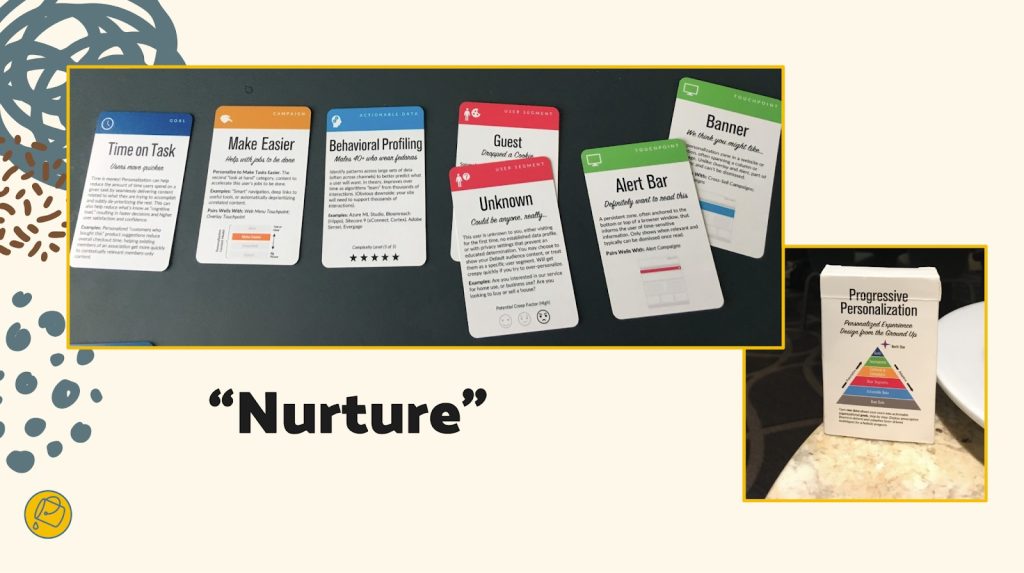

- Nurture personalization: When a guest or an unknown visitor interacts with a product title, a banner or alert bar appears that makes it easier for them to encounter a related title they may want to read, saving them time.

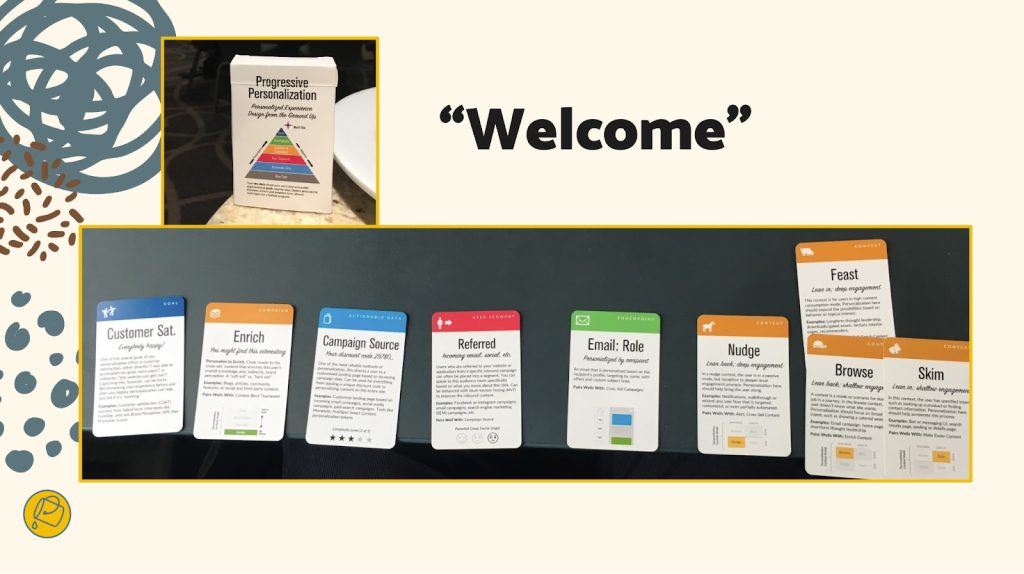

- Welcome automation: When there's a newly registered user, an email is generated to call out the breadth of the content catalog and to make them a happier subscriber.

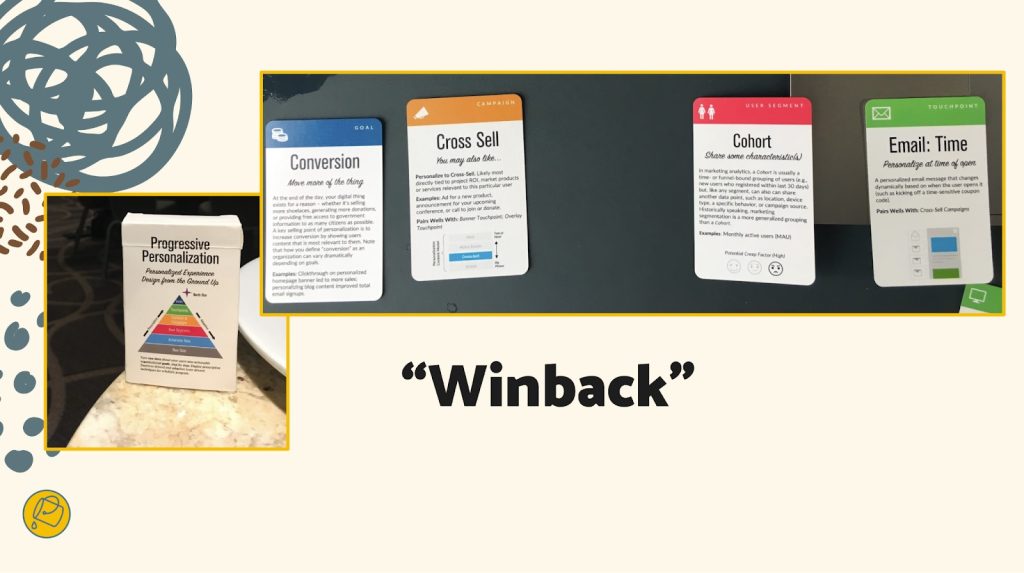

- Winback automation: Before their subscription lapses or after a recent failed renewal, a user is sent an email that gives them a promotional offer to suggest that they reconsider renewing or to remind them to renew.

A "nurture" automation may trigger a banner or alert box that promotes content that makes it easier for users to complete a common task, based on behavioral profiling of two user types. Credit: Bucket Studio.
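The three reading-app recipes above can be written down as the if-then statements recommended earlier, with one field per part of the who-what-when-why construct. Here's a minimal Python sketch of that idea. The `Context` and `Recipe` types, the event names, and the seven-day lapse window are all hypothetical, chosen for illustration; they aren't taken from the cards or from any particular personalization engine.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical snapshot of one user interaction; field names are illustrative.
@dataclass
class Context:
    is_registered: bool
    event: str                           # e.g. "viewed_title", "registered", "renewal_failed"
    days_to_lapse: Optional[int] = None  # days until the subscription lapses, if known

# One recipe: IF the audience (who) and the trigger (when) both match,
# THEN deliver this content in this design element (what) for a stated benefit (why).
@dataclass
class Recipe:
    name: str
    who: Callable[[Context], bool]
    when: Callable[[Context], bool]
    what: str
    why: str

LAPSE_WINDOW_DAYS = 7  # assumption: how early "before their subscription lapses" begins

RECIPES: List[Recipe] = [
    Recipe(
        name="Nurture personalization",
        who=lambda c: not c.is_registered,         # guest or unknown visitor
        when=lambda c: c.event == "viewed_title",  # interacts with a product title
        what="banner or alert bar suggesting a related title",
        why="save the reader time",
    ),
    Recipe(
        name="Welcome automation",
        who=lambda c: c.is_registered,
        when=lambda c: c.event == "registered",    # newly registered user
        what="email calling out the breadth of the catalog",
        why="a happier subscriber",
    ),
    Recipe(
        name="Winback automation",
        who=lambda c: c.is_registered,
        # a recent failed renewal, or a lapse coming up within the window
        when=lambda c: (
            c.event == "renewal_failed"
            or (c.days_to_lapse is not None and c.days_to_lapse <= LAPSE_WINDOW_DAYS)
        ),
        what="email with a promotional offer or a renewal reminder",
        why="keep the subscriber",
    ),
]

def matching_recipes(ctx: Context) -> List[str]:
    """Return the names of every recipe whose who- and when-conditions both hold."""
    return [r.name for r in RECIPES if r.who(ctx) and r.when(ctx)]

print(matching_recipes(Context(is_registered=False, event="viewed_title")))
# prints ['Nurture personalization']
```

Keeping "what" and "why" as plain text alongside the executable conditions means the same list can serve as the shared palette the team reviews in the workshop; a real engine would express those pieces through its own configuration.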